NVIDIA Unveils GR00T N1.7: Open VLA Model for Humanoid Robots

Sonic Intelligence

NVIDIA releases GR00T N1.7, an open VLA model for humanoid robots.

Explain Like I'm Five

"Imagine teaching a robot to do tricky things with its hands, like building a LEGO set. NVIDIA made a special AI brain, called GR00T, that learns by watching thousands of hours of humans doing stuff. This makes the robot much better at using its hands, and it's like we found a secret rule that says the more human videos it watches, the smarter its hands get!"

Deep Intelligence Analysis

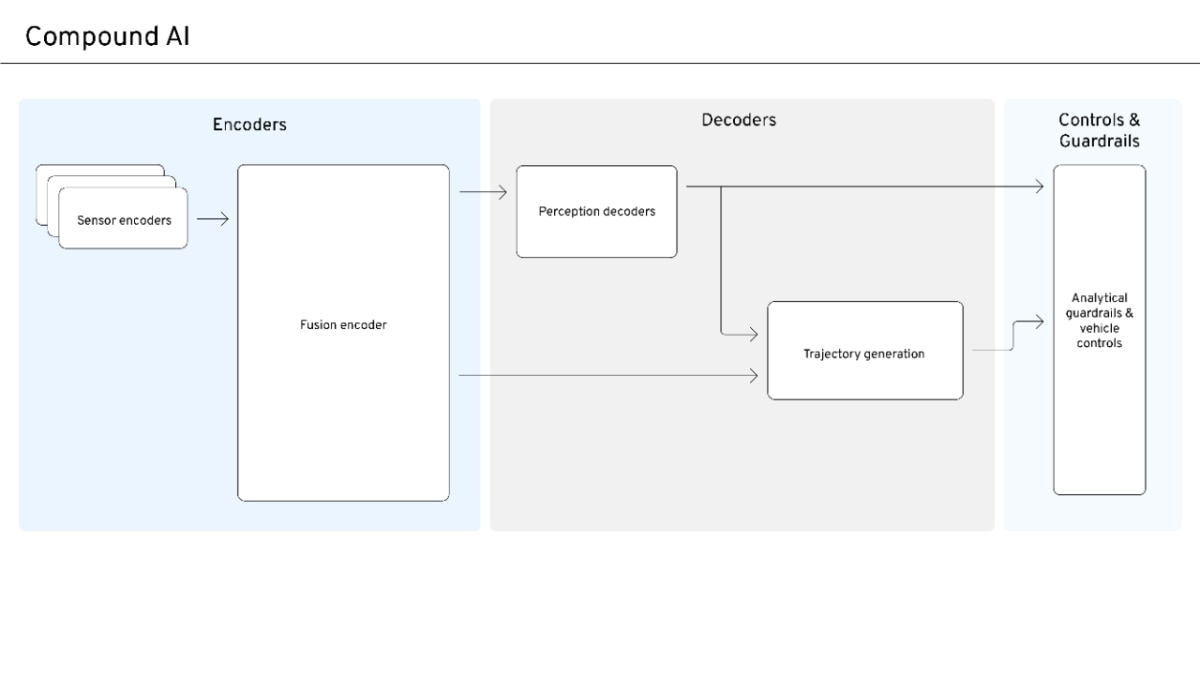

A key technical innovation in GR00T N1.7 is its Action Cascade architecture, which decouples high-level reasoning from low-level motor control. A System 2 Vision-Language Model (Cosmos-Reason2-2B) handles task decomposition and multi-step reasoning, while a System 1 Diffusion Transformer (DiT) translates those high-level outputs into real-time motor commands. This dual-system design improves reliability on complex workflows and extends dexterous manipulation to finger-level control for intricate tasks such as small-parts assembly. The model was validated on loco-manipulation, tabletop, and bimanual tasks across several robot platforms, which underscores its versatility.
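The division of labor between the two systems can be illustrated with a minimal Python sketch. Everything in it is a hypothetical stand-in rather than NVIDIA's API: `system2_plan` replaces the VLM with a simple string split, `system1_act` imitates diffusion-style refinement by moving a noise sample toward a toy target, and the choice to run several control steps per planned subtask is an assumption made for illustration.

```python
import random
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class Observation:
    frames: Sequence[object]   # RGB image frames (opaque placeholders here)
    instruction: str           # natural-language task instruction
    joint_state: List[float]   # robot proprioceptive state


def system2_plan(obs: Observation) -> List[str]:
    """Hypothetical stand-in for the System 2 VLM: split the instruction
    into ordered subtasks. A real VLM would also reason over obs.frames."""
    steps = [s.strip() for s in obs.instruction.split(" then ")]
    return [s for s in steps if s]


def system1_act(subtask: str, joint_state: List[float],
                denoise_steps: int = 4, seed: int = 0) -> List[float]:
    """Hypothetical stand-in for the System 1 Diffusion Transformer: start
    from noise and refine it into a continuous action vector, conditioned
    on the subtask and the proprioceptive state."""
    rng = random.Random(f"{seed}:{subtask}")
    # Toy conditioning: a pseudo-target near the current joint positions.
    target = [q + rng.uniform(-0.1, 0.1) for q in joint_state]
    action = [rng.gauss(0.0, 1.0) for _ in joint_state]  # pure noise
    for _ in range(denoise_steps):
        # Each refinement step moves the sample halfway toward the target.
        action = [a + 0.5 * (t - a) for a, t in zip(action, target)]
    return action


def run_episode(obs: Observation,
                control_steps_per_subtask: int = 3) -> List[List[float]]:
    """Plan once with System 2, then run several System 1 steps per subtask."""
    actions = []
    state = list(obs.joint_state)
    for subtask in system2_plan(obs):
        for _ in range(control_steps_per_subtask):
            action = system1_act(subtask, state)
            actions.append(action)
            state = action  # toy assumption: the robot reaches the command
    return actions


if __name__ == "__main__":
    obs = Observation(
        frames=[],
        instruction="pick up the gear then insert it into the housing",
        joint_state=[0.0] * 7,
    )
    actions = run_episode(obs)
    print(len(actions), len(actions[0]))  # 6 7
```

The point of the sketch is the interface: the planner emits discrete subtasks, and the controller only ever sees one subtask plus the current joint state, so either side can be swapped out without touching the other.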

Perhaps the most impactful development is the discovery of the first scaling law for robot dexterity, derived from training on 20,854 hours of human egocentric video. The research shows a predictable, consistent improvement in dexterous manipulation as more human data is added, which reduces dependence on large-scale teleoperation data collection. The scaling law offers a data-driven pathway for future robot development: more capable, adaptable humanoid robots that can perform contact-rich tasks previously beyond the reach of generalist models. The implications extend beyond specific applications and suggest a fundamental shift in how robotic intelligence is acquired and scaled.
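What "predictable improvement with more data" means in practice can be sketched in a few lines of Python. The article does not state the functional form of the scaling law, so the example assumes a power law, `error = a * hours**(-b)`, which is a common choice in scaling-law work. The `error` metric, the constants, and the data points are synthetic placeholders generated from a known curve, not NVIDIA's measurements.

```python
import math
from typing import List, Tuple


def fit_power_law(hours: List[float], error: List[float]) -> Tuple[float, float]:
    """Least-squares fit of error = a * hours**(-b) in log-log space.

    Returns the pair (a, b)."""
    xs = [math.log(h) for h in hours]
    ys = [math.log(e) for e in error]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return math.exp(intercept), -slope


# SYNTHETIC placeholder data from a known curve (a = 2.0, b = 0.3).
hours = [100.0, 1_000.0, 5_000.0, 20_000.0]
error = [2.0 * h ** -0.3 for h in hours]

a, b = fit_power_law(hours, error)

# Extrapolate to a larger, hypothetical dataset size.
predicted = a * 40_000.0 ** (-b)
print(f"a={a:.3f}, b={b:.3f}, predicted error at 40k hours={predicted:.4f}")
```

If real measurements fall on a straight line in log-log space, the fitted exponent `b` gives a rough estimate of how much additional video is needed to reach a target level of performance, which is what makes a scaling law useful for planning data collection.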

Visual Intelligence

flowchart LR
    A["RGB Image Frames"] --> B["System 2 VLM"]
    C["Language Instruction"] --> B
    D["Robot Proprioceptive State"] --> E["System 1 DiT"]
    B -- "High-Level Action Tokens" --> E
    E -- "Continuous Action Vectors" --> F["Robot Actuation"]

Auto-generated diagram · AI-interpreted flow

Impact Assessment

The release of an open-source, commercially licensed VLA foundation model like GR00T N1.7, together with a demonstrated dexterity scaling law, marks an inflection point for humanoid robotics. The combination accelerates development, lowers entry barriers, and promises more capable, adaptable robots for industrial and service applications.

Key Details

- GR00T N1.7 is a 3B-parameter Vision-Language-Action (VLA) model for humanoid robots.

- It is open-source and commercially licensed, available on Hugging Face and GitHub.

- The model employs an Action Cascade architecture, separating high-level reasoning (System 2 VLM) from low-level motor control (System 1 Diffusion Transformer).

- GR00T N1.7 was trained on 20,854 hours of human egocentric video, a significant increase over the robot teleoperation data used previously.

- NVIDIA reports the first scaling law for robot dexterity, showing predictable improvement as more human egocentric data is added.

Optimistic Outlook

GR00T N1.7's open availability and commercial license will democratize advanced humanoid robotics, fostering rapid innovation and deployment across manufacturing, logistics, and even home environments. The dexterity scaling law suggests a clear path to increasingly capable robots, potentially leading to a future where humanoids seamlessly integrate into complex, unstructured tasks.

Pessimistic Outlook

Promising as the technology is, widespread deployment of highly dexterous, reasoning humanoid robots raises concerns about job displacement and the ethics of autonomous agents. Real-world environments may still present unforeseen challenges, and the potential for misuse or unintended consequences of such general-purpose robots calls for careful consideration and robust safety protocols.
