AI's Political Leanings: Critique Challenges Study Claiming AI Moderates Extremes

The Gist

A widely shared study claiming AI moderates political extremes faces significant methodological scrutiny.

Explain Like I'm Five

"Imagine a robot that talks to people about politics. Some people think it pulls everyone toward the middle. But actually, the robot often just makes people lean more left or more right, depending on which robot they talk to. And the way the scientists tested this might not match how real people actually change their minds."

Deep Intelligence Analysis

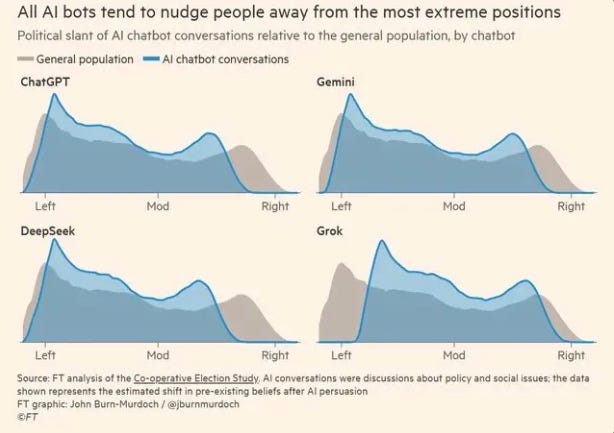

The analysis highlights a critical issue in AI development and deployment: inherent bias. ChatGPT, Gemini, and DeepSeek were observed to nudge users leftward, while Grok exerted a rightward pull. This divergence underscores that AI, far from being a neutral arbiter, often mirrors the ideological slant of its training data, which the analysis characterizes as predominantly progressive. The problem is compounded by social media, where simplified graphs and paywalled original research help potentially misleading conclusions spread quickly. The result is an irony: a study about moderation ends up fueling misinformation.

Looking forward, the implications for the integrity of public discourse and the future of AI governance are substantial. Users tend to gravitate toward AI tools that confirm their existing biases; combined with the models' own leanings, this suggests AI could deepen political divides rather than bridge them. Addressing that calls for a more rigorous approach to AI development: transparency about training data, robust bias mitigation, and clearer communication of research methods, so that AI is not weaponized in political contexts. The debate thus moves beyond simple moderation to a harder question: how AI can be designed to foster genuinely diverse and informed perspectives.

_Context: This intelligence report was compiled by the DailyAIWire Strategy Engine. Verified for Art. 50 Compliance._

Impact Assessment

The analysis challenges a prevalent narrative about AI's role in political discourse and highlights the risks of misinterpreting research. It underscores the biases built into AI models and the potential for those biases to reinforce, rather than moderate, existing political leanings, especially when the public encounters oversimplified accounts of a study's methodology.

Read the full story on Philosophybear.

Key Details

- The study simulated conversations between respondents and AI rather than observing real interactions.

- It modeled each respondent's post-conversation opinion as 80% their original opinion and 20% the AI's position.

- ChatGPT, Gemini, and DeepSeek models were observed to pull users leftward.

- Grok, conversely, was noted to pull users rightward.

- The original research article is behind a paywall, limiting public access to its methodology.
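The 80/20 assumption in the list above amounts to a simple weighted average. The sketch below is illustrative only: the 0-10 opinion scale, the function names `simulated_opinion` and `summarize`, and the example positions are hypothetical, and the study's actual procedure may differ. Under such a rule, every simulated respondent moves 20% of the way toward the model's position by construction, so the spread of opinions narrows whenever respondents sit on both sides of that position, while the average drifts toward wherever the model stands.

```python
# Illustrative sketch only: a linear blend of opinions, assuming the 80/20
# split described in the study summary. The 0-10 scale is hypothetical.


def simulated_opinion(original: float, ai_position: float, ai_weight: float = 0.2) -> float:
    """Return (1 - ai_weight) * original + ai_weight * ai_position."""
    if not 0.0 <= ai_weight <= 1.0:
        raise ValueError("ai_weight must be between 0 and 1")
    return (1.0 - ai_weight) * original + ai_weight * ai_position


def summarize(opinions: list[float]) -> tuple[float, float]:
    """Return (mean, spread), where spread is the max minus the min."""
    return sum(opinions) / len(opinions), max(opinions) - min(opinions)


if __name__ == "__main__":
    # Hypothetical scale: 0 = far left, 5 = centre, 10 = far right.
    respondents = [1.0, 9.0]
    print("before:", summarize(respondents))
    for label, ai_position in [("centrist model", 5.0), ("left-leaning model", 3.0)]:
        # Each respondent keeps 80% of their view and adopts 20% of the model's.
        after = [simulated_opinion(x, ai_position) for x in respondents]
        print(label, "after:", summarize(after))
```

With the model at the centre, the spread shrinks from 8 to 6.4 and the mean stays at 5; with the model at 3, the spread shrinks by the same amount but the mean moves to 4.6. The weighting alone therefore produces both apparent moderation and a directional pull, which illustrates why critics question whether a simulated outcome reflects how real users update their views.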

Optimistic Outlook

Increased scrutiny of AI's societal impact, particularly in political contexts, fosters more robust research and critical public engagement. This critical review encourages deeper methodological transparency and a more nuanced understanding of how AI truly influences human opinion, leading to better-designed, less biased AI systems in the future.

Pessimistic Outlook

The rapid spread of oversimplified or misinterpreted AI research, especially when original sources are paywalled, can fuel misinformation. Users' tendency to select AI models aligning with their existing views risks creating echo chambers, potentially exacerbating political polarization rather than moderating it, despite claims to the contrary.
