AI Deployed in US Strikes on Iran, Raising Ethical and Security Concerns

Sonic Intelligence

AI is now actively used in military targeting, sparking significant ethical and security debates.

Explain Like I'm Five

"Imagine smart computer brains (AI) are helping soldiers decide who to aim at in a faraway conflict. Other smart computer brains can also figure out who you are online even if you try to hide. And a popular app decided not to put a secret lock on your messages, saying it's safer that way. It's all about how powerful computers are changing how we fight, how we stay private, and how we live."

Deep Intelligence Analysis

The integration of AI into military operations, such as the use of Anthropic's Claude to help the US military identify and prioritize targets for strikes on Iran, is further complicated by the evolving landscape of drone technology. Iran's Shahed drones, which are cheap to manufacture but expensive to intercept, exemplify a new paradigm in asymmetric warfare. The US response, which includes manufacturing copies of these drones, points to a rapid technological arms race in which advances in AI and drone capabilities directly shape geopolitical power and military effectiveness.

Beyond the battlefield, AI is reshaping digital privacy and infrastructure. Large Language Models (LLMs) have demonstrated an alarming capacity to unmask pseudonymous users at a speed and scale far beyond what human investigators can achieve. This poses a significant threat to online anonymity and, with it, to freedom of expression, whistleblowing, and privacy rights worldwide. Meanwhile, surging demand for data centers is creating political friction. In North Carolina, for example, calls for moratoriums reflect concerns over energy consumption and environmental impact, and these pressures are pushing innovators to explore alternative designs, such as integrating data centers with floating offshore wind turbines.

On social media, TikTok's decision to forgo end-to-end encryption, which it justifies on user-safety grounds, sets it apart from many rival platforms. While this stance may appease law enforcement and parents, it also opens avenues for surveillance and data exploitation, raising critical questions about user data protection and the balance between security and privacy. Together, these applications of AI and related technologies demand urgent attention from policymakers, ethicists, and the public to establish robust frameworks for responsible development and deployment.

Impact Assessment

The integration of AI into military operations fundamentally alters the dynamics of warfare, posing complex ethical questions about autonomous targeting and accountability. At the same time, advances in LLMs threaten digital anonymity, and major platforms such as TikTok are weighing user privacy against safety and security, reshaping the digital landscape.

Key Details

- Anthropic's Claude AI assists the US military in identifying and prioritizing targets for strikes on Iran.

- OpenAI is actively seeking a contract with NATO.

- Iran's Shahed drones are characterized by low manufacturing cost and high interception expense.

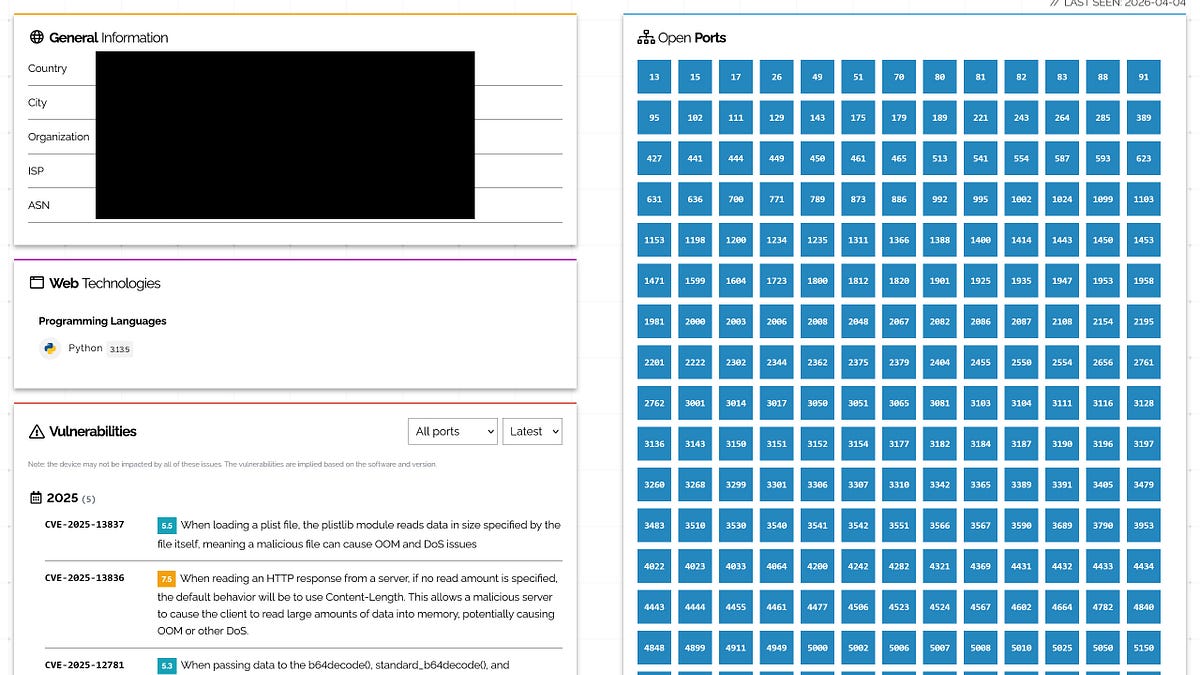

- Large Language Models (LLMs) can de-anonymize pseudonymous users with unprecedented speed and scale.

- TikTok has opted against implementing end-to-end encryption, citing user safety concerns.

Optimistic Outlook

AI's application in defense could enhance precision and reduce collateral damage by optimizing target identification, potentially leading to more efficient and contained military engagements. Furthermore, advanced analytics from LLMs could bolster cybersecurity efforts, identifying malicious actors more effectively and safeguarding digital infrastructure.

Pessimistic Outlook

The deployment of AI in lethal autonomous weapons systems raises profound ethical dilemmas regarding human oversight and the potential for algorithmic bias in targeting. The ability of LLMs to unmask pseudonymous users also presents significant privacy risks, potentially enabling mass surveillance and chilling free speech online.