NVIDIA Boosts RL Training Throughput with End-to-End FP8 Precision

Sonic Intelligence

NVIDIA enhances reinforcement learning training for LLMs using end-to-end FP8 precision.

Explain Like I'm Five

"Imagine teaching a super-smart computer (an LLM) to think and solve problems, not just write stories. This teaching process, called Reinforcement Learning, is like playing a game where the computer learns from its mistakes. NVIDIA found a way to make this learning much faster by using a special, smaller number format (FP8). This means the computer can learn more quickly and become even smarter, even if it's a bit trickier to make sure it's always perfectly accurate."

Deep Intelligence Analysis

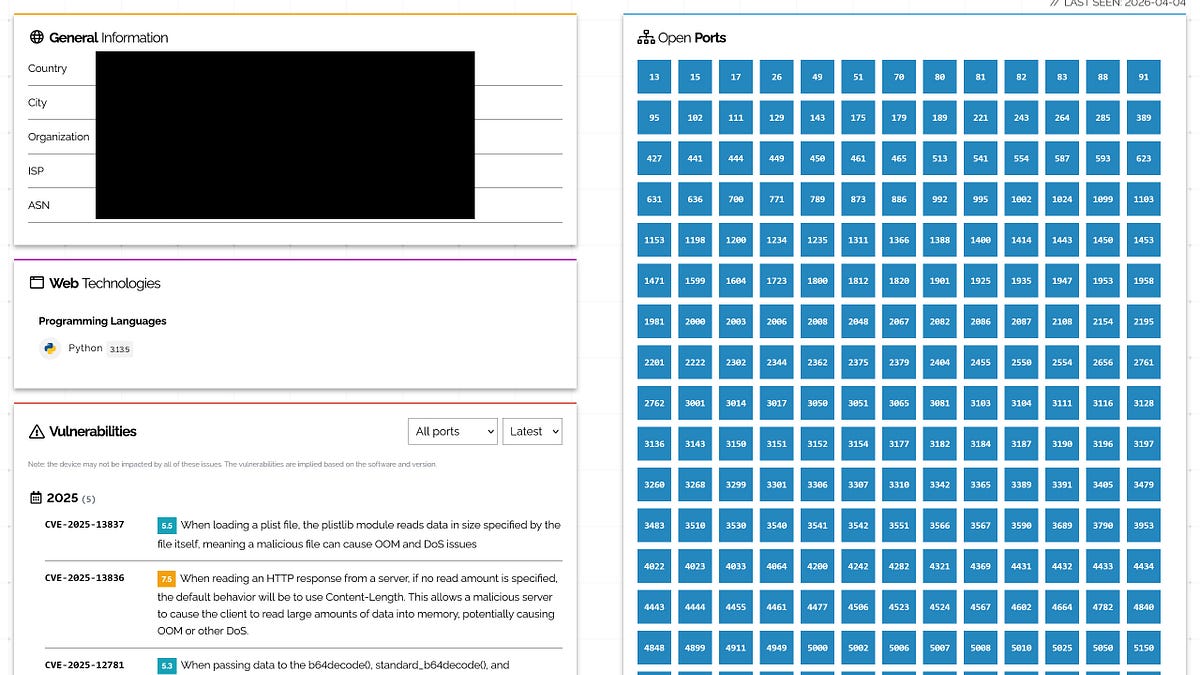

FP8 precision delivers up to 2x peak throughput compared to BF16 math for linear layers, which are foundational components in neural networks. Memory bandwidth pressure also drops, because each FP8 value occupies one byte instead of BF16's two. NVIDIA's NeMo RL, an open-source library, implements these optimizations and gives developers a framework for using FP8. A key challenge in low-precision RL is numerical disagreement between the separate engines used for rollouts (e.g., vLLM) and training (e.g., NVIDIA Megatron Core). The "final recipe" of end-to-end FP8, applied in both the generation and training engines, has been shown to consistently reduce this numerical divergence compared to using FP8 only during generation.
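To make the numerical disagreement concrete, here is a minimal sketch in plain Python that simulates rounding to the FP8 E4M3 format with a per-tensor scale. It is a toy, not NeMo RL, vLLM, or Megatron Core code: the helper names (`round_to_e4m3`, `fake_quantize`, `linear`), the sample values, and the use of Python floats as a stand-in for the higher-precision path are all assumptions made for illustration.

```python
import math

E4M3_MAX = 448.0            # largest finite value in FP8 E4M3
E4M3_MIN_NORMAL_EXP = -6    # exponent of the smallest normal value (2**-6)
MANTISSA_BITS = 3           # E4M3 stores 3 explicit mantissa bits


def round_to_e4m3(x: float) -> float:
    """Round x to the nearest E4M3-representable value, saturating at +/-448."""
    if x == 0.0:
        return 0.0
    sign = -1.0 if x < 0 else 1.0
    mag = min(abs(x), E4M3_MAX)
    _, e = math.frexp(mag)                      # mag = m * 2**e with 0.5 <= m < 1
    exp = max(e - 1, E4M3_MIN_NORMAL_EXP)       # clamp so tiny values use subnormal spacing
    step = 2.0 ** (exp - MANTISSA_BITS)         # gap between neighbouring FP8 values
    return sign * round(mag / step) * step      # round() is round-half-to-even


def fake_quantize(values):
    """Scale a tensor so its largest magnitude maps to 448, round, then scale back."""
    amax = max(abs(v) for v in values)
    scale = amax / E4M3_MAX if amax > 0 else 1.0
    return [round_to_e4m3(v / scale) * scale for v in values]


def linear(w, x):
    """A single-output linear layer: the dot product of weights and activations."""
    return sum(wi * xi for wi, xi in zip(w, x))


# Hypothetical weights and activations, chosen only for illustration.
weights = [0.31, -1.27, 0.058, 2.4]
acts = [1.9, 0.42, -3.3, 0.77]

ref_out = linear(weights, acts)                                  # higher-precision path
fp8_out = linear(fake_quantize(weights), fake_quantize(acts))    # FP8 path

# FP8 in generation only: the two engines see different numbers.
gap_mixed = abs(ref_out - fp8_out)
# FP8 in both engines: in this toy they share one code path, so the gap is zero.
gap_e2e = abs(fp8_out - linear(fake_quantize(weights), fake_quantize(acts)))

print(f"generation-only FP8 gap: {gap_mixed:.4f}")
print(f"end-to-end FP8 gap:      {gap_e2e:.4f}")
```

In this toy the end-to-end gap vanishes completely because both "engines" run identical code. Real generation and training engines use different kernels and scaling choices, which is why the source describes a reduction in divergence rather than its elimination.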

Faster and more memory-efficient RL training should shorten the development cycle for increasingly sophisticated LLMs, enabling them to tackle more complex, real-world problems that require deep reasoning. This could unlock new applications in areas like autonomous decision-making, advanced scientific discovery, and highly nuanced human-AI interaction. The ongoing challenge is to balance the performance gains of low-precision training against the need for numerical stability and accuracy, especially as models are deployed in safety-critical or high-stakes environments. Continued refinement of techniques like importance sampling, which reweights training samples to correct for the mismatch between the policy that generated them and the policy being trained, will be vital in mitigating these trade-offs.
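The importance sampling mentioned above can be sketched as a per-token reweighting. The example below uses one common form, a ratio truncated at a cap. The source does not say which variant NeMo RL applies, so the function name `importance_weights`, the `cap` value, and the sample log-probabilities are all hypothetical.

```python
import math


def importance_weights(train_logprobs, gen_logprobs, cap=2.0):
    """Per-token ratio pi_train(token) / pi_gen(token), truncated at `cap`.

    train_logprobs: log-probabilities of the sampled tokens under the training engine.
    gen_logprobs:   log-probabilities of the same tokens under the generation engine.
    """
    return [min(math.exp(t - g), cap) for t, g in zip(train_logprobs, gen_logprobs)]


# Hypothetical log-probabilities for three sampled tokens.
gen_logprobs = [-1.00, -0.50, -4.00]
train_logprobs = [-1.00, -0.70, -2.00]

weights = importance_weights(train_logprobs, gen_logprobs)
for w in weights:
    print(f"{w:.3f}")
```

A weight of 1 means the two engines agree on that token. Weights below 1 shrink the contribution of tokens the generation engine over-sampled, and the cap keeps a single badly mismatched token from dominating the update.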

Visual Intelligence

flowchart LR
    A["LLM Reasoning"] --> B["Reinforcement Learning"]
    B --> C["Generation Phase"]
    B --> D["Training Phase"]
    C & D --> E["Low Precision FP8"]
    E --> F["2x Throughput"]
    E --> G["Reduce Disagreement"]
    G --> H["NVIDIA NeMo RL"]

Auto-generated diagram · AI-interpreted flow

Impact Assessment

As LLMs evolve towards sophisticated reasoning, efficient reinforcement learning (RL) becomes critical. NVIDIA's adoption of FP8 precision across the entire RL pipeline significantly boosts training throughput and memory efficiency, accelerating the development of more capable and complex AI models.

Key Details

- Reinforcement learning (RL) is central to LLMs transitioning from text generation to complex reasoning.

- RL training involves a latency-sensitive generation phase and a throughput-bound training phase.

- FP8 precision offers up to 2x peak throughput compared to BF16 for linear layers.

- NVIDIA NeMo RL is an open-source library for speeding up RL workloads.

- End-to-end FP8 (both generation and training) reduces numerical disagreement compared to FP8 only in generation.
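The memory side of these points comes down to simple arithmetic, sketched below. The 8-billion-parameter model size is a hypothetical example, and the figures cover weights only: FP8 scaling factors, optimizer state, activations, and KV cache are left out.

```python
BYTES_PER_VALUE = {"bf16": 2, "fp8": 1}   # storage size of one value in each format


def weight_bytes(num_params: int, dtype: str) -> int:
    """Bytes needed to store the weights alone in the given format."""
    return num_params * BYTES_PER_VALUE[dtype]


params = 8_000_000_000   # hypothetical 8B-parameter model
for dtype in ("bf16", "fp8"):
    print(f"{dtype}: {weight_bytes(params, dtype) / 1e9:.0f} GB")
```

Halving the bytes per value halves the data each linear layer has to move from memory, which is where the bandwidth benefit comes from.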

Optimistic Outlook

This advancement will enable faster iteration and training of reasoning-grade LLMs, leading to more intelligent and adaptable AI agents. The performance gains and memory savings could democratize access to advanced RL training, fostering innovation across various AI applications and pushing the boundaries of what LLMs can achieve.

Pessimistic Outlook

While FP8 precision offers significant speedups, the inherent numerical differences introduced by lower precision, even when mitigated, could pose challenges for maintaining absolute accuracy in highly sensitive applications. The reliance on NVIDIA's specific frameworks and hardware might also limit broader adoption or create dependencies for developers.
