Anthropic's Claude Cowork Now Supports Third-Party LLMs, Including Local and Open-Weight Models

Sonic Intelligence

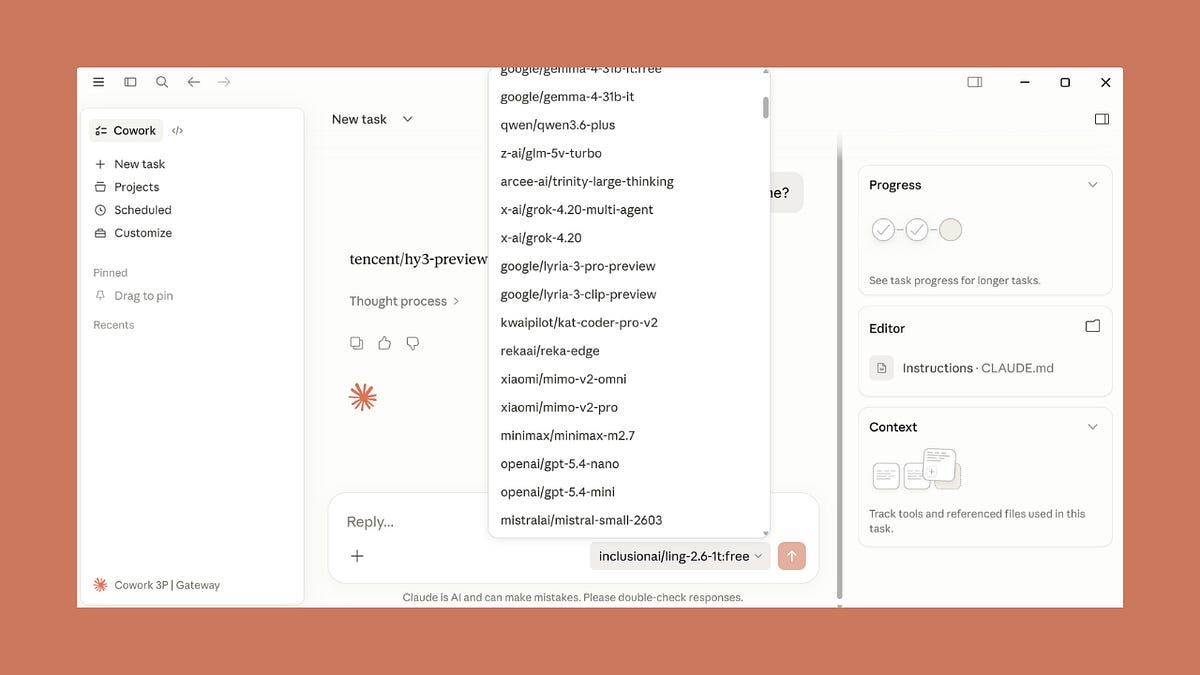

Anthropic's Claude Cowork now quietly supports various third-party and local LLMs.

Explain Like I'm Five

"Imagine you have a super smart robot helper named Claude. Before, Claude could only use his own brain. Now, someone secretly gave Claude a new trick: he can borrow brains from other smart robots like OpenAI or even brains you have on your own computer! This means Claude can do more things and work with your favorite smart robot brain."

Deep Intelligence Analysis

The implementation supports integration through enterprise gateways such as Amazon Bedrock, Google Cloud Vertex AI, and Azure AI Foundry, alongside direct connections to services like OpenRouter. This compatibility addresses enterprise needs, particularly for regulated industries or organizations with strict data residency and security requirements: they can use Claude's agentic capabilities while staying within their existing cloud provider and compliance boundaries. The inclusion of full admin controls, such as per-user token caps and OpenTelemetry integration, suggests the feature is aimed at enterprise use, even though it is labeled a "research preview."
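To make the idea of a per-user token cap concrete, here is a minimal sketch. It assumes a hypothetical in-memory tracker: the names `TokenCapTracker`, `cap`, and `try_consume` are illustrative and are not taken from Anthropic's admin controls, whose actual interface the source does not describe.

```python
from collections import defaultdict


class TokenCapTracker:
    """Hypothetical per-user token budget; not Anthropic's actual admin control."""

    def __init__(self, cap: int) -> None:
        if cap < 0:
            raise ValueError("cap must be non-negative")
        self.cap = cap
        # Tokens consumed so far, keyed by user; unseen users start at 0.
        self.used = defaultdict(int)

    def remaining(self, user: str) -> int:
        """Tokens the user may still spend before hitting the cap."""
        return self.cap - self.used[user]

    def try_consume(self, user: str, tokens: int) -> bool:
        """Record usage and return True only if the request fits under the cap."""
        if tokens < 0:
            raise ValueError("tokens must be non-negative")
        if self.used[user] + tokens > self.cap:
            return False  # Reject without recording anything.
        self.used[user] += tokens
        return True
```

A real deployment would presumably persist usage and reset it on a schedule; this sketch only shows the accept-or-reject decision.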

The implications are significant for the broader AI ecosystem. By enabling interoperability, Anthropic is implicitly acknowledging the growing trend towards specialized models and the need for platforms that can orchestrate them effectively. This could accelerate the adoption of agentic AI systems by reducing the friction associated with model selection and deployment. It also intensifies competition among foundation model providers, as the value proposition shifts from raw model capability to the robustness and flexibility of the surrounding tooling and orchestration layers. This development could catalyze a future where AI platforms are judged less on their proprietary models and more on their ability to seamlessly integrate and manage a diverse portfolio of AI capabilities.

Visual Intelligence

flowchart LR

A["Claude Cowork/Code"] --> B["Third-Party Inference Config"]

B --> C{"LLM Source?"}

C -- "OpenRouter" --> D["OpenRouter API"]

C -- "Enterprise Gateway" --> E["Bedrock/Vertex/Foundry"]

C -- "Local Model" --> F["OpenAI-Compatible Proxy"]

D --> G["Any LLM"]

E --> G

F --> G

Auto-generated diagram · AI-interpreted flow
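The flow in the diagram can be read as a simple dispatch on the LLM source. The sketch below assumes hypothetical names (`LLMSource`, `resolve_backend`) that mirror the diagram's labels; it does not reflect any real Claude Cowork configuration format, and the returned strings are descriptive labels rather than endpoints.

```python
from enum import Enum


class LLMSource(Enum):
    """Hypothetical source identifiers matching the diagram's three branches."""

    OPENROUTER = "openrouter"
    ENTERPRISE_GATEWAY = "enterprise_gateway"
    LOCAL_MODEL = "local_model"


# Labels copied from the diagram's nodes; they are not URLs or API names.
_BACKENDS = {
    LLMSource.OPENROUTER: "OpenRouter API",
    LLMSource.ENTERPRISE_GATEWAY: "Bedrock/Vertex/Foundry",
    LLMSource.LOCAL_MODEL: "OpenAI-Compatible Proxy",
}


def resolve_backend(source: str) -> str:
    """Map a configured source string to the backend label it routes through."""
    try:
        return _BACKENDS[LLMSource(source)]
    except ValueError:
        # Enum lookup failed: the source is not one of the three branches.
        raise ValueError(f"unknown LLM source: {source!r}") from None
```

For example, `resolve_backend("local_model")` returns the label of the proxy branch, while an unrecognized source raises `ValueError` instead of silently falling back to a default.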

Impact Assessment

This quiet release by Anthropic significantly expands the utility and flexibility of Claude Cowork, allowing users and enterprises to leverage its agentic capabilities with their preferred or compliant LLMs. It signals a move towards model-agnostic AI orchestration platforms, reducing vendor lock-in and increasing customization.

Key Details

- Claude Cowork and Code in Claude Desktop can now run against OpenAI models, Gemma, Kimi K2, and local models.

- Integration is possible via OpenRouter or enterprise gateways (Amazon Bedrock, Google Cloud Vertex AI, Azure AI Foundry).

- Anthropic released this capability without an official announcement or blog post.

- All admin controls (token caps, MCP allowlist, OpenTelemetry) are available across individual and enterprise paths.

- The feature is described as a 'research preview'.

Optimistic Outlook

This development empowers users with unprecedented choice and control over their AI deployments, fostering innovation by allowing experimentation with diverse models. Enterprises can integrate Claude's agentic framework within existing compliance boundaries, accelerating adoption and custom AI solution development.

Pessimistic Outlook

The lack of an official announcement might indicate a cautious approach, potentially due to unresolved issues or a desire to manage expectations for a 'research preview.' Relying on integrations such as OpenRouter that have not been formally endorsed could pose long-term support or security challenges, even if they work today.
