CTX Introduces Cognitive Version Control for AI Agent Continuity and Explainability

Sonic Intelligence

The Gist

CTX provides persistent cognitive memory for AI agents, ensuring continuity and explainability.

Explain Like I'm Five

"Imagine an AI robot that helps you with a big project. Normally, if you stop talking to it, it forgets everything you just discussed and has to start from scratch next time. CTX is like giving that robot a special notebook where it writes down all its thoughts, plans, and decisions, step-by-step. So, when you come back, it can just open its notebook and continue exactly where it left off, and even tell you why it did what it did!"

Deep Intelligence Analysis

The core problem CTX addresses is the inherent context loss in current AI agent designs. Without a structured memory, agents repeatedly re-investigate prior work, re-read documents, and re-infer decisions, leading to inefficiencies and potential contradictions. CTX mitigates this by allowing agents to "commit" cognitive progress, enabling them to resume work seamlessly from the last useful state. This capability is not merely about memory recall; it's about reconstructing the entire cognitive lineage of an idea, including the evidence considered and decisions made. This structured preservation also holds significant value for future model training, offering rich, step-by-step reasoning data that can improve the robustness and logical consistency of subsequent AI iterations.
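The commit-and-resume idea described above can be sketched as an append-only log in which every entry points at its parent. This is a minimal illustration only: `CognitiveCommit`, `CognitiveLog`, and their fields are hypothetical names chosen for this sketch and are not taken from CTX's actual API or storage format.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class CognitiveCommit:
    """One saved step of reasoning (hypothetical structure, not CTX's schema)."""
    parent: Optional[str]   # id of the previous commit, None for the first one
    kind: str               # e.g. "hypothesis", "evidence", "decision"
    content: str            # what the agent recorded at this step

    @property
    def commit_id(self) -> str:
        # Content-addressed id: same parent, kind and content give the same id.
        payload = json.dumps([self.parent, self.kind, self.content])
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:12]


class CognitiveLog:
    """Append-only store of commits with a head pointer to the latest one."""

    def __init__(self) -> None:
        self.commits: dict[str, CognitiveCommit] = {}
        self.head: Optional[str] = None

    def commit(self, kind: str, content: str) -> str:
        # Link the new entry to the current head, then advance the head.
        entry = CognitiveCommit(parent=self.head, kind=kind, content=content)
        self.commits[entry.commit_id] = entry
        self.head = entry.commit_id
        return entry.commit_id

    def lineage(self, commit_id: Optional[str] = None) -> list[CognitiveCommit]:
        """Walk parent links back to the root and return the chain oldest first."""
        chain = []
        current = commit_id if commit_id is not None else self.head
        while current is not None:
            entry = self.commits[current]
            chain.append(entry)
            current = entry.parent
        return list(reversed(chain))


log = CognitiveLog()
log.commit("hypothesis", "The timeout comes from the retry loop")
log.commit("evidence", "Logs show three retries before each failure")
decision_id = log.commit("decision", "Cap retries at two and add backoff")

# A later session can rebuild the full chain of reasoning from the head.
resumed = log.lineage()
```

Under these assumptions, resuming work amounts to reading `log.head`, and reconstructing the lineage of an idea amounts to following parent links, much as a version control system follows commit ancestry.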

For AI agent development, the main implication is that agents can be built to be more robust, auditable, and collaborative. CTX's utility extends beyond coding workflows to cognitive planning, research, and operational continuity. Because agents can explain their rationale from preserved decisions and evidence, the system improves transparency, which matters for regulatory compliance and user trust. A persistent cognitive layer of this kind could serve as a foundation for scaling autonomous AI: it supports more sophisticated task decomposition, error recovery, and human-AI teaming, and could speed the deployment of agents into mission-critical roles where continuity and explainability are required.
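To make the explainability point concrete, here is a small sketch of how a rationale could be assembled purely from stored records. The `Evidence` and `Decision` classes and the `explain` function are assumptions made for illustration; the source does not describe how CTX links decisions to evidence.

```python
from dataclasses import dataclass, field


@dataclass
class Evidence:
    evidence_id: str
    summary: str


@dataclass
class Decision:
    decision_id: str
    statement: str
    # Ids of the evidence records this decision relied on (hypothetical field).
    evidence_ids: list[str] = field(default_factory=list)


def explain(decision: Decision, evidence_store: dict[str, Evidence]) -> str:
    """Build a plain-text rationale from the stored records only."""
    lines = [f"Decision {decision.decision_id}: {decision.statement}"]
    for evidence_id in decision.evidence_ids:
        item = evidence_store.get(evidence_id)
        if item is None:
            # Surface the gap instead of inventing a justification.
            lines.append(f"  - {evidence_id}: (evidence record missing)")
        else:
            lines.append(f"  - {evidence_id}: {item.summary}")
    return "\n".join(lines)


evidence_store = {
    "ev-1": Evidence("ev-1", "Benchmark shows cache hit rate below 10%"),
}
decision = Decision("dec-1", "Remove the cache layer", ["ev-1", "ev-2"])
report = explain(decision, evidence_store)
```

The design choice worth noting is that the explanation is read back from what was recorded at decision time rather than regenerated afterwards, so a missing evidence record shows up as a visible gap.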

Transparency: This analysis was generated by an AI model (Gemini 2.5 Flash) based on the provided source material. No external information was used.

Visual Intelligence

flowchart LR

A["Agent Task Start"] --> B{"Need Context?"}

B -- "No" --> C["Continue Work"]

B -- "Yes (No CTX)" --> D["Re-search Context"]

D --> E["Re-infer Decisions"]

E --> F["Restart Progress"]

B -- "Yes (With CTX)" --> G["Read Cognitive Line"]

G --> H["Resume from Last State"]

H --> C

C --> I["Agent Task End"]

Auto-generated diagram · AI-interpreted flow
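The branches of the diagram can also be read as a short function. This is a sketch under stated assumptions: `run_task` and its parameters are hypothetical, and the step that continues work after "Restart Progress" is an assumption, since the diagram does not draw that edge.

```python
from typing import Optional


def run_task(needs_context: bool, saved_state: Optional[list[str]]) -> list[str]:
    """Return the steps an agent takes, following the branches of the diagram."""
    steps = ["task start"]
    if needs_context:
        if saved_state:
            # With a saved cognitive line: read it and resume from the last entry.
            steps.append("read cognitive line")
            steps.append(f"resume from: {saved_state[-1]}")
        else:
            # Without one (or with an empty one): rebuild everything from scratch.
            steps += ["re-search context", "re-infer decisions", "restart progress"]
    # Assumption: work continues after either branch.
    steps.append("continue work")
    steps.append("task end")
    return steps


with_ctx = run_task(True, ["decided to cap retries at two"])
without_ctx = run_task(True, None)
no_context_needed = run_task(False, None)
```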

Impact Assessment

The introduction of a robust cognitive memory layer like CTX addresses a fundamental limitation of current AI agents: their lack of persistent, structured working memory. This innovation is crucial for developing truly autonomous and reliable agents, enabling them to maintain continuity, explain decisions, and learn more effectively over extended operations, moving beyond ephemeral chat-based interactions.

Read Full Story on GitHub

Key Details

- CTX functions as a Cognitive Version Control System for AI agents.

- It creates a persistent cognitive layer that stores structured reasoning artifacts.

- It preserves goals, tasks, hypotheses, evidence, decisions, and conclusions as durable state.

- It enables agents to resume work with continuity, avoiding repeated reconstruction of prior reasoning.

- It facilitates tracking and explaining AI decisions step by step.
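The artifact types listed above could be modeled as typed records that are written to disk and reloaded in a later session. The following is a minimal sketch: the `ArtifactKind` values mirror the list, while the `Artifact` class, the JSON Lines file layout, and the `save` and `load` helpers are assumptions for illustration, not CTX's storage format.

```python
import json
import tempfile
from dataclasses import dataclass
from enum import Enum
from pathlib import Path


class ArtifactKind(str, Enum):
    GOAL = "goal"
    TASK = "task"
    HYPOTHESIS = "hypothesis"
    EVIDENCE = "evidence"
    DECISION = "decision"
    CONCLUSION = "conclusion"


@dataclass
class Artifact:
    kind: ArtifactKind
    text: str


def save(artifacts: list[Artifact], path: Path) -> None:
    """Write one JSON object per line (JSON Lines)."""
    with path.open("w", encoding="utf-8") as handle:
        for artifact in artifacts:
            record = {"kind": artifact.kind.value, "text": artifact.text}
            handle.write(json.dumps(record) + "\n")


def load(path: Path) -> list[Artifact]:
    """Read the file back into typed records, rejecting unknown kinds."""
    artifacts = []
    with path.open("r", encoding="utf-8") as handle:
        for line in handle:
            record = json.loads(line)
            artifacts.append(Artifact(ArtifactKind(record["kind"]), record["text"]))
    return artifacts


session = [
    Artifact(ArtifactKind.GOAL, "Reduce checkout latency"),
    Artifact(ArtifactKind.HYPOTHESIS, "The tax service call is the bottleneck"),
    Artifact(ArtifactKind.DECISION, "Cache tax rates per region"),
]

with tempfile.TemporaryDirectory() as tmp:
    state_file = Path(tmp) / "state.jsonl"
    save(session, state_file)
    restored = load(state_file)  # what a later session would start from
```

Keeping the kinds in an enum means a malformed or unknown artifact type fails loudly on load rather than silently entering the agent's working state.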

Optimistic Outlook

CTX could unlock a new generation of highly capable and reliable AI agents that can tackle complex, long-running tasks without losing context or contradicting prior work. This enhanced continuity and explainability will accelerate AI development, improve debugging, and build greater trust in autonomous systems across various industries.

Pessimistic Outlook

While promising, the overhead of managing and versioning cognitive states might introduce computational complexity or new failure modes if not implemented robustly. Over-reliance on such systems could also obscure the underlying LLM's reasoning process, creating a "black box" at a higher cognitive layer, potentially complicating auditing and human oversight.


Generated Related Signals

Agentic AI Security: Sandboxes and Worktrees for 2026 Code Generation

A developer outlines a secure, efficient setup for agentic AI code generation.

Operational Readiness Criteria for Tool-Using LLM Agents Unveiled

A new framework defines operational readiness for tool-using LLM agents.

Claude Code Architecture Reveals Agentic AI Design Principles

Claude Code's architecture offers deep insights into AI agent design.

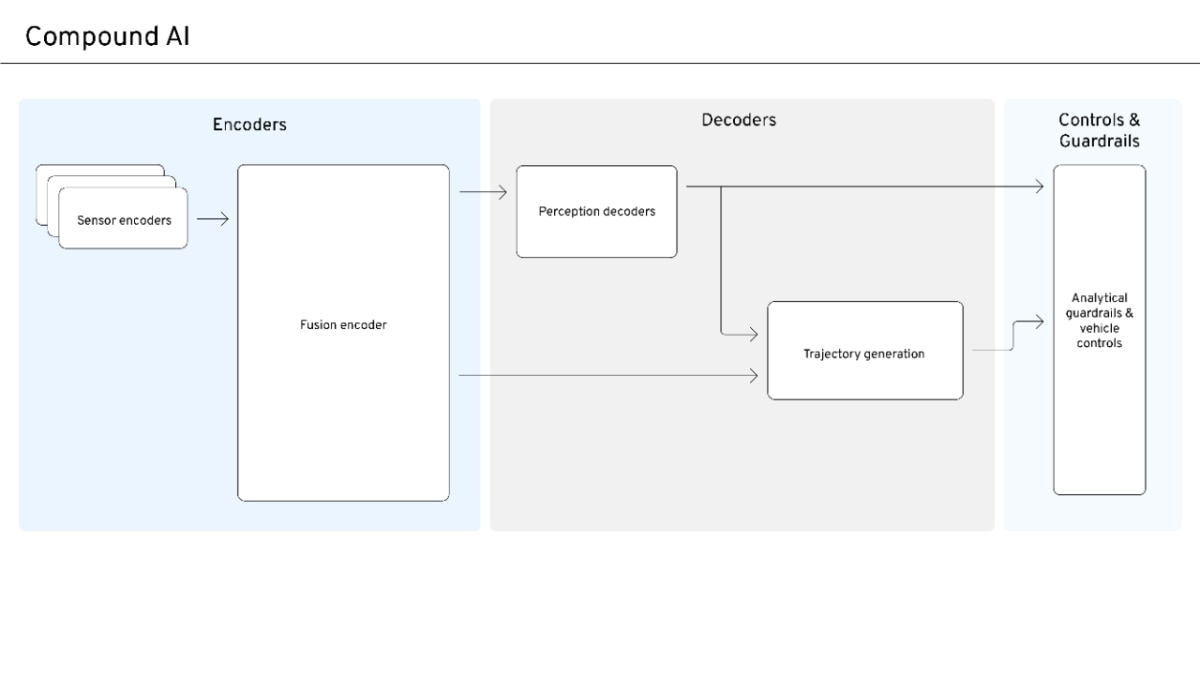

Compound AI Emerges for Safe, Scalable Autonomous Systems

Compound AI balances data-driven scale with safety and interpretability for autonomous systems.

AI Disruption Narrative Shifts from Universal Win to Competitive Reality

Early AI market optimism is yielding to a more nuanced, competitive reality.

NVIDIA's TensorRT LLM Accelerates AI Inference with Specialized Optimizations

TensorRT LLM optimizes LLM and visual generation model inference.