KarnEvil9 Unveils Deterministic AI Agent Runtime Based on Google DeepMind Framework

Sonic Intelligence

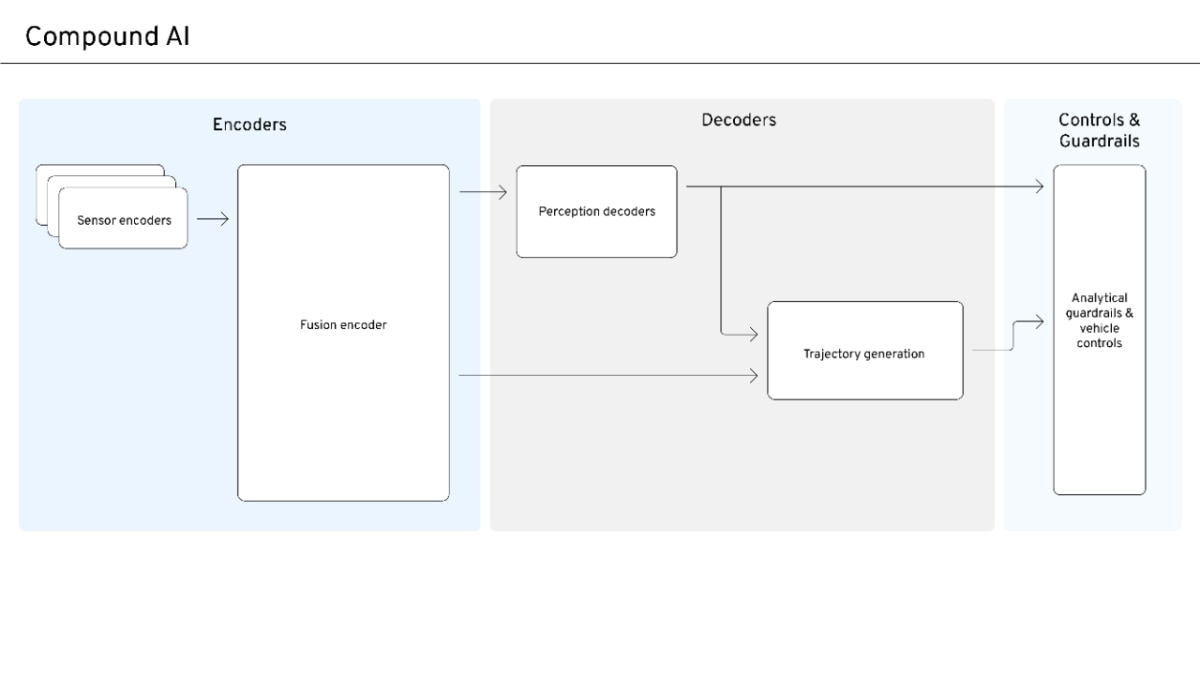

KarnEvil9 is an open-source, deterministic AI agent runtime implementing Google DeepMind's delegation framework.

Explain Like I'm Five

"Imagine you have a super smart robot that needs to do many jobs, and you want to make sure it always does them exactly right and safely. KarnEvil9 is like a special rulebook and diary for the robot that makes sure every step is planned, checked, and recorded, so you can always see what happened and why."

Deep Intelligence Analysis

Impact Assessment

KarnEvil9 addresses AI agent accountability and safety by providing a deterministic, auditable runtime: every step is planned, checked, and recorded. Because it directly implements a published delegation framework from Google DeepMind, it offers a concrete foundation for building multi-agent systems in high-stakes applications where transparency and control are paramount.

Key Details

- First public implementation of Google DeepMind's Intelligent AI Delegation framework (Tomasev et al., 2026).

- Converts natural-language tasks into structured execution plans, implemented in TypeScript.

- Features a tamper-evident SHA-256 hash-chain journal for all events.

- Incorporates nine safety mechanisms for multi-agent delegation, including escrow bonds and outcome verification.

- Requires Node.js >= 20 and pnpm >= 9.15 for operation.
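
To make the hash-chain journal idea concrete, here is a minimal sketch of how a tamper-evident SHA-256 chain can work in general. It is not KarnEvil9's actual code: the `JournalEntry` shape, the function names `appendEvent` and `verifyJournal`, the all-zero genesis hash, and the event strings are all assumptions made for illustration. Only `createHash` from Node.js's built-in `node:crypto` module is a real API.

```typescript
import { createHash } from "node:crypto";

// Hypothetical entry shape; the article does not describe KarnEvil9's real schema.
interface JournalEntry {
  seq: number;      // position in the journal
  event: string;    // serialized event payload
  prevHash: string; // hash of the previous entry (or the genesis value)
  hash: string;     // SHA-256 over seq, event, and prevHash
}

// Assumed placeholder used as the "previous hash" of the first entry.
const GENESIS_HASH = "0".repeat(64);

// Hash the fields as a JSON array so field boundaries are unambiguous.
function hashEntry(seq: number, event: string, prevHash: string): string {
  return createHash("sha256")
    .update(JSON.stringify([seq, event, prevHash]))
    .digest("hex");
}

// Return a new journal with one entry appended and linked to its predecessor.
function appendEvent(journal: JournalEntry[], event: string): JournalEntry[] {
  const last = journal[journal.length - 1];
  const prevHash = last ? last.hash : GENESIS_HASH;
  const seq = journal.length;
  const entry: JournalEntry = { seq, event, prevHash, hash: hashEntry(seq, event, prevHash) };
  return [...journal, entry];
}

// Recompute every hash and check every link; any edit to an entry breaks the chain.
function verifyJournal(journal: JournalEntry[]): boolean {
  let expectedPrev = GENESIS_HASH;
  for (let i = 0; i < journal.length; i++) {
    const entry = journal[i];
    if (!entry) return false;
    if (entry.seq !== i || entry.prevHash !== expectedPrev) return false;
    if (entry.hash !== hashEntry(entry.seq, entry.event, entry.prevHash)) return false;
    expectedPrev = entry.hash;
  }
  return true;
}
```

In this sketch, altering, reordering, or removing any entry other than the last causes `verifyJournal` to return `false`, because each entry's hash depends on the one before it. Note that "tamper-evident" is weaker than "tamper-proof": someone who can rewrite the entire journal can also recompute every hash, so a real deployment would typically anchor or sign the latest hash somewhere outside the journal.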

Optimistic Outlook

This runtime could significantly advance the development of trustworthy AI agents, enabling complex, multi-step tasks with built-in safety and auditability. Its domain-agnostic governance model promises broad applicability across industries and could accelerate the adoption of autonomous AI systems.

Pessimistic Outlook

The complexity of managing nine safety mechanisms and ensuring their effective configuration might pose a barrier to entry for developers. The initial "cognitive friction" observed in the Zork experiment suggests that fine-tuning governance for practical, real-world scenarios will be a significant challenge.
