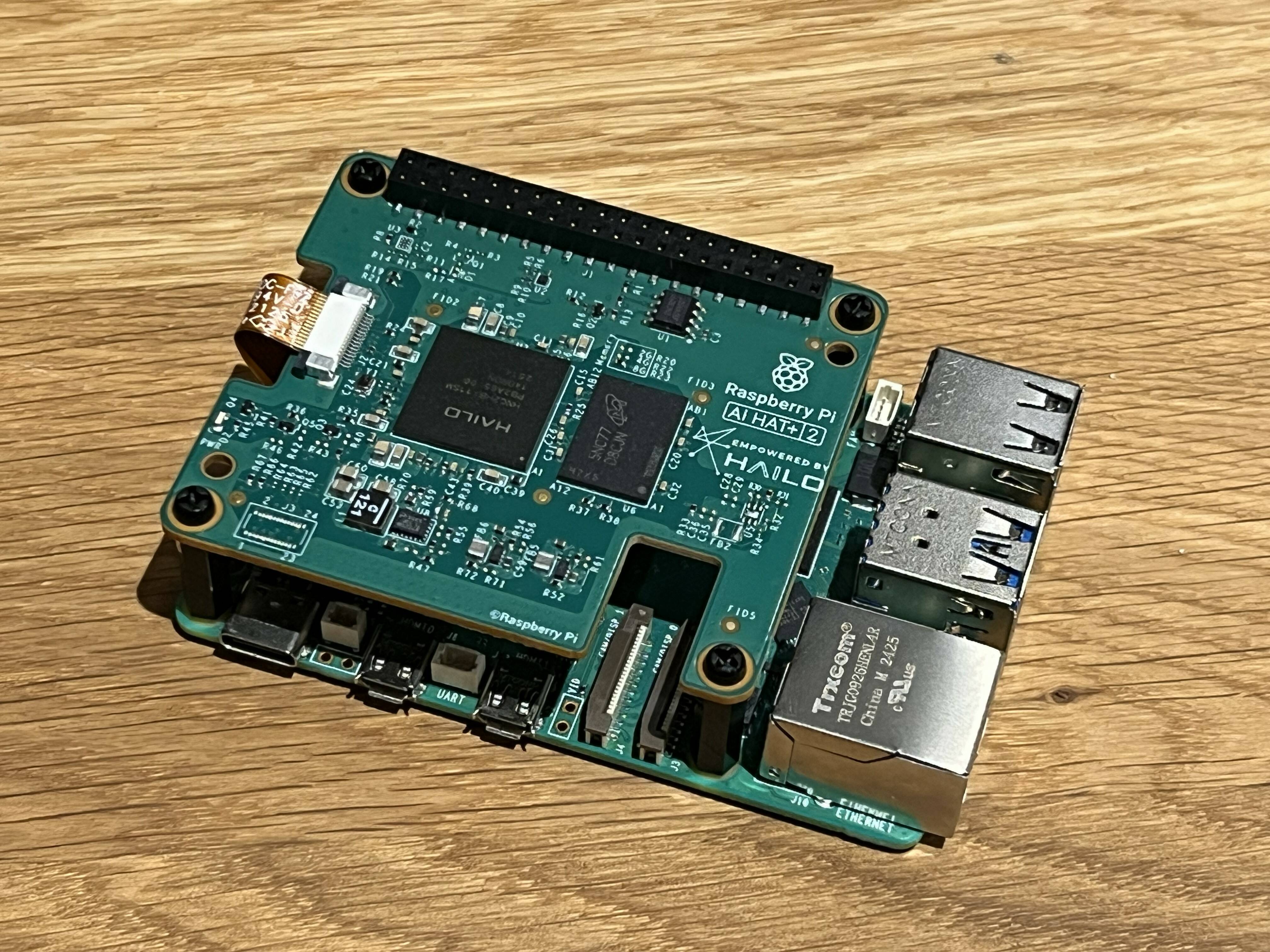

Local AI Coding Agents Emerge as Cost-Saving Alternative to Cloud LLMs

Sonic Intelligence

Local AI coding agents offer a cost-effective alternative to expensive cloud LLMs.

Explain Like I'm Five

"Imagine you have a super smart helper for writing computer code. Usually, you have to pay a lot of money every time you ask it a question because it lives on the internet. But now, there are new, smaller helpers you can put right on your own computer, and once you have them, they're free to use! This is great for saving money, even if they're a little slower."

Deep Intelligence Analysis

The shift toward local AI coding agents is driven by both cost pressure and technical progress. Recent improvements in model architectures, such as mixture-of-experts, and more sophisticated agent harnesses have made smaller, local models markedly more capable. These advances include better 'reasoning', which lets a model compensate for its smaller size by spending more time processing a request, and much-improved function and tool calling. Together they let local LLMs interact effectively with codebases, shell environments, and the web, making them viable for tasks like code completion and generation that were previously dominated by larger frontier models.
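To make 'tool calling' concrete, here is a minimal sketch of the harness side of the loop: the model emits a structured request, and the harness runs the matching function and hands the result back. The JSON shape (`name` plus `arguments`), the tool names, and the `dispatch` helper are all assumptions made for illustration; they are not the format of any particular model or inference engine.

```python
import json
from pathlib import Path


def read_file(path: str) -> str:
    """Return the text content of a file in the codebase."""
    return Path(path).read_text(encoding="utf-8")


def list_dir(path: str) -> list[str]:
    """Return the sorted entry names inside a directory."""
    return sorted(p.name for p in Path(path).iterdir())


# Hypothetical tool registry: the only functions the model may invoke.
TOOLS = {"read_file": read_file, "list_dir": list_dir}


def dispatch(tool_call_json: str) -> str:
    """Run one model-requested tool call and return a JSON result string.

    Assumed request shape: {"name": "<tool>", "arguments": {...}}.
    Errors are returned to the model as data instead of being raised,
    so the agent loop can continue and the model can correct itself.
    """
    try:
        call = json.loads(tool_call_json)
        func = TOOLS[call["name"]]
        result = func(**call["arguments"])
        return json.dumps({"ok": True, "result": result})
    except Exception as exc:
        return json.dumps({"ok": False, "error": f"{type(exc).__name__}: {exc}"})
```

In a real agent this function would sit inside a loop that feeds each result back to the model until it stops requesting tools. Restricting the registry to an explicit allow-list is one simple way to limit what a model can do on the local machine.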

The implications reach beyond cost. Developers can now 'roll their own' AI coding assistants, bypassing token limits and high subscription fees. This gives them greater autonomy, reduces dependency on specific vendors, and can improve privacy by keeping sensitive code on the local machine. Local models may still be slower or less capable than their cloud counterparts on highly complex tasks, but their 'free' operational cost (once the hardware is paid for) is a compelling value proposition. The trend points toward a hybrid approach becoming standard, with local agents handling routine tasks and cloud services reserved for specialized, high-demand applications. The ecosystem of inference engines such as llama.cpp, LM Studio, Ollama, and MLX will continue to evolve, making local AI deployment increasingly accessible.
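The 'free after hardware' argument comes down to a break-even calculation, sketched below. Every figure in the usage note is a made-up placeholder, and the `monthly_local_running_cost` parameter (for example, electricity) is an assumption added here; it does not come from the source.

```python
import math
from typing import Optional


def break_even_months(
    hardware_cost: float,
    monthly_cloud_spend: float,
    monthly_local_running_cost: float = 0.0,
) -> Optional[int]:
    """Whole months until local hardware has paid for itself.

    Returns None when running locally saves nothing per month,
    in which case the hardware cost is never recovered.
    """
    monthly_saving = monthly_cloud_spend - monthly_local_running_cost
    if monthly_saving <= 0:
        return None
    # Round up: a partially elapsed month has not yet covered the cost.
    return math.ceil(hardware_cost / monthly_saving)
```

With hypothetical inputs of 2,000 for hardware, 100 per month of cloud spend, and 10 per month of local running cost, `break_even_months(2000, 100, 10)` returns 23. The sketch ignores differences in speed and capability, which the analysis above notes still favor cloud models for complex tasks.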

Visual Intelligence

flowchart LR
    A["High Cloud LLM Costs"] --> B["Developer Demand for Local AI"]
    C["New Efficient Local LLMs"] --> D["Improved Agent Frameworks"]
    B --> E["Local AI Coding Agents"]
    D --> E
    E --> F["Cost Savings"]
    E --> G["Increased Autonomy"]

Auto-generated diagram · AI-interpreted flow

Impact Assessment

The shift to usage-based pricing for major cloud-based AI coding assistants is increasing costs for developers, driving demand for local, open-source alternatives. This trend democratizes access to advanced AI coding capabilities, reducing reliance on expensive proprietary services and fostering innovation in local AI deployment.

Key Details

- Anthropic is considering dropping Claude Code from affordable plans.

- Microsoft moved GitHub Copilot to a purely usage-based pricing model.

- Alibaba released Qwen3.6-27B, a model that can run on a 32 GB M-series Mac or a 24 GB GPU.

- llama.cpp, LM Studio, Ollama, and MLX are inference engines for running LLMs locally.

- Older M-series Macs may struggle with large context lengths for agentic coding.
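A back-of-the-envelope estimate shows why a 27-billion-parameter model can fit on hardware of that size. The sketch below counts weight storage only, at a nominal number of bits per weight. The quantization levels are assumptions for illustration, since the source does not say which precision is used, and real quantized formats typically need somewhat more than the nominal figure.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB (1 GB = 10**9 bytes).

    Counts weights only: KV cache, activations, and runtime overhead
    are deliberately left out of this estimate.
    """
    return params_billions * bits_per_weight / 8


def headroom_gb(
    total_memory_gb: float, params_billions: float, bits_per_weight: float
) -> float:
    """Memory left for context and overhead after loading the weights.

    A negative value means the weights alone do not fit.
    """
    return total_memory_gb - weight_memory_gb(params_billions, bits_per_weight)
```

Under these assumptions, `weight_memory_gb(27, 4)` gives 13.5 GB and `headroom_gb(24, 27, 4)` gives 10.5 GB, while 16-bit weights would need 54 GB. Whatever remains after the weights is what the context has to fit into, which matters for agentic coding sessions that use long contexts.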

Optimistic Outlook

The rise of efficient local LLMs and improved agent frameworks empowers individual developers and small teams to leverage AI coding assistants without prohibitive costs. This could accelerate innovation, enable privacy-centric development, and foster a more diverse ecosystem of AI-powered tools.

Pessimistic Outlook

While cost-effective, local models may be slower and less capable than frontier cloud models, potentially frustrating users and limiting complex tasks. The hardware requirements for running these models locally could also create a barrier to entry for developers without powerful machines, exacerbating digital divides.
