Optimizing Memory for Large AI Models on NVIDIA Jetson Edge Devices

Sonic Intelligence

NVIDIA outlines strategies to optimize memory for large AI models on Jetson edge devices.

Explain Like I'm Five

"Imagine you have a tiny computer, like the brain of a smart robot. It needs to run very big smart programs (AI models), but it doesn't have much space in its memory. NVIDIA is showing developers tricks to make these big programs fit better and run faster on these small computers, so robots can do more amazing things without needing bigger, more expensive parts."

Deep Intelligence Analysis

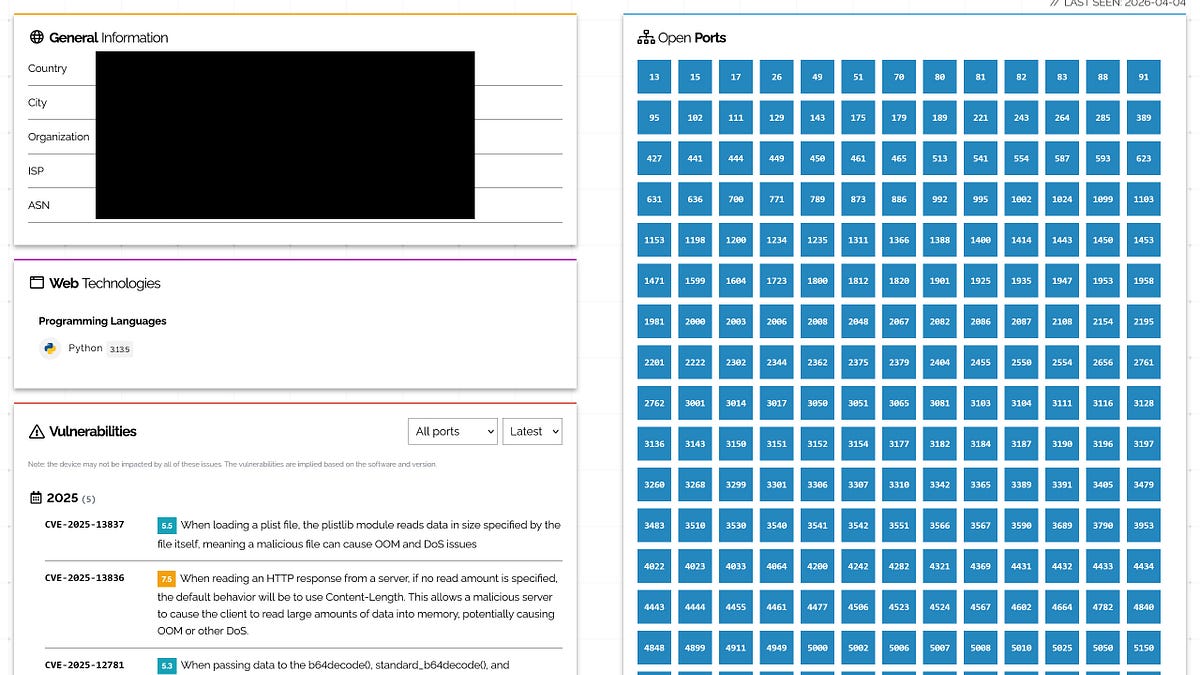

Efficient memory use is paramount for edge AI, where applications often run several concurrent pipelines, such as detection, tracking, and segmentation, within strict power and thermal envelopes. The optimization strategies NVIDIA outlines start at the foundational layers, the Jetson Board Support Package (BSP) and the JetPack SDK, and extend through the inference pipeline, inference frameworks, and quantization. One concrete example: disabling non-essential graphical desktop services can reclaim up to 865 MB of memory, a significant gain on devices such as the Jetson Orin NX and Jetson Orin Nano.
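To check how much memory a change like this actually frees on a given device, one option is to compare the kernel's `MemAvailable` figure before and after. On Ubuntu-based JetPack images, the desktop is commonly disabled at boot with `sudo systemctl set-default multi-user.target`. The helper below is a minimal, hypothetical sketch of that comparison, not NVIDIA's tooling. It assumes the standard Linux `/proc/meminfo` format and that you save one snapshot with the desktop running and one without.

```python
"""Hypothetical helper: compare MemAvailable snapshots taken from /proc/meminfo."""
from pathlib import Path


def parse_meminfo(text: str) -> dict[str, int]:
    """Parse /proc/meminfo-style text into {field: value}; sizes are in kB (KiB)."""
    fields: dict[str, int] = {}
    for line in text.splitlines():
        if ":" not in line:
            continue
        name, rest = line.split(":", 1)
        parts = rest.split()
        if parts:
            # First token is the number; an optional "kB" unit follows it.
            fields[name.strip()] = int(parts[0])
    return fields


def available_mib(text: str) -> float:
    """Return MemAvailable from a snapshot, converted from KiB to MiB."""
    return parse_meminfo(text)["MemAvailable"] / 1024


def reclaimed_mib(before: str, after: str) -> float:
    """Extra available memory (MiB) in the 'after' snapshot versus 'before'."""
    return available_mib(after) - available_mib(before)


if __name__ == "__main__":
    meminfo = Path("/proc/meminfo")
    if meminfo.exists():
        # Run once with the desktop enabled and once without, then compare.
        print(f"MemAvailable: {available_mib(meminfo.read_text()):.0f} MiB")
```

`MemAvailable` is used rather than `MemFree` because it also counts reclaimable page cache, so it tracks what a new inference process could realistically obtain.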

The strategic implication is that sophisticated physical AI agents and autonomous robots become viable on edge hardware sooner. By making larger models feasible on-device, NVIDIA lowers the barrier to entry for advanced AI deployment in areas ranging from industrial automation to smart infrastructure. These optimizations do not remove the fundamental constraints of edge computing, however: developers must still balance model complexity against hardware limits. The ongoing challenge will be extending what can run on-device, which in turn drives demand for more efficient architectures and software stacks for the next generation of edge applications.

Visual Intelligence

flowchart LR
    A["Edge AI Challenge"] --> B["Limited Memory"];
    B --> C["Inefficient Use"];
    C --> D["Bottlenecks"];
    A --> E["Optimization Strategies"];
    E --> F["Jetson BSP"];
    E --> G["Inference Frameworks"];
    E --> H["Quantization"];
    F --> I["Reclaim Memory"];
    I --> J["Enable Complex Workloads"];

Auto-generated diagram · AI-interpreted flow

Impact Assessment

As generative AI models move from data centers to edge devices, efficient memory management becomes critical for deploying complex AI agents and autonomous robots in real-world applications. This guidance from NVIDIA directly addresses a core bottleneck, enabling broader adoption and more sophisticated edge AI capabilities.

Key Details

- Edge devices have strict memory limits, and on Jetson the CPU and GPU share the same physical memory.

- Memory optimization can improve performance, enable complex workloads, and reduce system costs.

- Strategies cover Jetson BSP, JetPack, inference pipeline, inference frameworks, and quantization.

- Disabling graphical desktop services can reclaim up to 865 MB of memory.

- Optimizations apply to Jetson Orin NX and Jetson Orin Nano.
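Because the CPU and GPU draw from one shared memory pool, a quick way to see why quantization matters is to estimate the weight footprint at different precisions and compare it against what remains after the OS and other pipelines. The sketch below does only that arithmetic. The 7-billion-parameter model, 8 GB total, and 2 GB reserved figures are hypothetical examples, not numbers from NVIDIA's guidance. It ignores activations, KV cache, and runtime buffers, so real usage will be higher.

```python
"""Back-of-envelope weight-memory estimate under quantization (illustrative only)."""

# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_gb(num_params: float, precision: str) -> float:
    """Memory for the model weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


def fits(num_params: float, precision: str, total_gb: float, reserved_gb: float) -> bool:
    """True if the weights fit in the shared CPU/GPU memory left over.

    reserved_gb is a hypothetical allowance for the OS, desktop services,
    and other concurrent pipelines; weights only, no activations or buffers.
    """
    return weight_gb(num_params, precision) <= total_gb - reserved_gb


if __name__ == "__main__":
    params = 7e9  # hypothetical 7B-parameter model
    for p in ("fp16", "int8", "int4"):
        ok = fits(params, p, total_gb=8.0, reserved_gb=2.0)
        print(f"{p}: {weight_gb(params, p):.1f} GB, fits={ok}")
```

With these assumed numbers, FP16 (14 GB) and INT8 (7 GB) exceed the 6 GB left over, while INT4 (3.5 GB) fits. Lowering `reserved_gb`, for example by disabling the desktop, has the same effect as a larger device.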

Optimistic Outlook

By maximizing memory efficiency, developers can deploy larger, more capable AI models on existing edge hardware, accelerating innovation in autonomous systems and physical AI agents. This optimization reduces costs and power consumption, making advanced AI more accessible and sustainable for a wider range of edge applications.

Pessimistic Outlook

Despite optimizations, edge devices inherently face significant memory constraints compared to cloud environments, potentially limiting the ultimate scale and complexity of models that can be deployed. Relying on specific vendor-provided tools and techniques might also create vendor lock-in or require significant effort for developers using alternative hardware or software stacks.
