NVIDIA nvCOMP Slashes LLM Checkpointing Costs by Optimizing Idle GPU Time

Sonic Intelligence

The Gist

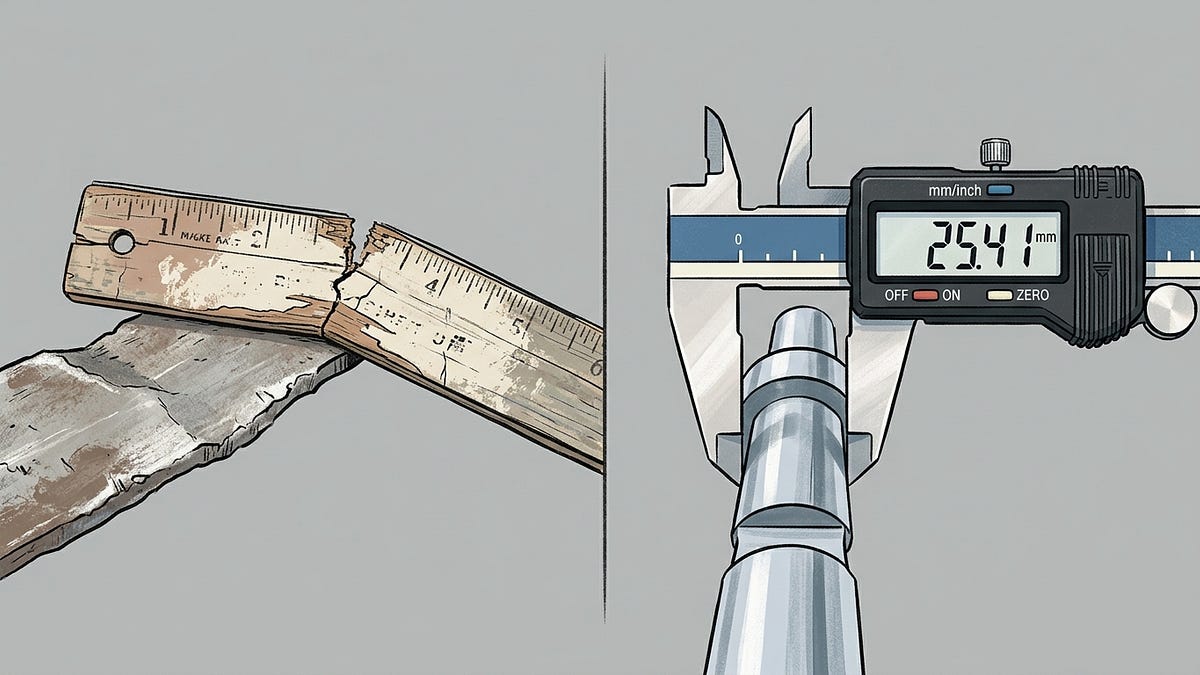

NVIDIA nvCOMP significantly reduces LLM training costs by compressing checkpoints.

Explain Like I'm Five

"Imagine you're building a giant LEGO castle, and every few minutes, you have to stop and take a picture of it to make sure you can rebuild it if it falls over. These pictures take up a lot of space, and while you're taking them, you can't build. This new trick helps you take much smaller pictures very quickly, so you can keep building your castle without wasting time or money."

Deep Intelligence Analysis

The optimizer state, particularly AdamW's first and second moment estimates, is four times the size of the model weights, making it the largest contributor to checkpoint size. Meta's Llama 3 training run saw 419 interruptions over 54 days, which underscores the need for frequent checkpointing (every 15-30 minutes). At a 30-minute interval, a 70B model generates 1.13 PB of checkpoint data per month. The proposed solution applies lossless compression, which can be implemented in around 30 lines of Python and accelerated by technologies like NVIDIA nvCOMP. This approach cuts storage costs by tens of thousands of dollars per month and also reduces the far greater cost of GPUs sitting idle during synchronous checkpoint writes.
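To make the idea of a losslessly compressed checkpoint concrete, here is a minimal sketch of the write and read path. It is not the implementation from the NVIDIA article: it uses the standard library's `zlib` on the CPU as a stand-in for a GPU codec such as nvCOMP, and the helper names `save_compressed` and `load_compressed` are hypothetical.

```python
import pickle
import zlib


def save_compressed(state: dict, path: str, level: int = 1) -> tuple[int, int]:
    """Serialize `state`, compress it losslessly, and write it to `path`.

    Returns (raw_bytes, compressed_bytes) so the caller can log the ratio.
    """
    raw = pickle.dumps(state, protocol=pickle.HIGHEST_PROTOCOL)
    # ASSUMPTION: zlib on the CPU stands in for a GPU codec such as nvCOMP.
    packed = zlib.compress(raw, level)
    with open(path, "wb") as f:
        f.write(packed)
    return len(raw), len(packed)


def load_compressed(path: str) -> dict:
    """Read a file written by save_compressed and restore the original state."""
    with open(path, "rb") as f:
        return pickle.loads(zlib.decompress(f.read()))
```

Because the compression is lossless, the restored state is byte-for-byte identical to what was saved. The saving comes from writing fewer bytes, which shortens the window in which a synchronous write stalls training.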

By addressing the hidden costs of checkpointing, organizations can significantly improve the return on investment for their LLM training infrastructure. This efficiency gain could democratize access to large-scale model development, enabling more players to compete in the frontier AI space. Smaller compressed checkpoints also reduce cold start times, which speeds up iterative development cycles. Asynchronous checkpointing offers a partial solution, but it is less mature and brings memory management challenges, so compression remains a readily deployable, complementary strategy for cutting operating costs.

Visual Intelligence

flowchart LR

A[LLM Training] --> B[Frequent Checkpoints]

B --> C[Large Data Size]

C --> D[Idle GPU Time]

D --> E[High Cost]

E --> F[Compression Solution]

F --> G[Reduced Cost]

G --> H[Faster Training]

Auto-generated diagram · AI-interpreted flow

Impact Assessment

High-frequency LLM checkpointing, crucial for fault tolerance, creates substantial hidden costs from idle GPUs and massive storage. Optimizing this process directly impacts the economic viability and scalability of large-scale AI model training, making advanced models more accessible.
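The idle-GPU cost of synchronous checkpointing can be estimated with a simple model: every checkpoint stalls all GPUs for the duration of the write. The sketch below is illustrative only. The function name and all input values (hourly price, write time) are hypothetical placeholders, not figures from the article, and it does not attempt to reproduce the article's estimates.

```python
def idle_gpu_cost_per_month(
    num_gpus: int,
    gpu_hourly_usd: float,
    write_seconds: float,
    interval_minutes: float,
    days: int = 30,
) -> float:
    """Estimate the monthly cost of GPUs stalled during synchronous writes."""
    checkpoints = days * 24 * 60 / interval_minutes
    # Every GPU in the job waits for the full write on each checkpoint.
    stalled_gpu_hours = checkpoints * write_seconds / 3600 * num_gpus
    return stalled_gpu_hours * gpu_hourly_usd


# Placeholder inputs (ASSUMPTIONS): 128 GPUs at $4.00 per GPU-hour,
# checkpointing every 30 minutes.
baseline = idle_gpu_cost_per_month(128, 4.0, write_seconds=60, interval_minutes=30)
# If compression halved the write time, the stall cost would halve too.
compressed = idle_gpu_cost_per_month(128, 4.0, write_seconds=30, interval_minutes=30)
```

In this model the cost scales linearly with write time, so any reduction in bytes written that shortens the write translates directly into recovered GPU hours.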

Key Details

- A 70B parameter LLM checkpoint is 782 GB, with optimizer state comprising 521 GB (4x model weights).

- Synchronous checkpointing for a 405B model on 128 NVIDIA DGX B200 GPUs can incur over $200,000/month in idle GPU costs.

- A 30-line Python implementation with lossless compression can save $56,000 monthly in storage costs.

- Meta reported 419 interruptions over 54 days during Llama 3 training on 16,384 NVIDIA H100 GPUs.

- Checkpointing every 30 minutes generates 1.13 PB of data per month for a 70B model.
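The size figures above can be sanity-checked with a few lines of arithmetic. The summary does not state the numeric precision of each component, so the layout below is an assumption: FP32 master weights plus two FP32 AdamW moments per parameter, with sizes in binary gigabytes and the "4x" ratio taken against BF16 weights. Under those assumptions the numbers line up with the list.

```python
GIB = 2**30
PARAMS = 70e9

# ASSUMPTION: 4-byte FP32 master weights and two 4-byte AdamW moments.
weights_gib = PARAMS * 4 / GIB
optimizer_gib = PARAMS * 2 * 4 / GIB
checkpoint_gib = weights_gib + optimizer_gib

# ASSUMPTION: the "4x model weights" ratio is relative to 2-byte BF16 weights.
bf16_weights_gib = PARAMS * 2 / GIB
ratio = optimizer_gib / bf16_weights_gib

# One checkpoint every 30 minutes over a 30-day month.
checkpoints_per_month = 30 * 24 * 2
monthly_pb = 782 * checkpoints_per_month / 1e6  # 782 GB each, 1 PB = 1e6 GB

print(f"optimizer state: {optimizer_gib:.1f} GiB")
print(f"full checkpoint: {checkpoint_gib:.1f} GiB")
print(f"optimizer / BF16 weights: {ratio:.0f}x")
print(f"monthly volume: {monthly_pb:.2f} PB")
```

Halving the interval to 15 minutes doubles the monthly volume in this model, which is why checkpoint frequency and checkpoint size have to be considered together.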

Optimistic Outlook

Widespread adoption of compression techniques like NVIDIA nvCOMP could drastically lower the operational expenses for LLM training, accelerating research and development. This efficiency gain allows more resources to be allocated to model quality and throughput, fostering innovation across the AI landscape.

Pessimistic Outlook

If these cost inefficiencies are not addressed, the prohibitive expenses of large-scale LLM training could concentrate advanced AI development in the hands of a few well-funded entities. Over-reliance on proprietary solutions might also limit flexibility and open-source contributions in the long term.

