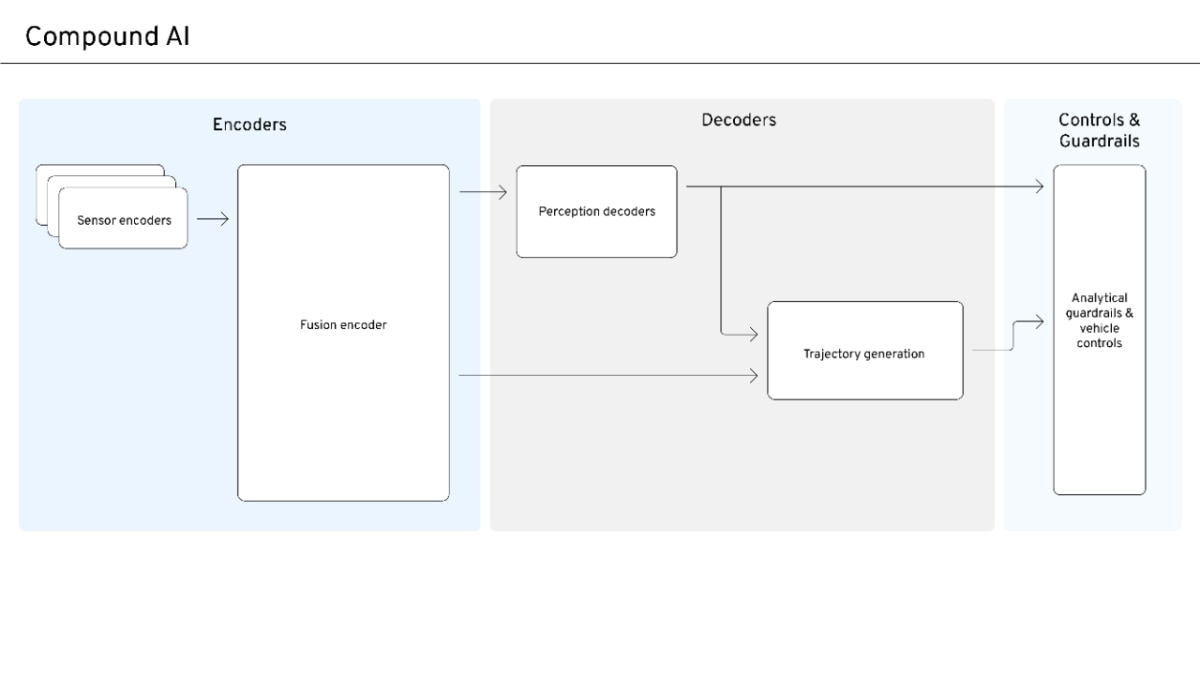

Object-Oriented World Modeling Redefines Robotic Reasoning

Sonic Intelligence

A new framework, OOWM, structures embodied reasoning in robotics using object-oriented programming principles.

Explain Like I'm Five

"Imagine a robot trying to clean a messy room. Instead of just guessing what to do, this new idea, OOWM, teaches the robot to think like a computer programmer. It helps the robot understand all the objects in the room (for example, a chair is a 'thing' that has 'legs') and how to move them around, step by step, like following a recipe. This makes the robot much better at planning and at actually doing its job, with fewer mistakes."

Deep Intelligence Analysis

OOWM's core innovation lies in explicitly structuring both its understanding of the environment and its control logic. It uses the Unified Modeling Language (UML): Class Diagrams ground visual perception in precise object hierarchies, and Activity Diagrams translate plans into executable control flows. This programmatic representation offers a clarity and fidelity that unstructured natural-language reasoning lacks. The framework also uses a three-stage training pipeline that combines Supervised Fine-Tuning (SFT) with Group Relative Policy Optimization (GRPO); crucially, it relies on outcome-based rewards to implicitly optimize the underlying object-oriented reasoning structure. This enables effective learning even from sparse annotations, a common challenge in robotics.
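The source does not give OOWM's reward function or training code. As a rough illustration of how GRPO can learn from outcome-only rewards, the sketch below scores a group of sampled plans for one task with a hypothetical binary success reward, then normalizes each reward against its own group's mean and standard deviation. This group-relative advantage is what GRPO uses in place of a learned value critic. The function names and the binary reward are assumptions, and the policy-gradient update itself is omitted.

```python
import statistics


def outcome_reward(plan_succeeded: bool) -> float:
    """Hypothetical sparse reward: 1.0 if the executed plan reached the goal, else 0.0.

    Only the final outcome is scored; intermediate reasoning steps are not annotated.
    """
    return 1.0 if plan_succeeded else 0.0


def group_relative_advantages(rewards: list[float], eps: float = 1e-8) -> list[float]:
    """GRPO-style advantages: normalize each reward by its own group's mean and std.

    The group mean acts as the baseline instead of a learned value critic.
    This sketch uses the population standard deviation.
    """
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    return [(r - mean) / (std + eps) for r in rewards]


# Four hypothetical plans sampled for the same task; two succeed when executed.
outcomes = [True, False, True, False]
rewards = [outcome_reward(o) for o in outcomes]
advantages = group_relative_advantages(rewards)
print([round(a, 3) for a in advantages])  # [1.0, -1.0, 1.0, -1.0]
```

If every plan in a group receives the same reward, all advantages are zero and that group contributes no learning signal. Implementations differ on details such as population versus sample standard deviation.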

Extensive evaluations on the MRoom-30k benchmark show that OOWM significantly outperforms unstructured textual baselines on critical metrics: planning coherence, execution success, and structural fidelity. These results suggest that robotic systems could achieve far greater autonomy and reliability in complex tasks, moving beyond reactive behaviors toward context-aware action. The implications extend to industrial automation, service robotics, and even autonomous vehicles, where explicit, verifiable world models are paramount for safety and performance. This shift toward formal, programmatic world modeling could become an architectural blueprint for the next generation of embodied AI.

EU AI Act Art. 50 Compliant: This analysis is based solely on the provided source material, ensuring transparency and preventing hallucination.

Visual Intelligence

Visual Perception → Class Diagrams → State Abstraction → Activity Diagrams → Control Policy → Robot Execution

Auto-generated diagram · AI-interpreted flow
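To make the Activity Diagram stage concrete, here is a minimal sketch of an activity diagram treated as an executable control flow. Action nodes mutate a symbolic state, a decision node branches on a guard, and a loop repeats until the guard fails. The node classes, the `run_activity` interpreter, and the tidy-up task are hypothetical illustrations, not OOWM's actual representation.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Union

State = dict  # hypothetical symbolic state: a plain dict of named slots


@dataclass
class ActionNode:
    """Applies an effect to the state, then moves to `next_node` (None = final node)."""
    name: str
    effect: Callable[[State], None]
    next_node: Optional[str] = None


@dataclass
class DecisionNode:
    """Branches on a guard predicate evaluated over the current state."""
    guard: Callable[[State], bool]
    if_true: str
    if_false: str


Node = Union[ActionNode, DecisionNode]


def run_activity(nodes: dict[str, Node], start: str, state: State, max_steps: int = 100) -> list[str]:
    """Walk the activity graph from `start`, applying effects and recording action names."""
    trace: list[str] = []
    current: Optional[str] = start
    steps = 0
    while current is not None:  # None marks the final node
        if steps >= max_steps:
            raise RuntimeError("activity did not reach a final node within max_steps")
        steps += 1
        node = nodes[current]
        if isinstance(node, DecisionNode):
            current = node.if_true if node.guard(state) else node.if_false
        else:
            node.effect(state)
            trace.append(node.name)
            current = node.next_node
    return trace


# Hypothetical tidy-up activity: keep picking items off the floor until it is clear.
def pick_up(state: State) -> None:
    state["held"] = state["floor"].pop()


def place_in_basket(state: State) -> None:
    state["basket"].append(state.pop("held"))


def report_done(state: State) -> None:
    state["done"] = True


nodes = {
    "check_floor": DecisionNode(guard=lambda s: bool(s["floor"]), if_true="pick", if_false="finish"),
    "pick": ActionNode("pick_up", pick_up, next_node="place"),
    "place": ActionNode("place_in_basket", place_in_basket, next_node="check_floor"),
    "finish": ActionNode("report_done", report_done),
}

state = {"floor": ["sock", "book"], "basket": []}
trace = run_activity(nodes, "check_floor", state)
print(trace)            # ['pick_up', 'place_in_basket', 'pick_up', 'place_in_basket', 'report_done']
print(state["basket"])  # ['book', 'sock']
```

The `max_steps` guard is a design choice for the sketch: a plan whose loop never terminates fails loudly instead of hanging the robot.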

Impact Assessment

OOWM represents a paradigm shift in robotic planning: rather than relying on linear natural-language reasoning, it explicitly represents the state space, object hierarchies, and causal dependencies. This structured approach is critical for developing robust, coherent, and effective embodied AI systems capable of complex interactions in dynamic environments.
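One way to read "causal dependencies" is as a partial order over subgoals: a step can run only after the steps it depends on. Assuming that reading, the sketch below uses Python's standard `graphlib.TopologicalSorter` (Python 3.9+) to turn a hypothetical dependency map for a tidy-up task into an executable order. The subgoal names and the `plan_order` helper are invented for illustration.

```python
from graphlib import CycleError, TopologicalSorter


def plan_order(dependencies: dict[str, set[str]]) -> list[str]:
    """Return an order in which every subgoal comes after all of its prerequisites.

    Raises graphlib.CycleError if the dependencies are circular.
    """
    return list(TopologicalSorter(dependencies).static_order())


# Hypothetical subgoals: each key lists the subgoals that must happen before it.
dependencies = {
    "clear_table": {"pick_up_cup", "pick_up_plate"},
    "wipe_table": {"clear_table"},
    "load_dishwasher": {"pick_up_cup", "pick_up_plate"},
    "start_dishwasher": {"load_dishwasher"},
}

order = plan_order(dependencies)
print(order)

# A circular dependency is flagged before anything is executed.
try:
    plan_order({"a": {"b"}, "b": {"a"}})
except CycleError as err:
    print("inconsistent plan, cycle:", err.args[1])
```

Several valid orders usually exist; `static_order` returns one of them, so callers should rely only on the prerequisite guarantee, not on a specific sequence.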

Key Details

- Object-Oriented World Modeling (OOWM) structures embodied reasoning via software engineering formalisms.

- It redefines the world model as an explicit symbolic tuple $W = \langle S, T \rangle$, where $S$ is the state abstraction and $T$ is the control policy.

- OOWM leverages UML (Class Diagrams for perception, Activity Diagrams for planning).

- A three-stage training pipeline combines Supervised Fine-Tuning (SFT) with Group Relative Policy Optimization (GRPO).

- Evaluations on the MRoom-30k benchmark show that OOWM significantly outperforms unstructured textual baselines in planning coherence, execution success, and structural fidelity.
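The tuple $W = \langle S, T \rangle$ can be sketched in Python, assuming $S$ is a dictionary of typed objects produced by grounding perception labels into a small class hierarchy (the Class Diagram role) and $T$ is a function that maps that state to the next action. Every class, label, and the `tidy_policy` rule below are hypothetical; the source does not specify OOWM's schema.

```python
from dataclasses import dataclass
from typing import Callable, NamedTuple, Optional


# Hypothetical class hierarchy standing in for a UML Class Diagram.
@dataclass
class SceneObject:
    obj_id: str
    location: str


@dataclass
class Furniture(SceneObject):
    movable: bool = False


@dataclass
class Chair(Furniture):
    movable: bool = True  # overrides the Furniture default
    num_legs: int = 4


@dataclass
class Item(SceneObject):
    graspable: bool = True


StateAbstraction = dict[str, SceneObject]                    # S: object id -> typed object
ControlPolicy = Callable[[StateAbstraction], Optional[str]]  # T: state -> next action


class WorldModel(NamedTuple):
    S: StateAbstraction
    T: ControlPolicy


# Hypothetical mapping from perception labels to classes in the hierarchy.
CLASS_MAP = {"chair": Chair, "table": Furniture, "cup": Item}


def ground(detections: list[tuple[str, str, str]]) -> StateAbstraction:
    """Turn (label, obj_id, location) detections into typed objects."""
    return {obj_id: CLASS_MAP[label](obj_id, location) for label, obj_id, location in detections}


def tidy_policy(state: StateAbstraction) -> Optional[str]:
    """Hypothetical rule: move any graspable item that is not on the shelf onto it."""
    for obj in state.values():
        if isinstance(obj, Item) and obj.graspable and obj.location != "shelf":
            return f"pick_and_place({obj.obj_id}, shelf)"
    return None  # nothing left to tidy


detections = [("chair", "chair_1", "floor"), ("cup", "cup_1", "floor"), ("table", "table_1", "floor")]
W = WorldModel(S=ground(detections), T=tidy_policy)
print(W.T(W.S))  # pick_and_place(cup_1, shelf)
```

Because the state is typed, the policy can test `isinstance(obj, Item)` rather than parse free text, which is the kind of explicit, checkable structure the article attributes to OOWM.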

Optimistic Outlook

This framework promises to unlock more sophisticated and reliable robotic behaviors, accelerating the development of truly intelligent autonomous agents. By providing a clear, programmatic structure for world modeling, OOWM could simplify the design and debugging of complex robotic systems, making advanced robotics more accessible.

Pessimistic Outlook

The inherent complexity of integrating software engineering formalisms like UML into AI training pipelines might present significant development challenges. Although the approach is promising, moving from unstructured textual reasoning to explicit symbolic representation could require substantial computational resources and specialized expertise, potentially slowing adoption.
