Optimizing Robotics AI for Embedded Platforms: NXP's VLA Deployment Strategy

Sonic Intelligence

NXP details best practices for deploying Vision-Language-Action models on embedded robotic platforms.

Explain Like I'm Five

"Imagine you have a tiny robot that needs to see, understand, and move its arm to pick up a toy, but it has a very small brain and not much power. This paper is like a guide that shows how to teach this small robot really well, using good videos and smart tricks, so it can move smoothly and quickly without getting stuck, even though it's small."

Deep Intelligence Analysis

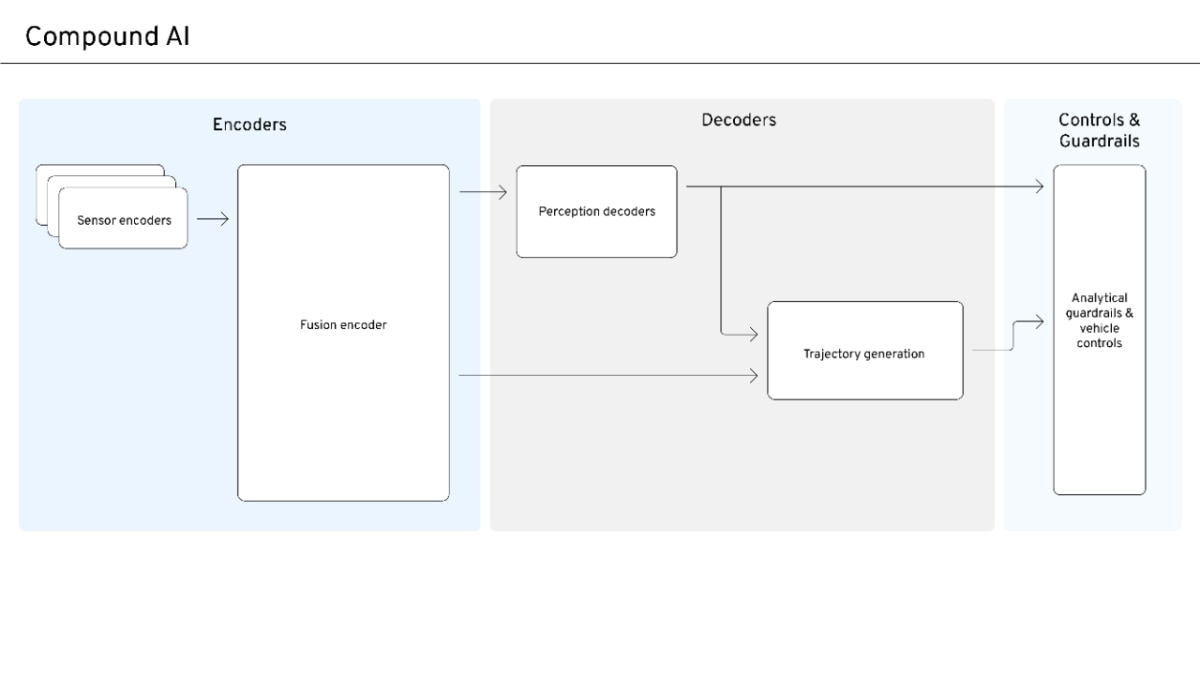

The transition from text-only reasoning to multimodal systems, encompassing visual perception (VLMs) and robot action generation (VLAs), has opened new possibilities for robotic autonomy. However, running these models synchronously is inefficient: the robot arm sits idle while each inference completes, which produces oscillatory behavior and delayed corrections. The proposed solution is asynchronous inference, which decouples the generation of commands from their execution so that motion stays smooth and continuous. This approach only works if the end-to-end inference latency stays shorter than the time it takes to execute the current actions. That requirement imposes a strict upper bound on inference latency, or equivalently a lower bound on model throughput.
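The latency condition above can be made concrete with a small timing model. This is a minimal sketch under simplifying assumptions, not NXP's implementation: it assumes one inference produces one chunk of actions, that both durations are constant, and the names `Timing`, `sync_cycle`, and `async_cycle` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Timing:
    inference_s: float  # end-to-end latency to produce one action chunk
    execution_s: float  # time the arm needs to execute that chunk


def sync_cycle(t: Timing) -> tuple[float, float]:
    """Synchronous loop: infer, then execute. Returns (cycle time, arm idle time)."""
    # The arm waits for the whole inference before it can move.
    return t.inference_s + t.execution_s, t.inference_s


def async_cycle(t: Timing) -> tuple[float, float]:
    """Asynchronous loop: the next chunk is inferred while the current one executes."""
    # The slower of the two stages sets the steady-state cycle time.
    cycle = max(t.inference_s, t.execution_s)
    # The arm only stalls if inference takes longer than execution.
    idle = max(0.0, t.inference_s - t.execution_s)
    return cycle, idle


def meets_async_budget(t: Timing) -> bool:
    """True when inference finishes before the current chunk runs out."""
    return t.inference_s < t.execution_s
```

With illustrative numbers such as `Timing(inference_s=0.25, execution_s=0.5)`, the synchronous loop idles for the full 0.25 s of every cycle, while the asynchronous loop has no idle time. If inference were slower than execution, the asynchronous loop would stall as well, which is why the latency bound matters.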

The article emphasizes that bringing VLA models to embedded platforms is not merely a matter of model compression but a systems engineering problem that requires architectural decomposition, latency-aware scheduling, and hardware-aligned execution. NXP’s guide offers practical best practices, starting with careful dataset recording. The key principles for data collection are consistency (fixed cameras, controlled lighting, strong contrast), fixed calibration, and, crucially, avoiding "cheating": the model must only see information that will also be available at inference time. The guide recommends a gripper camera for precise grasps and a three-camera setup (Top, Gripper, Left) as a balance between accuracy and latency, since each additional viewpoint improves coverage but adds inference cost. The article also reports that the NXP i.MX95 achieves real-time performance after optimization. Taken together, these practices are what turn advances in foundation models into deployable robotic systems.
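The "no cheating" rule for data collection can be checked mechanically before training. The sketch below is illustrative only: the key names (such as `observation.images.top` and `object_pose_ground_truth`) and the helper functions are hypothetical and are not taken from NXP's guide or from any specific dataset format.

```python
def leaked_keys(recorded_keys, inference_keys):
    """Return recorded observation keys that will not exist at inference time."""
    return sorted(set(recorded_keys) - set(inference_keys))


def strip_leaks(frame, inference_keys):
    """Keep only the fields of one recorded frame that the robot can also see when deployed."""
    allowed = set(inference_keys)
    return {key: value for key, value in frame.items() if key in allowed}


# Hypothetical inputs available on the deployed robot: three cameras plus joint state.
INFERENCE_KEYS = [
    "observation.images.top",
    "observation.images.gripper",
    "observation.images.left",
    "observation.state",
]

# Hypothetical recorded frame that also contains a privileged signal from the recording rig.
frame = {
    "observation.images.top": "top.png",
    "observation.images.gripper": "gripper.png",
    "observation.images.left": "left.png",
    "observation.state": [0.0, 0.1, 0.2],
    "object_pose_ground_truth": [0.4, 0.1, 0.0],
}

print(leaked_keys(frame.keys(), INFERENCE_KEYS))
```

Running a check like this over every episode flags fields, such as a ground-truth object pose from an external tracker, that would let the policy learn from information it will not have once deployed.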

Impact Assessment

Bridging the gap between advanced AI models and resource-constrained embedded robotics is crucial for practical, real-world applications. This work provides actionable strategies to overcome deployment hurdles, enabling more autonomous and responsive robotic systems in diverse industrial and consumer settings.

Key Details

- The article addresses challenges in deploying Vision-Language-Action (VLA) models on embedded robotic platforms.

- Constraints include limited compute, memory, and power, as well as real-time control requirements.

- Asynchronous inference is proposed to enable smooth, continuous motion by decoupling generation from execution.

- NXP provides best practices for recording reliable robotic datasets and fine-tuning VLA policies (ACT and SmolVLA).

- NXP i.MX95 achieves real-time performance after optimization for VLA models.

- NXP recommends a gripper camera and a three-camera setup (Top, Gripper, Left) to balance accuracy against latency.
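The decoupling of generation from execution listed above can be sketched as a producer/consumer loop. This is a minimal illustration using Python's standard `queue` and `threading` modules, not the scheduling NXP describes. The function `run_async` and its parameters (`policy`, `execute`, `get_observation`, `n_chunks`, `max_pending`) are hypothetical names.

```python
import queue
import threading


def run_async(policy, execute, get_observation, n_chunks, max_pending=1):
    """Generate action chunks in a background thread while the caller executes them."""
    # Bounded queue: inference may run at most `max_pending` chunks ahead of execution.
    chunks = queue.Queue(maxsize=max_pending)

    def producer():
        for _ in range(n_chunks):
            # Inference runs here, overlapping with execution in the main thread.
            chunks.put(policy(get_observation()))
        chunks.put(None)  # sentinel: no more chunks will arrive

    worker = threading.Thread(target=producer, daemon=True)
    worker.start()

    executed = []
    while True:
        chunk = chunks.get()  # blocks only if inference has fallen behind
        if chunk is None:
            break
        for action in chunk:
            execute(action)
            executed.append(action)

    worker.join()
    return executed
```

In this sketch the arm only waits when `chunks.get()` blocks, which happens when inference is slower than execution. A real controller would also need to handle stale observations, since each chunk is computed from an observation captured while an earlier chunk was still running.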

Optimistic Outlook

Successful implementation of these optimization strategies will unlock the full potential of VLA models for edge robotics, leading to more intelligent, agile, and energy-efficient autonomous systems. This could accelerate innovation in areas like manufacturing automation, logistics, and service robotics, making advanced AI capabilities widely accessible.

Pessimistic Outlook

The complex systems engineering, stringent data quality demands, and hardware-specific optimizations required for embedded VLA deployment may pose significant barriers to entry for many developers. This could limit the widespread adoption of these advanced robotic capabilities, confining them to highly specialized and resource-intensive projects.
