Off Grid: On-Device AI Web Browsing and Tools, 3x Faster

THE GIST: Off Grid lets on-device AI use tools like web search and calculators, and runs 3x faster with a configurable KV cache.

AI Cost Observability: The Missing Optimization Layer

THE GIST: AI cost observability is crucial for understanding and optimizing AI spending, which is often opaque.

AIR Blackbox: Open-Source EU AI Act Compliance for AI Agents

THE GIST: AIR Blackbox offers open-source tools that help AI agents comply with the EU AI Act ahead of its 2026 deadline.

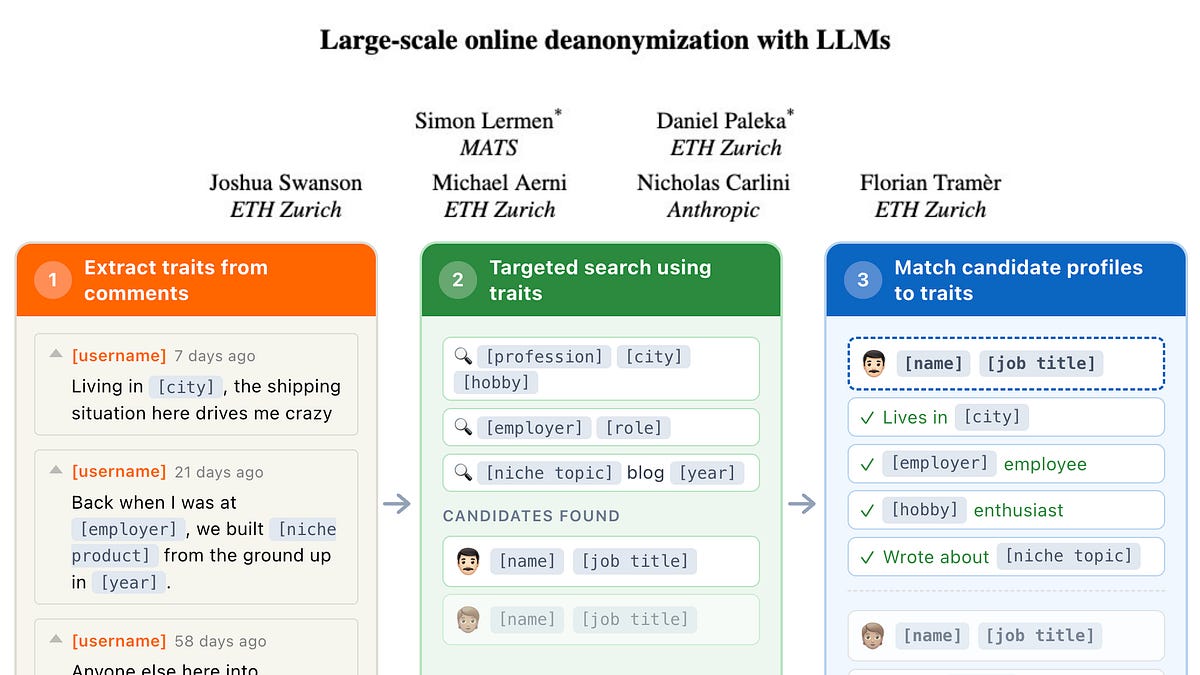

LLMs Enable Large-Scale Online Deanonymization

THE GIST: LLMs can deanonymize users online with high precision across platforms.

Zones of Distrust: Open Security Architecture for Autonomous AI Agents

THE GIST: Zones of Distrust (ZoD) extends Zero Trust principles to autonomous AI agents, focusing on system safety even when agents are compromised.

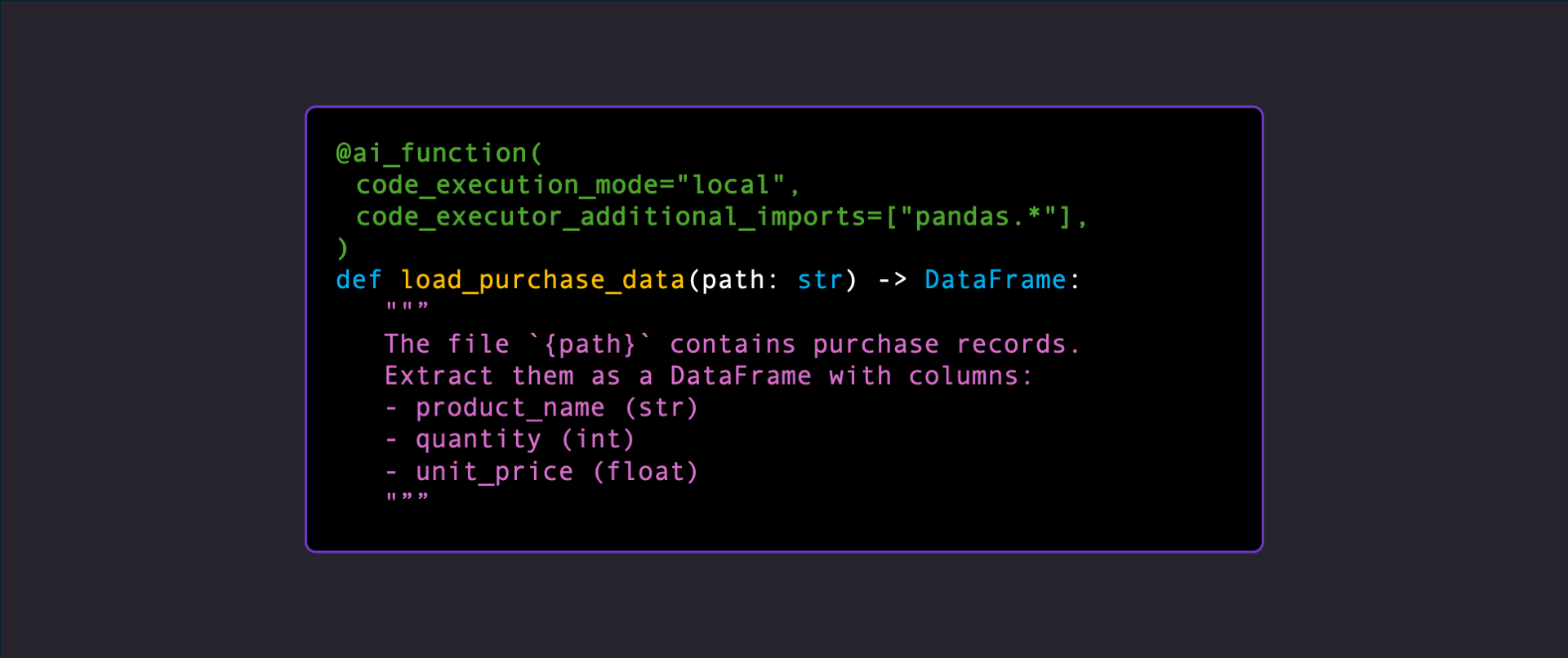

AI Functions: Executing LLM-Generated Code at Runtime

THE GIST: AI Functions execute LLM-generated code at runtime with continuous verification, marking a shift towards AI-driven runtime software development.

New Metrics Quantify AI Agent Reliability Across Key Dimensions

THE GIST: Researchers propose twelve metrics to evaluate AI agent reliability across consistency, robustness, predictability, and safety.

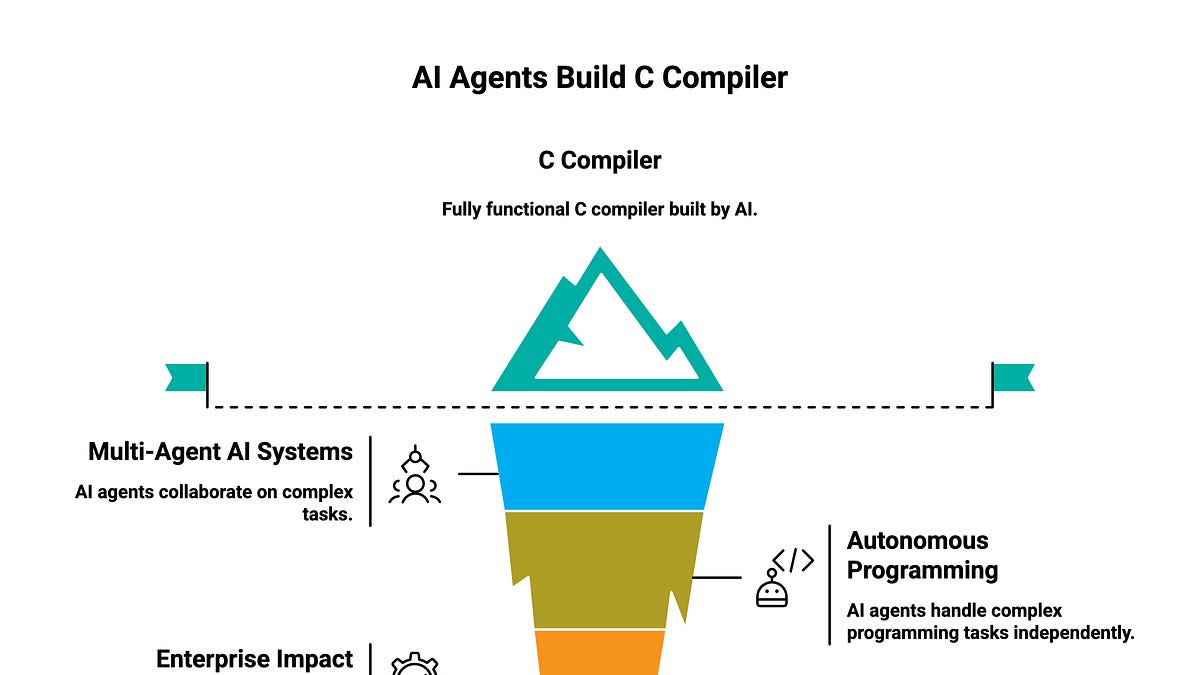

AI Agents Collaborate to Build C Compiler

THE GIST: Sixteen AI agents collaboratively built a C compiler, showcasing the potential of autonomous programming.

Acorn: LLM Framework for Long-Running Agents with Structured I/O

THE GIST: Acorn is a framework for building LLM agents with structured I/O, automatic tool calling, and agentic loops, supporting various LLM providers.