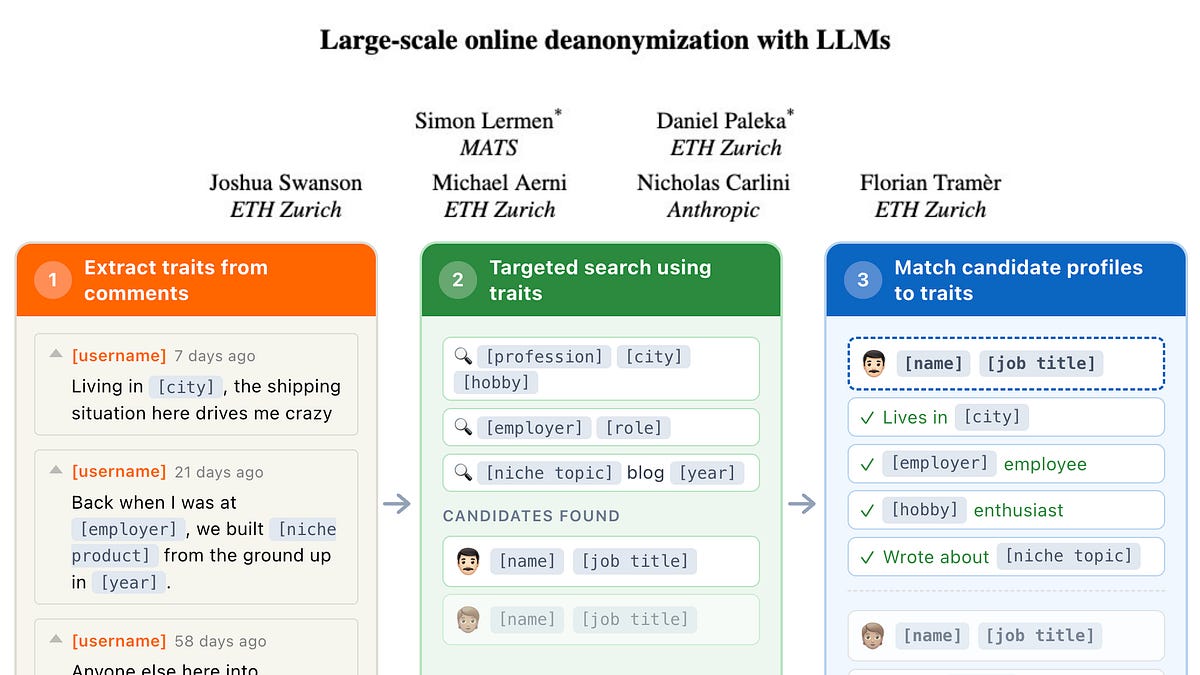

LLMs Enable Large-Scale Online Deanonymization

THE GIST: LLMs can deanonymize online users across platforms with high precision.

Zones of Distrust: Open Security Architecture for Autonomous AI Agents

THE GIST: Zones of Distrust (ZoD) extends Zero Trust principles to autonomous AI agents, aiming to keep the overall system safe even when individual agents are compromised.

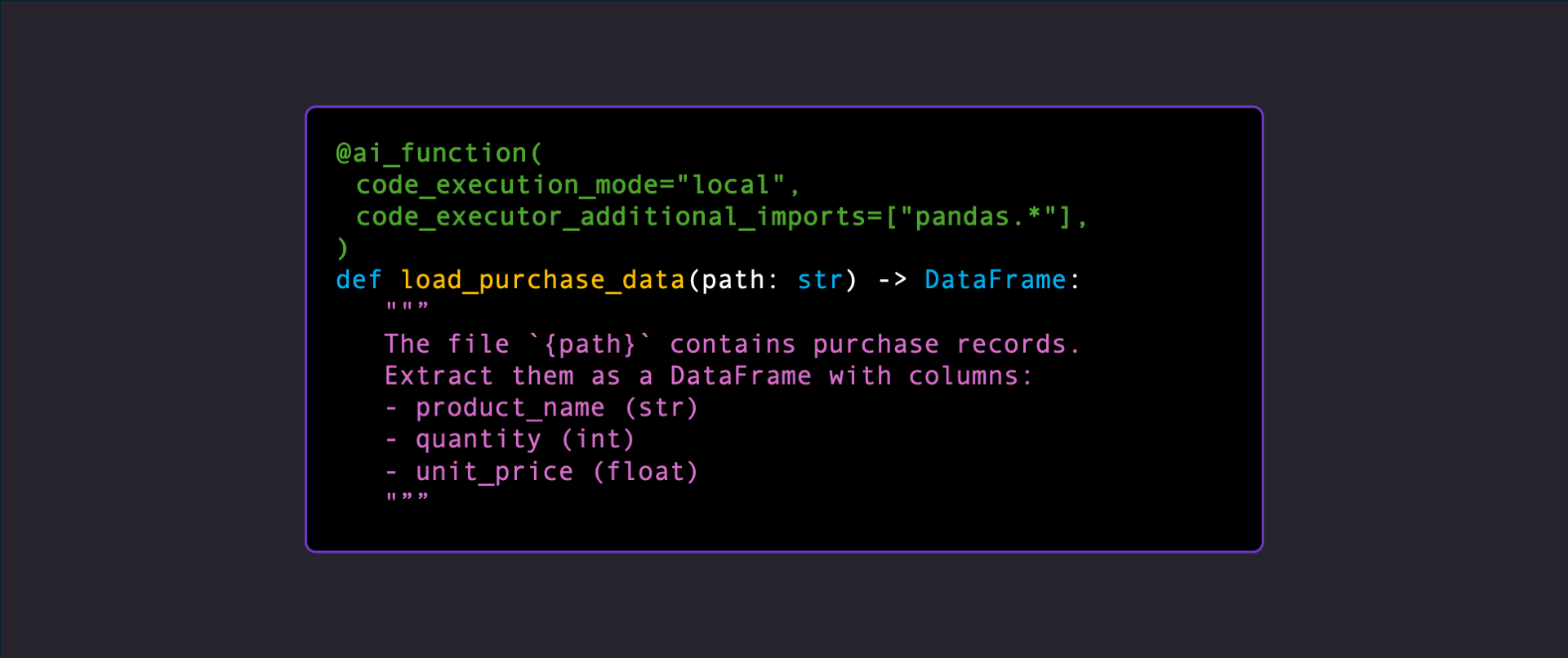

AI Functions: Executing LLM-Generated Code at Runtime

THE GIST: AI Functions execute LLM-generated code at runtime with continuous verification, marking a shift towards AI-driven runtime software development.

New Metrics Quantify AI Agent Reliability Across Key Dimensions

THE GIST: Researchers propose twelve metrics to evaluate AI agent reliability across consistency, robustness, predictability, and safety.

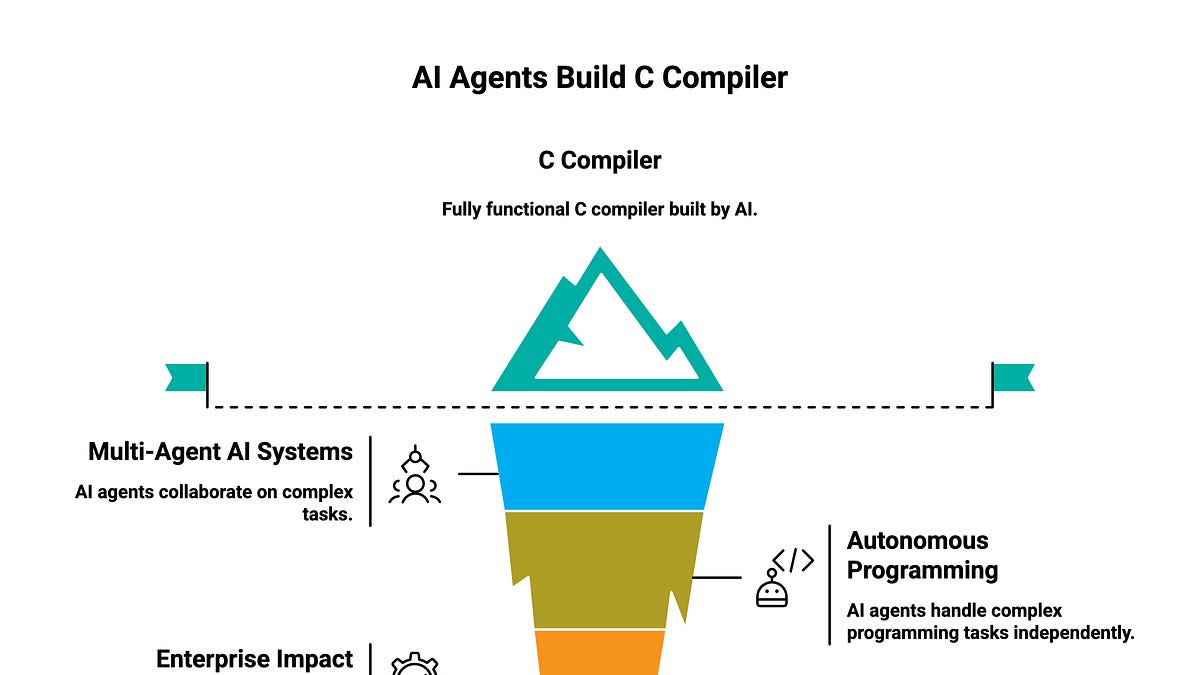

AI Agents Collaborate to Build C Compiler

THE GIST: Sixteen AI agents collaboratively built a C compiler, showcasing the potential of autonomous programming.

Acorn: LLM Framework for Long-Running Agents with Structured I/O

THE GIST: Acorn is a framework for building LLM agents with structured I/O, automatic tool calling, and agentic loops, supporting various LLM providers.

Next-Markdown-mirror: AI-Readable Next.js Pages

THE GIST: Next-Markdown-mirror is a free, open-source tool for serving clean Markdown to AI agents, reducing token usage and improving response quality.

Taiwan's PSMC Joins Intel and SoftBank in AI Memory Initiative

THE GIST: PSMC partners with Intel and SoftBank to develop Z-Angle Memory (ZAM), an alternative to HBM for AI applications.

ClinTrialFinder: AI-Powered Cancer Clinical Trial Matching

THE GIST: ClinTrialFinder uses AI to analyze and rank cancer clinical trials based on suitability and medical evidence, providing plain-language explanations.