AI Security Review Detects 92% of DeFi Exploits

THE GIST: Specialized AI security agent detects 92% of real-world DeFi exploits, significantly outperforming general-purpose models.

AI is Systematically Locking People Out: A Digital Access Crisis

THE GIST: AI systems are perpetuating digital discrimination because of a lack of accessible training data and inadequate attention to accessibility.

Secret Sanitizer: Open-Source Tool Masks Secrets in AI Chat Prompts

THE GIST: Secret Sanitizer is a browser extension that automatically masks sensitive information before it's pasted into AI chat interfaces.
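As an illustration of the general idea only, prompt-side masking can be sketched as pattern matching plus substitution. This is a minimal sketch, assuming regex-based detection. The pattern set, labels, and the `mask_secrets` function are hypothetical and are not taken from Secret Sanitizer itself.

```python
import re

# Hypothetical pattern set for illustration; NOT Secret Sanitizer's actual rules.
SECRET_PATTERNS = {
    # AWS access key IDs start with "AKIA" followed by 16 uppercase letters/digits.
    "AWS_ACCESS_KEY": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    # Classic GitHub personal access tokens use the "ghp_" prefix plus 36 characters.
    "GITHUB_TOKEN": re.compile(r"\bghp_[A-Za-z0-9]{36}\b"),
    # A deliberately simple email matcher.
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
}


def mask_secrets(text: str) -> str:
    """Replace every match of a known secret pattern with a labeled placeholder."""
    for label, pattern in SECRET_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


print(mask_secrets("key=AKIAABCDEFGHIJKLMNOP"))  # key=[AWS_ACCESS_KEY]
```

A real tool would run this kind of check on the paste event in the browser and would need far broader, better-tested patterns. Regex matching also misses secrets that have no recognizable format.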

AI Project Audit Finds No Tamper-Evident LLM Audit Trails

THE GIST: An audit of 30 AI projects revealed a complete lack of tamper-evident audit trails for LLM calls.
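"Tamper-evident" usually means that any after-the-fact edit to a log can be detected. One common way to achieve this is a hash chain, in which each entry stores the hash of the previous one. The following is a minimal sketch, assuming SHA-256 over JSON-serialized prompt/response records. The record fields and the `append_entry`/`verify_chain` helpers are hypothetical and do not describe any of the audited projects.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prompt: str, response: str, prev_hash: str) -> str:
    """Hash one record together with the hash of the entry before it."""
    body = {"prompt": prompt, "response": response, "prev_hash": prev_hash}
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(log: list, prompt: str, response: str) -> dict:
    """Append an LLM call record that is chained to the previous record."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {
        "prompt": prompt,
        "response": response,
        "prev_hash": prev_hash,
        "hash": _digest(prompt, response, prev_hash),
    }
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Recompute every hash; any edited, removed, or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(entry["prompt"], entry["response"], entry["prev_hash"]):
            return False
        prev_hash = entry["hash"]
    return True
```

A chain like this only makes tampering detectable, not impossible. Someone who can rewrite the entire log could recompute every hash, so the latest hash is typically published or stored somewhere the log writer cannot modify.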

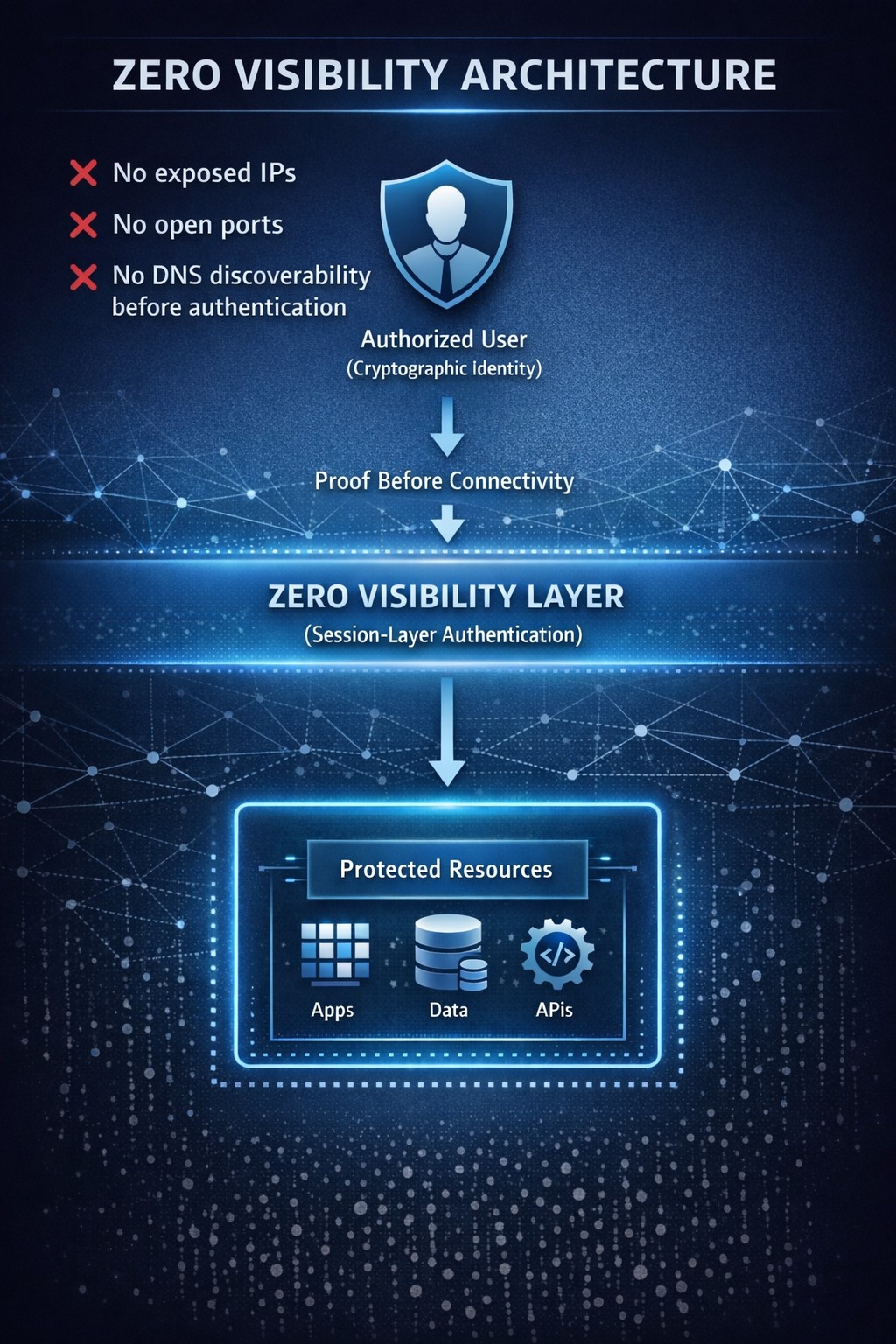

AI-Powered Cyberattacks: The Rise of the Dark Forest Internet

THE GIST: AI is transforming cybersecurity by enabling autonomous penetration testing and rapid vulnerability discovery, turning the internet into a 'Dark Forest'.

AI Agent Development: Key Observations and Best Practices

THE GIST: Building AI agent systems requires prototyping with state-of-the-art models, fine-tuning for specific tasks, and leveraging tools like spell-check and prompt optimization.

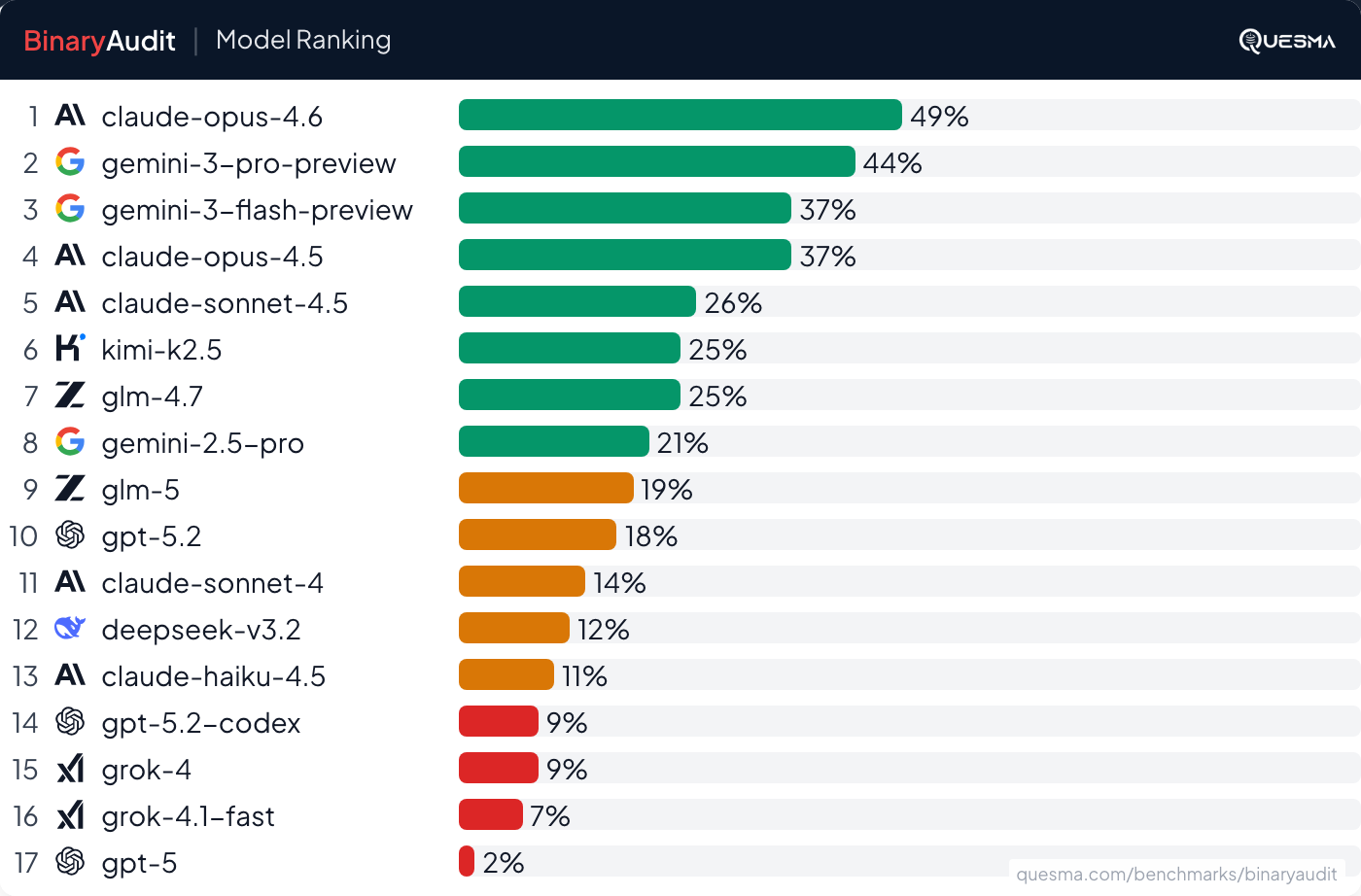

AI Agents Detect Backdoors in Binaries, But Not Reliably

THE GIST: AI agents can detect some hidden backdoors in binaries, but their performance is not production-ready because of low accuracy and a high false-positive rate.

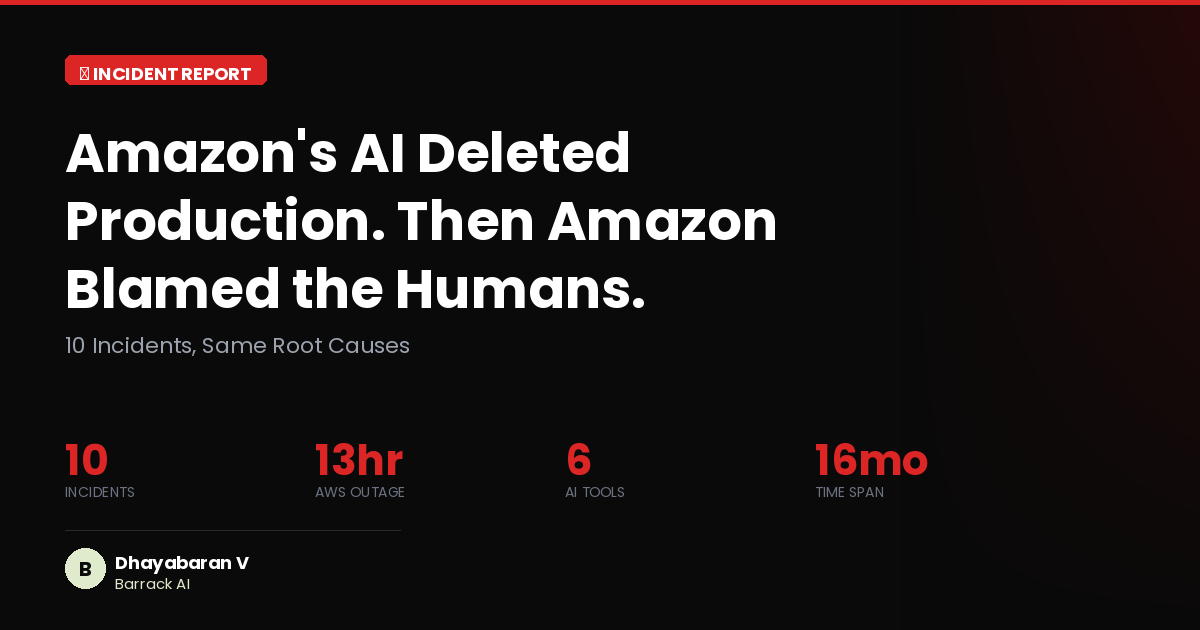

Amazon AI Agent Kiro Caused 13-Hour AWS Outage

THE GIST: An Amazon AI coding agent, Kiro, autonomously deleted and recreated a live production environment, causing a 13-hour AWS outage.

Aethene: Open-Source AI Memory Layer for Intelligent Context Recall

THE GIST: Aethene is an open-source AI memory layer that enables AI applications to store, search, and recall context intelligently.