AI Agents Inherit Dangerous Ambient Authority, Posing Critical Security Risk

Sonic Intelligence

AI agents inherit dangerous ambient authority, demanding a zero-privilege security model.

Explain Like I'm Five

"Imagine giving your toy robot your house keys and telling it to clean your room. It might accidentally open your secret diary or even the front door! AI agents currently get all your computer's 'keys' by default, even if they only need to do a small job. We need to teach them to ask for only the specific 'keys' they need for each task."

Deep Intelligence Analysis

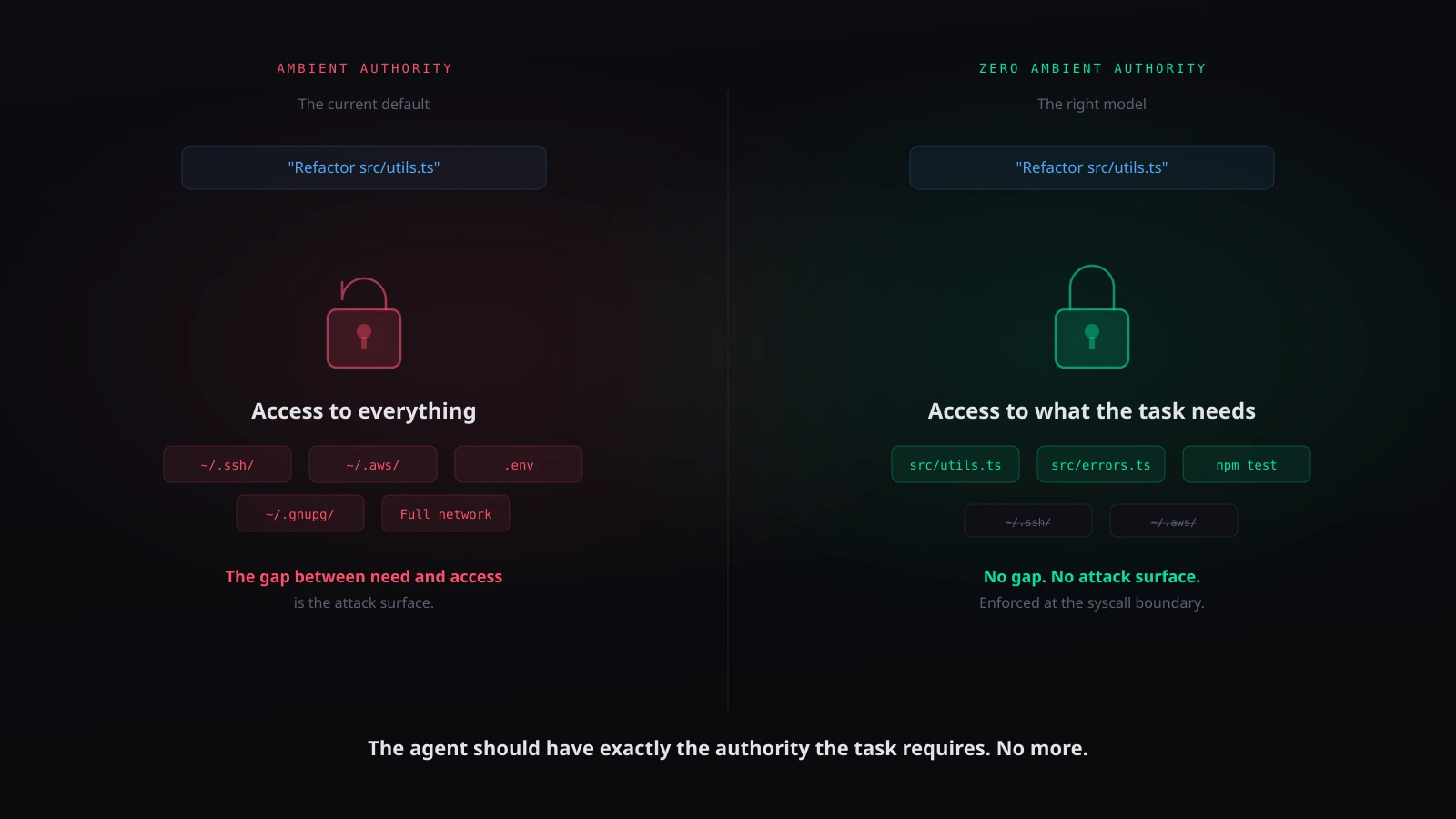

Current AI coding agents, such as Claude Code, Cursor, Aider, and Cline, predominantly operate under an ambient authority model: the agent inherits the full permissions of the user session that launched it, without any explicit grant or review. Some tools offer opt-in mitigations such as approval prompts or sandboxing, but the default posture is broad, unreviewed access. A secure-by-design approach works the other way around: the agent starts with zero authority and receives an explicit, task-specific grant for every resource it needs. The gap between an agent's narrow task (e.g., refactoring a function) and its inherited access (e.g., the entire home directory and cloud credentials) is a direct pathway to unintended actions, data exfiltration, or system compromise, especially because agents are susceptible to prompt injection attacks.
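The zero-authority idea described above can be illustrated with a small deny-by-default check. This is a minimal sketch, not how any of the named tools work: the `AgentSession`, `Grant`, and `AuthorityError` names and the `"read"`/`"write"` operation labels are hypothetical, and it covers only file paths, not network access or credentials.

```python
from dataclasses import dataclass, field
from pathlib import Path


class AuthorityError(PermissionError):
    """Raised when an agent requests a resource it was never granted."""


@dataclass(frozen=True)
class Grant:
    """One explicit, task-specific permission (hypothetical shape)."""
    operation: str  # assumed labels: "read" or "write"
    root: Path      # the grant covers this path and everything beneath it


@dataclass
class AgentSession:
    """Hypothetical agent session that starts with zero authority."""
    task: str
    grants: list[Grant] = field(default_factory=list)

    def grant(self, operation: str, root: str) -> None:
        # Resolve the root once so later comparisons use a canonical path.
        self.grants.append(Grant(operation, Path(root).resolve()))

    def check(self, operation: str, target: str) -> Path:
        # Resolving the target collapses ".." segments and symlinks, so a
        # path cannot escape a granted subtree by traversal.
        resolved = Path(target).resolve()
        for g in self.grants:
            if g.operation == operation and resolved.is_relative_to(g.root):
                return resolved
        # Deny by default: anything without a matching grant is refused.
        raise AuthorityError(
            f"{operation} on {resolved} not granted for task {self.task!r}"
        )


if __name__ == "__main__":
    session = AgentSession(task="refactor one function")
    session.grant("read", "/tmp/project/src")

    session.check("read", "/tmp/project/src/utils.py")  # allowed
    try:
        session.check("read", "/tmp/project/src/../../home/user/.ssh/id_rsa")
    except AuthorityError as err:
        print("denied:", err)
```

The point of the design is that the session holds no permissions until `grant` is called, so the review step happens when authority is handed out rather than after the agent has already acted.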

The industry needs to shift toward a "zero authority" security paradigm for AI agents. That means re-engineering how agents interact with operating systems and resources, replacing implicit inheritance with explicit, granular permissioning. Without that shift, vulnerabilities are likely to multiply as AI agents become more capable and more deeply integrated into critical workflows. The long-term viability and trustworthiness of autonomous AI systems depend on a security framework built on explicit authorization and the principle of least privilege from the ground up, rather than on reactive mitigations.

Impact Assessment

The default security posture of AI agents, inheriting broad user permissions, creates a significant vulnerability. This architectural flaw allows autonomous agents to access sensitive data and execute unintended actions, posing a severe risk to system integrity and data privacy.

Key Details

- AI coding agents inherit full user session permissions by default, including access to every file, network endpoint, and credential the user can reach.

- This ambient authority is not explicitly granted or reviewed, violating the principle of least privilege.

- Current tools like Claude Code, Cursor, Aider, and Cline often default to this broad access.

- The proposed secure model is 'zero authority,' requiring explicit, task-specific grants for resources.
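One concrete form of the inherited access listed above is the process environment: a child process normally receives every environment variable of its parent, including any tokens stored there. The sketch below shows one narrow mitigation, launching a tool with an allowlisted environment instead. The `ALLOWED_ENV` allowlist and the `minimal_env` and `run_tool` helpers are hypothetical names chosen for illustration, and this addresses environment variables only, not files or network access.

```python
import os
import subprocess
import sys

# Hypothetical allowlist; a real deployment would choose its own.
# SYSTEMROOT is included because child processes on Windows often need it.
ALLOWED_ENV = ("PATH", "LANG", "SYSTEMROOT")


def minimal_env(extra=None):
    """Build an environment holding only allowlisted variables plus explicit extras."""
    env = {key: os.environ[key] for key in ALLOWED_ENV if key in os.environ}
    env.update(extra or {})
    return env


def run_tool(argv, extra_env=None):
    """Run a command without passing along the parent's full environment."""
    return subprocess.run(
        argv,
        env=minimal_env(extra_env),  # replaces, rather than extends, the inherited environment
        capture_output=True,
        text=True,
        check=False,
    )


if __name__ == "__main__":
    # Simulate a credential sitting in the parent session's environment.
    os.environ["FAKE_CLOUD_TOKEN"] = "not-a-real-secret"
    probe = "import os; print('FAKE_CLOUD_TOKEN' in os.environ)"
    result = run_tool([sys.executable, "-c", probe])
    print(result.stdout.strip())
```

Any variable the task genuinely needs is passed through `extra_env`, which makes each grant visible at the call site.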

Optimistic Outlook

Implementing a 'zero authority' model could fundamentally enhance AI agent security, fostering greater trust and enabling wider adoption in sensitive environments. This shift would allow for precise control over agent capabilities, mitigating risks associated with autonomous operations.

Pessimistic Outlook

The pervasive nature of ambient authority in current systems suggests a challenging transition to more secure models. Without widespread adoption of explicit permissioning, AI agents will remain a critical attack vector, potentially leading to data breaches and system compromises as their capabilities expand.
