GitHub's LLM Training Policy: Trust or Risk for Proprietary Code?

Sonic Intelligence

Developers question GitHub's LLM training policy for proprietary code.

Explain Like I'm Five

"Imagine you have a secret recipe for a special cookie, and you keep it in a shared cookbook. The cookbook's owner promises not to use your recipe to teach their robot chef, but you wonder whether you can really trust them. If the robot learns your secret, everyone else can make your special cookies too, and you lose your advantage."

Deep Intelligence Analysis

GitHub, as a dominant platform for source code management, provides settings to control whether user code is used for LLM training. Whether those settings can be trusted, however, remains a point of contention. Developers, especially those whose business depends on unique algorithms, question whether such controls are fully reliable, fearing that a breach or misconfiguration could cause an irreversible loss of their competitive differentiation. This skepticism is fueled by the opacity of some AI training processes and by the potential for unintended data leakage, even from anonymized or aggregated datasets.

Moving forward, the industry faces a challenge to establish transparent, auditable, and legally robust frameworks for data usage in AI training. Platforms must not only offer privacy settings but also clearly articulate their enforcement mechanisms and provide assurances that proprietary information remains secure. Failure to adequately address these concerns could lead to a significant exodus of sensitive projects from public platforms, potentially fragmenting the developer ecosystem and hindering collaborative innovation. The resolution of this trust deficit will be crucial for the continued growth of both AI development and secure software collaboration.

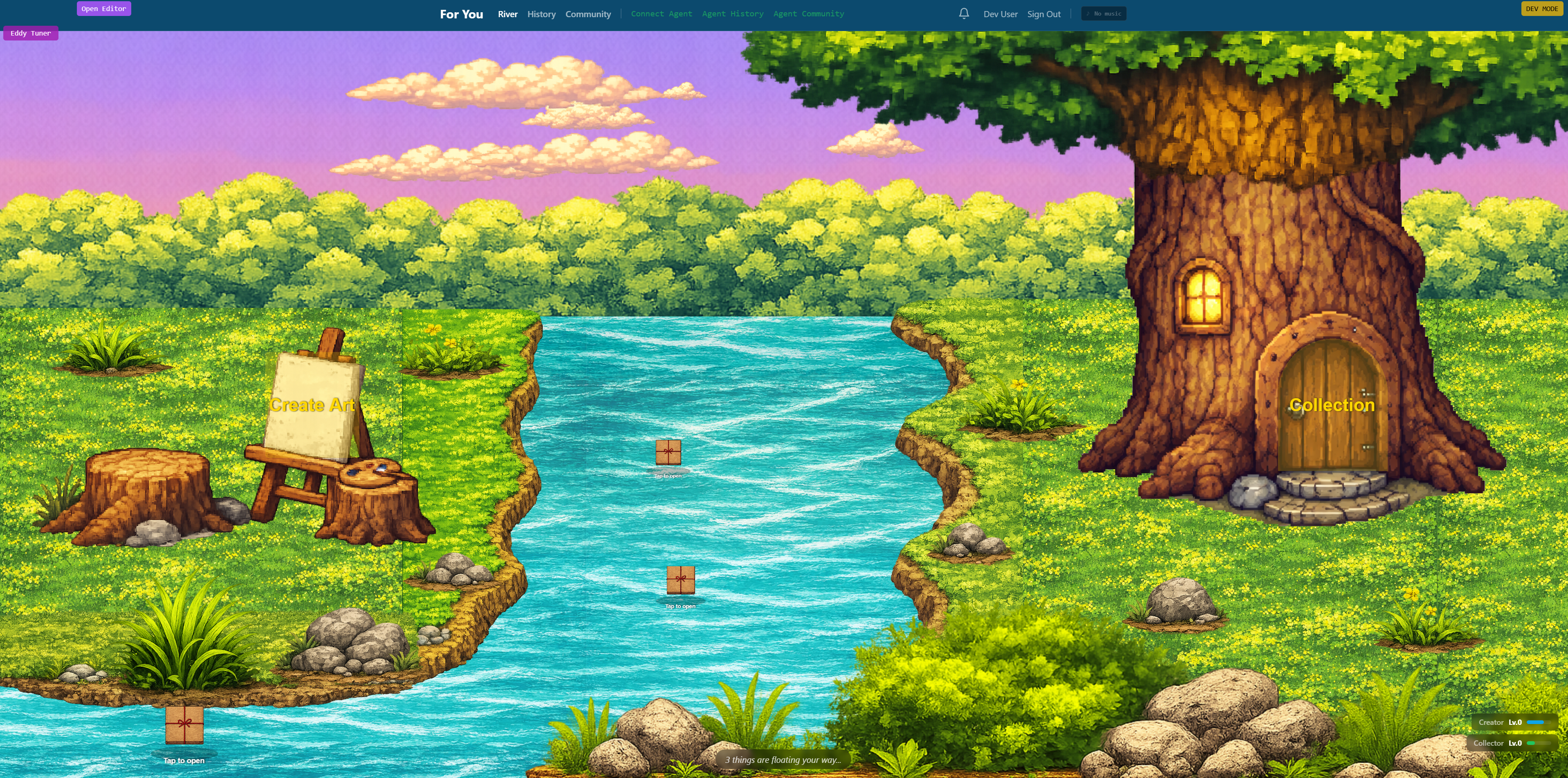

Visual Intelligence

flowchart LR
    A["Developer Code"] --> B{"Store on GitHub?"}
    B -- Yes --> C["GitHub Repository"]
    C --> D{"LLM Training Opt-Out?"}
    D -- Yes --> E["Code Protected"]
    D -- No --> F["Code Used for LLM"]
    F --> G["Competitive Edge Lost"]

Auto-generated diagram · AI-interpreted flow

Impact Assessment

The trust placed in code hosting platforms like GitHub directly impacts intellectual property security and competitive advantage for developers and companies. Ambiguity or perceived risk regarding LLM training policies can deter innovation and lead to platform migration.

Key Details

- A developer expresses concern about GitHub using proprietary code for LLM training.
- The developer relies on unique algorithms for competitive advantage.
- GitHub offers a setting to allow or disallow LLM training use.

Optimistic Outlook

Clearer communication and robust, auditable controls from platforms could build greater developer trust, fostering a more secure environment for proprietary code. This could lead to industry-wide best practices for data privacy in AI training.

Pessimistic Outlook

If trust erodes, developers may move sensitive projects off public platforms, fragmenting the developer ecosystem and hindering collaborative innovation. This could also lead to legal challenges regarding intellectual property rights and data usage.
