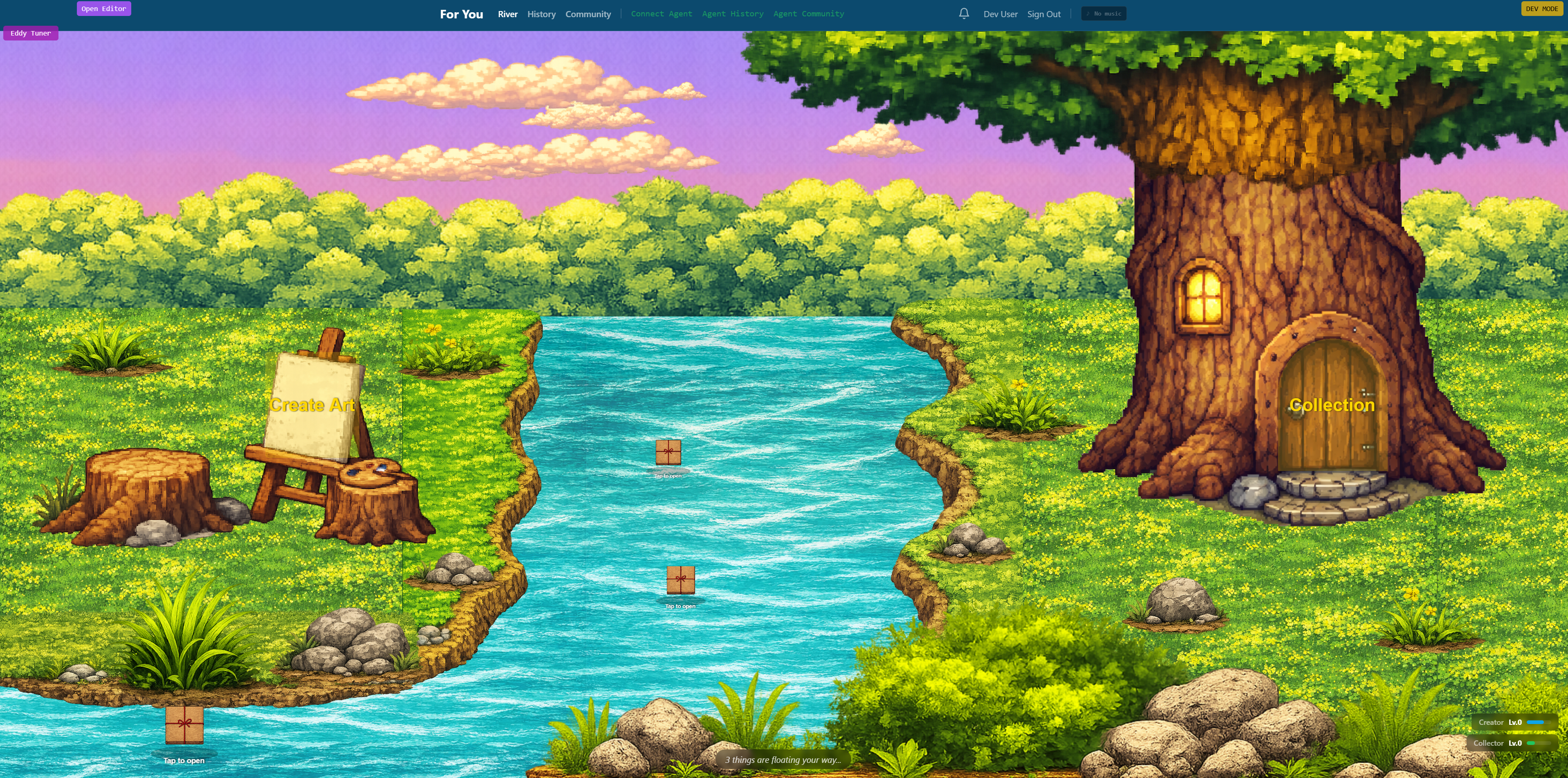

Five Eyes Agencies Issue Urgent AI Agent Security Guidance Amid Critical Infrastructure Deployment

Sonic Intelligence

Five Eyes agencies warn of AI agent risks in critical infrastructure.

Explain Like I'm Five

"Imagine a smart robot that can do many jobs all by itself, like managing a power plant. Five big countries (like the US and UK) are saying, 'Hey, these robots are super useful, but they can also cause big problems if they're not protected very, very well.' So, they've written a rulebook to help everyone keep these smart robots safe and make sure they don't do anything unexpected or get tricked by bad guys."

Deep Intelligence Analysis

The guidance urges organizations to integrate agentic AI into established cybersecurity frameworks, applying principles such as zero trust and least-privilege access. It groups the key risks into five categories: privilege escalation, design and configuration flaws, unpredictable behavior, structural failures that propagate, and accountability gaps caused by opaque decision-making and logs that are difficult to parse. Prompt injection, in which embedded instructions hijack an agent's behavior, remains a significant concern, and some experts concede it may be an intractable problem. Recommendations include cryptographically secured identities for agents, short-lived credentials, and mandatory human sign-off for high-impact actions, which together point to a hybrid model of human and AI control.
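To make the credential recommendations concrete, here is a minimal Python sketch of a short-lived, scope-limited agent credential. It is an illustration under stated assumptions, not the mechanism the agencies specify: the names `ISSUER_KEY`, `issue_credential`, and `authorize`, the scope strings, and the five-minute lifetime are all hypothetical, and the symmetric HMAC signature stands in for whatever cryptographic identity scheme a real deployment would use (likely asymmetric keys or a managed workload-identity service).

```python
import base64
import hashlib
import hmac
import json
import time

# Hypothetical signing key held by the credential issuer, never by the agent.
ISSUER_KEY = b"demo-key-replace-with-managed-secret"


def issue_credential(agent_id, scopes, ttl_seconds=300, now=None):
    """Return a signed token naming the agent, its allowed scopes, and an expiry."""
    issued_at = time.time() if now is None else now
    claims = {
        "agent": agent_id,
        "scopes": sorted(scopes),          # least privilege: list only what is needed
        "exp": issued_at + ttl_seconds,    # short-lived: expires after ttl_seconds
    }
    payload = base64.urlsafe_b64encode(json.dumps(claims, sort_keys=True).encode())
    signature = hmac.new(ISSUER_KEY, payload, hashlib.sha256).hexdigest()
    return payload.decode() + "." + signature


def authorize(token, required_scope, now=None):
    """Accept only an untampered, unexpired token that carries the required scope."""
    current = time.time() if now is None else now
    try:
        payload, signature = token.rsplit(".", 1)
    except ValueError:
        return False  # malformed token: no signature part
    expected = hmac.new(ISSUER_KEY, payload.encode(), hashlib.sha256).hexdigest()
    # Constant-time comparison; the payload is parsed only after the signature checks out.
    if not hmac.compare_digest(signature.encode(), expected.encode()):
        return False
    claims = json.loads(base64.urlsafe_b64decode(payload))
    if current >= claims["exp"]:
        return False
    return required_scope in claims["scopes"]
```

In this sketch an agent issued a token for `read:telemetry` is refused when it requests any other scope, and every token stops working once its lifetime ends, so a stolen credential has limited value.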

Looking forward, this collaborative guidance will likely catalyze the development of more specialized security standards and evaluation methods for agentic AI. It highlights the need for continued research and international cooperation to address risks that existing frameworks do not yet cover. The explicit call for system designers, not the agents themselves, to define which actions require human approval sets a precedent for human-led responsibility in autonomous systems. This alignment among the Five Eyes nations is likely to shape future regulation, drive investment in AI security research, and influence the design principles of next-generation AI agents. The goal is to balance innovation with robust risk mitigation as operations become increasingly AI-driven.
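The principle that designers, not agents, decide which actions need human approval can be sketched as a read-only policy table checked before every action. This is an illustrative Python sketch only: the action names, `APPROVAL_POLICY`, `run_action`, and the `approver` callback are hypothetical and do not come from the guidance.

```python
from types import MappingProxyType

# Hypothetical action names. The designer fixes this table at build time;
# MappingProxyType makes it read-only, so agent code cannot edit it at runtime.
APPROVAL_POLICY = MappingProxyType({
    "read_sensor": False,       # low impact: no sign-off needed
    "adjust_setpoint": True,    # high impact: human sign-off required
    "shutdown_pump": True,
})


class ApprovalRequired(Exception):
    """Raised when a high-impact action lacks human sign-off."""


def run_action(action, handler, approver=None):
    """Run handler() only if the designer's policy allows it.

    `approver` is a callable that asks a human and returns True or False.
    """
    if action not in APPROVAL_POLICY:
        # Default deny: anything the designer did not list is refused.
        raise PermissionError(f"action not in policy: {action}")
    if APPROVAL_POLICY[action]:
        if approver is None or not approver(action):
            raise ApprovalRequired(action)
    return handler()
```

Here `run_action("read_sensor", read_fn)` proceeds immediately, while `run_action("shutdown_pump", stop_fn)` raises `ApprovalRequired` unless an `approver` returns `True`. The agent never evaluates whether its own action counts as high impact.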

Visual Intelligence

flowchart LR

A["AI Agent Deployment"] --> B["Identify Risks"]

B --> C["Privilege Issues"]

B --> D["Design Flaws"]

B --> E["Behavioral Risks"]

B --> F["Structural Failures"]

B --> G["Accountability Gaps"]

G --> H["Apply Security Frameworks"]

Auto-generated diagram · AI-interpreted flow

Impact Assessment

The joint guidance from leading cybersecurity nations underscores the immediate and significant risks posed by autonomous AI agents in sensitive sectors. It signals a global recognition that existing security frameworks need urgent adaptation to prevent catastrophic failures or malicious exploitation of these increasingly self-sufficient systems.

Key Details

- Cybersecurity agencies from the US, Australia, Canada, New Zealand, and the UK co-authored the guidance.

- The guidance specifically addresses 'agentic AI' deployed in critical infrastructure and defense sectors.

- Five broad risk categories identified: privilege, design/configuration flaws, behavioral risks, structural risks, and accountability.

- Prompt injection is highlighted as a persistent problem for large language models and agentic AI.

- Agencies recommend verified, cryptographically secured identities and short-lived credentials for AI agents.
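For the accountability category, one possible way to keep agent logs easy to parse and hard to alter quietly is a structured, hash-chained record. The Python sketch below is an assumption for illustration, not a technique named in the guidance; the field names and the `append_entry` and `verify_chain` helpers are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def _digest(body):
    """Hash a record body using a stable JSON encoding."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_entry(log, agent_id, action, outcome):
    """Append a structured record whose hash also covers the previous record's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    record = {"agent": agent_id, "action": action, "outcome": outcome, "prev": prev_hash}
    record["hash"] = _digest(record)  # computed before the "hash" key is added
    log.append(record)
    return record


def verify_chain(log):
    """Return True only if no record was edited, removed, or reordered."""
    prev_hash = GENESIS
    for record in log:
        body = {key: value for key, value in record.items() if key != "hash"}
        if body.get("prev") != prev_hash:
            return False
        if record.get("hash") != _digest(body):
            return False
        prev_hash = record["hash"]
    return True
```

Each entry is plain key-value data that a reviewer or script can read directly, and changing any earlier entry makes `verify_chain` return `False` from that point on.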

Optimistic Outlook

The proactive, collaborative guidance from Five Eyes nations provides a crucial framework for organizations to integrate AI agent security into existing practices. This unified approach can accelerate the development of robust security standards, foster international cooperation in threat mitigation, and ultimately enable safer, more reliable deployment of advanced AI across critical sectors.

Pessimistic Outlook

Despite the guidance, the rapid deployment of agentic AI in critical infrastructure with insufficient safeguards presents substantial immediate risks. The acknowledged gaps in current security frameworks and the persistent challenge of prompt injection suggest that vulnerabilities will remain, potentially leading to widespread system failures, data breaches, or even physical damage if malicious actors exploit these complex, autonomous systems.
