AlphaCNOT: Quantum Gate Optimization with Model-Based Reinforcement Learning

Sonic Intelligence

The Gist

AlphaCNOT reduces quantum CNOT gate counts by up to 32% using model-based RL.

Explain Like I'm Five

"Imagine you have a special computer that uses tiny quantum bits. To make it work, you need to do a lot of specific steps called 'gates.' One important step is the CNOT gate. This new program, AlphaCNOT, is like a smart helper that figures out how to do these CNOT steps using far fewer moves, making the quantum computer work better and faster, like finding a shortcut in a maze."

Deep Intelligence Analysis

AlphaCNOT is a model-based reinforcement learning (RL) framework that uses Monte Carlo Tree Search (MCTS) to minimize the number of CNOT gates in a quantum circuit. It achieves up to a 32% reduction in CNOT gate count compared to the established Patel-Markov-Hayes (PMH) baseline for linear reversible synthesis. It also reports consistent gate count reductions across various topologies with up to 8 qubits, outperforming state-of-the-art RL-based solutions in constrained scenarios. Because the model-based strategy evaluates future trajectories before committing to a gate, it has an advantage over purely heuristic or reactive RL methods and offers a path to more optimized quantum programs.
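To make "linear reversible synthesis" concrete: a circuit built only from CNOT gates acts on n qubits as an invertible n×n binary matrix, and each CNOT(control, target) corresponds to adding one row to another modulo 2. The sketch below synthesizes a CNOT sequence with plain Gauss-Jordan elimination. This is a simplified stand-in chosen for brevity, not the PMH algorithm and not AlphaCNOT; the function names and the example matrix are illustrative assumptions.

```python
def apply_cnot(rows, control, target):
    """CNOT(control, target): XOR row `control` into row `target` (mod-2 row addition)."""
    rows[target] = [a ^ b for a, b in zip(rows[target], rows[control])]


def gaussian_cnot_synthesis(matrix):
    """Reduce an invertible binary matrix to the identity with row additions.

    Returns the list of (control, target) row operations used. Applying the
    same list in reverse order to the identity rebuilds the input matrix,
    so the reversed list is a CNOT circuit for it.
    """
    rows = [list(r) for r in matrix]
    n = len(rows)
    gates = []
    for col in range(n):
        # Ensure a 1 on the diagonal by adding a lower row that has a 1 here.
        if rows[col][col] == 0:
            pivot = next(r for r in range(col + 1, n) if rows[r][col] == 1)
            apply_cnot(rows, pivot, col)
            gates.append((pivot, col))
        # Clear every other 1 in this column.
        for r in range(n):
            if r != col and rows[r][col] == 1:
                apply_cnot(rows, col, r)
                gates.append((col, r))
    return gates


def circuit_matrix(gates, n):
    """Binary matrix obtained by applying `gates` in order to the identity."""
    rows = [[1 if i == j else 0 for j in range(n)] for i in range(n)]
    for control, target in gates:
        apply_cnot(rows, control, target)
    return rows


# Hypothetical 3-qubit example matrix (not taken from the paper).
example = [[1, 1, 0],
           [0, 1, 1],
           [0, 0, 1]]
ops = gaussian_cnot_synthesis(example)
circuit = list(reversed(ops))
print(len(circuit), "CNOTs:", circuit)
```

The gate count returned by a routine like this is the quantity that a synthesis method tries to minimize; different algorithms can produce different counts for the same matrix.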

The implications for quantum computing are substantial. Improved circuit optimization directly translates to enhanced quantum device performance and reduced error rates, accelerating the transition towards a "quantum utility" era where practical quantum applications become feasible. The methodology's generalizability to other circuit optimization tasks, such as Clifford minimization, suggests a broader paradigm shift in how quantum algorithms are designed and executed, potentially unlocking new frontiers in materials science, drug discovery, and complex system simulations. However, the scalability of these gains to larger qubit counts and more complex architectures remains a critical area for future research and validation.

Visual Intelligence

flowchart LR
    A["Quantum Circuit"] --> B["CNOT Minimization"]
    B --> C["AlphaCNOT RL MCTS"]
    C --> D["Optimized CNOT Sequence"]
    D --> E["Reduced Gate Count"]
    C -- "Compared to" --> F["PMH Baseline"]
    E --> G["Quantum Utility Era"]

Auto-generated diagram · AI-interpreted flow

Impact Assessment

Quantum circuit optimization is critical for current noisy quantum devices, where error propagation scales with operation count. Reducing CNOT gates directly improves quantum program efficiency and reliability, accelerating the transition to practical quantum computing.
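A rough way to see why gate count matters is to assume each CNOT fails independently with a fixed probability, so the chance that a circuit runs without any CNOT error shrinks geometrically with the number of gates. The sketch below uses that simplified model. The 1% error rate and the 100-gate baseline are hypothetical numbers chosen for illustration, and only the 32% figure comes from the reported results.

```python
def circuit_success_probability(num_cnots, error_per_cnot):
    """Probability that no CNOT fails, assuming independent, identical errors."""
    return (1.0 - error_per_cnot) ** num_cnots


# Hypothetical inputs: a 100-CNOT baseline circuit and a 1% error per CNOT.
baseline_cnots = 100
reduction_percent = 32  # best-case reduction reported for AlphaCNOT
reduced_cnots = baseline_cnots * (100 - reduction_percent) // 100

p_baseline = circuit_success_probability(baseline_cnots, 0.01)
p_reduced = circuit_success_probability(reduced_cnots, 0.01)

print(f"{baseline_cnots} CNOTs: success probability {p_baseline:.3f}")
print(f"{reduced_cnots} CNOTs: success probability {p_reduced:.3f}")
```

Under these assumptions the success probability rises from roughly 0.37 to roughly 0.50. Real devices have correlated and gate-dependent errors, so this is only an order-of-magnitude intuition.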

Read Full Story on arXiv cs.AI

Key Details

- AlphaCNOT is a reinforcement learning framework utilizing Monte Carlo Tree Search (MCTS).

- It achieves up to a 32% reduction in CNOT gate count compared to the PMH baseline for linear reversible synthesis.

- The method reports consistent gate count reduction across various topologies with up to 8 qubits.

- AlphaCNOT is model-based, leveraging lookahead search to identify efficient CNOT sequences.
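The value of searching over CNOT sequences, and the effect of topology, can be seen on a toy scale. The sketch below runs an exhaustive breadth-first search for the shortest CNOT sequence that realizes a small binary matrix, optionally restricted to the qubit pairs a device allows. It is a brute-force illustration that only works for a handful of qubits; it is not MCTS and not AlphaCNOT's method, and the function name, matrix encoding, and examples are assumptions made for this sketch.

```python
from collections import deque


def min_cnot_count(target_rows, allowed_pairs=None):
    """Fewest CNOTs needed to build a linear reversible map, by exhaustive BFS.

    `target_rows` holds one integer bitmask per row: bit j of row i is set
    when output i depends on input j. `allowed_pairs` lists the permitted
    (control, target) pairs; None means all-to-all connectivity.
    Returns None if the target is unreachable with the allowed pairs.
    """
    n = len(target_rows)
    if allowed_pairs is None:
        allowed_pairs = [(c, t) for c in range(n) for t in range(n) if c != t]
    start = tuple(1 << i for i in range(n))  # identity matrix
    goal = tuple(target_rows)
    depth = {start: 0}
    queue = deque([start])
    while queue:
        state = queue.popleft()
        if state == goal:
            return depth[state]
        for control, target in allowed_pairs:
            nxt = list(state)
            nxt[target] ^= nxt[control]  # CNOT = add control row to target row
            nxt = tuple(nxt)
            if nxt not in depth:
                depth[nxt] = depth[state] + 1
                queue.append(nxt)
    return None


# Hypothetical target: a single CNOT from qubit 2 onto qubit 0.
cnot_2_to_0 = (0b101, 0b010, 0b100)
# Line topology 0 - 1 - 2: only neighbouring qubits may interact.
line = [(0, 1), (1, 0), (1, 2), (2, 1)]

print("all-to-all:", min_cnot_count(cnot_2_to_0))
print("line:      ", min_cnot_count(cnot_2_to_0, line))
```

With all-to-all connectivity the target needs 1 CNOT, while on the three-qubit line it needs 4, which is why results are reported per topology. Exhaustive search like this stops being feasible after a few qubits, which is the gap that guided search methods aim to fill.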

Optimistic Outlook

This method's significant gate count reduction promises more robust and complex quantum algorithms, potentially unlocking new applications in fields like materials science and drug discovery. The combination of RL with search-based strategies could generalize to other optimization tasks, fostering a "quantum utility" era.

Pessimistic Outlook

While promising, the current results are demonstrated on up to 8 qubits, which is still a relatively small scale. Scaling this approach to larger, more complex quantum systems without prohibitive computational cost remains a significant challenge, potentially limiting immediate real-world impact.
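The scaling concern can be quantified for CNOT-only circuits. The distinct linear reversible maps on n qubits are exactly the invertible n×n binary matrices, whose count is the product of (2^n − 2^k) for k from 0 to n−1, and each search step can choose among n(n−1) ordered qubit pairs when connectivity is unrestricted. The sketch below evaluates both quantities; the function names are illustrative, and the numbers describe the size of the search problem rather than the cost of any particular method.

```python
def num_invertible_binary_matrices(n):
    """Number of invertible n x n matrices over GF(2): prod_{k<n} (2^n - 2^k)."""
    total = 1
    for k in range(n):
        total *= (1 << n) - (1 << k)
    return total


def num_cnot_actions(n):
    """Ordered (control, target) pairs available with all-to-all connectivity."""
    return n * (n - 1)


for n in (2, 3, 4, 8):
    states = num_invertible_binary_matrices(n)
    print(f"n={n}: {states:.3e} reachable matrices, {num_cnot_actions(n)} actions per step")
```

At 8 qubits the state count is already on the order of 10^18, and it grows roughly as 2^(n²), which gives a sense of why extending learned search to larger registers is nontrivial.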

The Signal, Not

the Noise|

Join AI leaders weekly.

Unsubscribe anytime. No spam, ever.

Generated Related Signals

Safety Shields Enable AI for Critical Power Grids

New AI framework ensures safety for power grid operations.

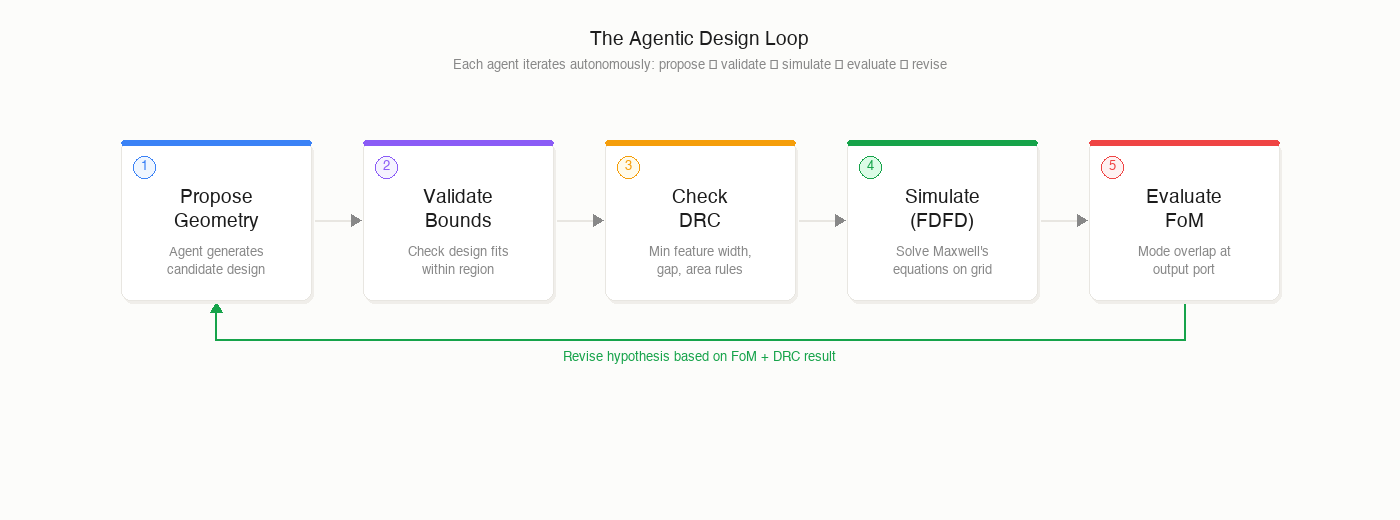

AI Agents Autonomously Design Photonic Chips, Revolutionizing Optical Computing

AI agents successfully designed photonic components autonomously, meeting performance and fabrication criteria.

AI Revolutionizes Academic Peer Review at Scale

AI reviews outperform humans in large-scale academic pilot.

Knowledge Density, Not Task Format, Drives MLLM Scaling

Knowledge density, not task diversity, is key to MLLM scaling.

Lossless Prompt Compression Reduces LLM Costs by Up to 80%

Dictionary-encoding enables lossless prompt compression, reducing LLM costs by up to 80% without fine-tuning.

Weight Patching Advances Mechanistic Interpretability in LLMs

Weight Patching localizes LLM capabilities to specific parameters.