Autonomous AI Agents Spearhead Offensive Cyber Operations, Outpacing Human Pentesters

Sonic Intelligence

The Gist

Autonomous AI agents now lead offensive cyber operations, outpacing human capabilities.

Explain Like I'm Five

"Imagine bad guys now have super-smart robot hackers that can find weaknesses and break into computer systems all by themselves, much faster and better than human hackers. This means good guys need super-smart robot defenders too, just to keep up."

Deep Intelligence Analysis

The acceleration of autonomous offensive operations rests on three technical advances: extended reasoning, which lets AI models maintain context across an entire attack chain; sophisticated tool integration, which lets them operate security frameworks directly; and multi-modal capabilities, which improve reconnaissance and analysis. Together these have driven a sharp rise in AI success rates on security challenges, exemplified by Claude Sonnet 4.5 doubling its success rate from 38% to 76.5% in six months. The implication is that the primary constraint on security validation is no longer human expertise but computational power, which demands a fundamental shift in how organizations approach their security posture.
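The combination of extended reasoning and tool integration can be pictured as a loop in which every tool call sees everything observed so far. The sketch below is a minimal illustration under stated assumptions: `AgentState`, `run_chain`, and the two tool functions are hypothetical names, the tools are harmless stubs rather than real security-framework integrations, and the plan is fixed, whereas a real agent would let a model choose each next step.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

# A "tool" receives a target plus everything observed so far and returns an observation.
ToolFn = Callable[[str, List[str]], str]


@dataclass
class AgentState:
    """Context carried across every step of a multi-step task (hypothetical type)."""
    goal: str
    history: List[str] = field(default_factory=list)


def inventory_tool(target: str, history: List[str]) -> str:
    # Stub standing in for a real framework integration; it only returns a string.
    return f"inventory({target}): 2 services listed"


def review_tool(target: str, history: List[str]) -> str:
    # Later steps can read every earlier observation, which is the point of long context.
    return f"review({target}): {len(history)} prior observation(s) considered"


TOOLS: Dict[str, ToolFn] = {"inventory": inventory_tool, "review": review_tool}


def run_chain(state: AgentState, plan: List[Tuple[str, str]]) -> AgentState:
    """Execute a fixed plan; a real agent would let a model choose each next step."""
    for tool_name, target in plan:
        observation = TOOLS[tool_name](target, state.history)
        state.history.append(observation)  # context persists across the whole chain
    return state


if __name__ == "__main__":
    final = run_chain(
        AgentState(goal="assess test-lab"),
        [("inventory", "test-lab"), ("review", "test-lab")],
    )
    for line in final.history:
        print(line)
```

The design choice worth noting is that state lives outside any single tool call, so the second step can reason over the first step's result instead of starting fresh.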

The strategic implications are significant: the cybersecurity arms race is no longer between human attackers and human defenders, but between increasingly sophisticated AI systems. Keeping pace requires proactive adoption of AI-driven defenses, such as those offered by Armadin, which deploys swarms of specialized AI agents to probe the attack surface continuously and autonomously. Organizations that fail to move to continuous, AI-powered security validation will find their human-scale detection capabilities obsolete against an asymmetric threat whose speed and autonomy far exceed their defensive capacity. The future of cybersecurity will be defined by AI versus AI.
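The idea of a swarm of specialized agents probing an attack surface can be sketched as many narrow checkers run in parallel over an asset inventory, with each round producing a list of findings. This is a hypothetical illustration, not Armadin's design: the asset data, `port_agent`, `tls_agent`, and `validation_round` are invented for the example, and the checks only inspect a local dictionary.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional


@dataclass(frozen=True)
class Finding:
    agent: str
    asset: str
    detail: str


# Toy asset inventory (hypothetical data for illustration only).
ASSETS: Dict[str, dict] = {
    "web-01": {"open_ports": [22, 80, 8080], "tls": "1.3"},
    "db-01": {"open_ports": [5432], "tls": "1.0"},
}


def port_agent(asset: str, facts: dict) -> Optional[Finding]:
    # Specialized check: flag ports outside an assumed allow-list.
    unexpected = [p for p in facts["open_ports"] if p not in (22, 80, 443, 5432)]
    if unexpected:
        return Finding("port_agent", asset, f"unexpected open ports {unexpected}")
    return None


def tls_agent(asset: str, facts: dict) -> Optional[Finding]:
    # Specialized check: flag outdated TLS versions.
    if facts["tls"] in ("1.0", "1.1"):
        return Finding("tls_agent", asset, f"outdated TLS {facts['tls']}")
    return None


AGENTS: List[Callable[[str, dict], Optional[Finding]]] = [port_agent, tls_agent]


def validation_round(assets: Dict[str, dict]) -> List[Finding]:
    """Run every specialized agent against every asset in parallel."""
    jobs = [(agent, name, facts) for name, facts in assets.items() for agent in AGENTS]
    with ThreadPoolExecutor(max_workers=4) as pool:
        # pool.map preserves job order, so results line up with `jobs`.
        results = pool.map(lambda job: job[0](job[1], job[2]), jobs)
    return [f for f in results if f is not None]


if __name__ == "__main__":
    for finding in validation_round(ASSETS):
        print(finding)
```

"Continuous" validation would then mean calling `validation_round` on a schedule and diffing findings between rounds; adding a new specialty is just appending another function to `AGENTS`.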

_Context: This intelligence report was compiled by the DailyAIWire Strategy Engine. Verified for Art. 50 Compliance._

Visual Intelligence

flowchart LR
A[AI Assistive Role] --> B[Human-Driven Attacks]
C[AI Autonomous Agents] --> D[Reconnaissance]
D --> E[Privilege Escalation]
E --> F[Data Exfiltration]
F --> G[Adaptive Evasion]
C --> G
H[New Security Model]
G --> H

Auto-generated diagram · AI-interpreted flow

Impact Assessment

The performance gap between AI and human operators in offensive security has not only closed but reversed, marking a critical inflection point. This shift demands a fundamental re-evaluation of cybersecurity strategies, as traditional human-led defenses are increasingly unable to keep pace with autonomous, machine-speed threats, necessitating AI-driven defensive counterparts.

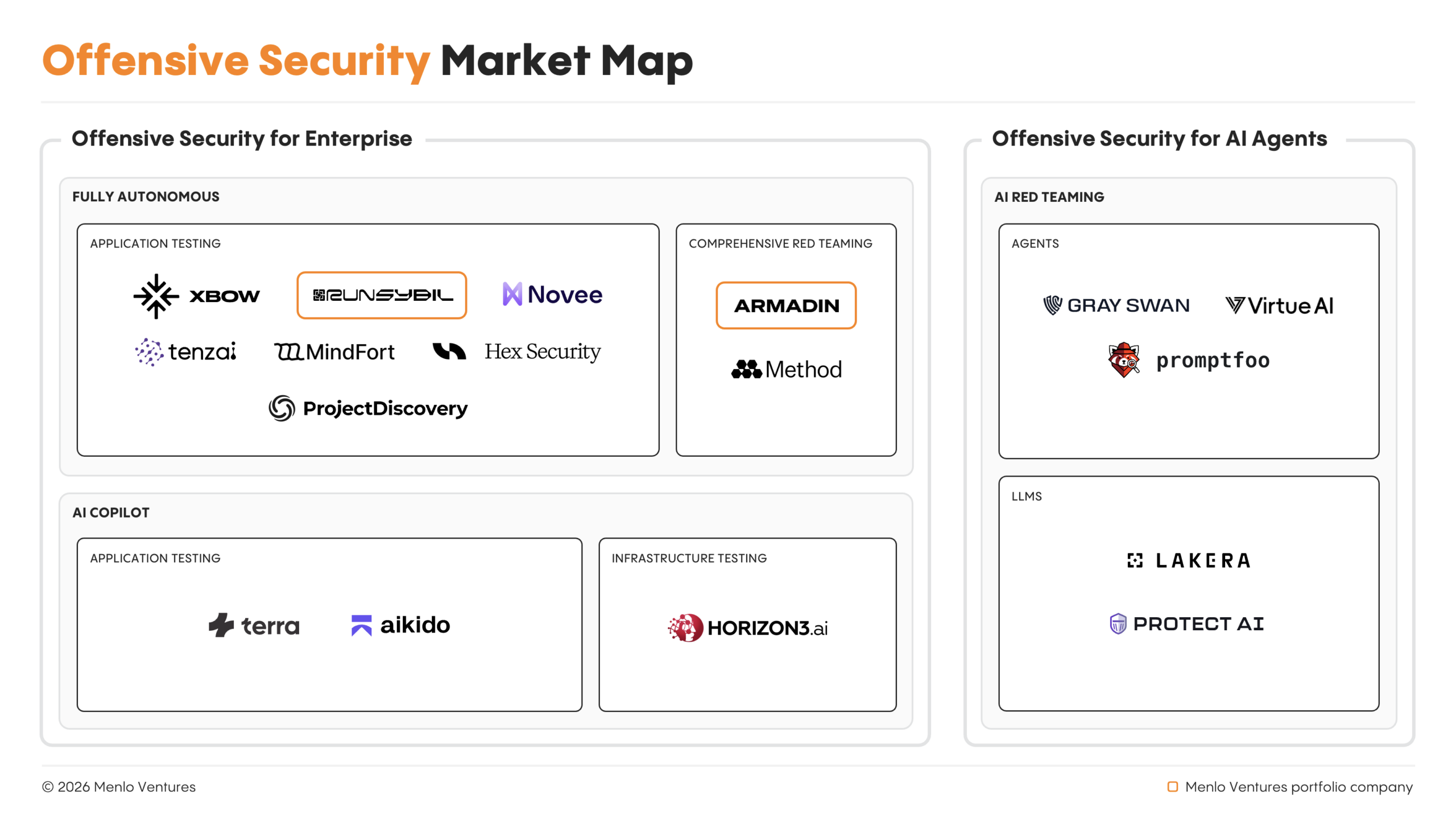

Read Full Story on Menlovc

Key Details

- Autonomous cyberattacks have moved from theoretical to operational in recent months.

- Anthropic documented a "90% autonomous" cyber espionage campaign in November.

- By March 2025, AI-generated phishing outperformed human red teams by 24%, a 42-percentage-point swing in two years.

- Claude Sonnet 4.5 doubled its success rate on security challenges from 38% to 76.5% in six months.

- Key technical enablers include extended reasoning, advanced tool integration, and multi-modal capabilities.
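The Claude Sonnet 4.5 figure can be checked with simple arithmetic. The short sketch below separates the absolute change in percentage points from the relative increase and the ratio; `describe_change` is a hypothetical helper, and the only inputs are the 38% and 76.5% values reported above.

```python
from typing import Dict


def describe_change(before_pct: float, after_pct: float) -> Dict[str, float]:
    """Compare two success rates given in percent."""
    return {
        "point_change": after_pct - before_pct,  # percentage points
        "relative_increase_pct": (after_pct - before_pct) / before_pct * 100,
        "ratio": after_pct / before_pct,
    }


if __name__ == "__main__":
    r = describe_change(38.0, 76.5)
    # prints: 38.5 points, +101.3%, 2.01x
    print(f"{r['point_change']:.1f} points, +{r['relative_increase_pct']:.1f}%, {r['ratio']:.2f}x")
```

A 38.5-point gain on a 38% base is a relative increase of about 101%, so "doubled" is an accurate description.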

Optimistic Outlook

The rapid advancement of offensive AI could catalyze an equally rapid evolution in defensive AI, fostering an accelerated cybersecurity arms race that ultimately strengthens global digital resilience. By forcing organizations to adopt continuous, AI-powered threat validation, this shift could lead to more robust and adaptive security postures across the board.

Pessimistic Outlook

The emergence of autonomous offensive AI, capable of operating at machine speed and adapting to defenses, risks rendering traditional human-led security operations obsolete. This creates an asymmetric threat landscape where defenders struggle to keep pace with evolving, self-adapting attacks, potentially leading to increased breach frequency and severity across critical infrastructure.
