AI System Authors Peer-Reviewed Scientific Paper

Sonic Intelligence

The Gist

An AI system independently authored a scientific paper that passed peer review for a workshop at the 2025 International Conference on Learning Representations (ICLR).

Explain Like I'm Five

"Imagine a robot that can read lots of books, think of new ideas for experiments, do the experiments, and then write a report about them, just like a real scientist. One of its reports even got approved by other scientists!"

Deep Intelligence Analysis

The AI Scientist operates through a modular pipeline: prompted with a general topic, it surveys the literature, generates novel hypotheses, and then plans and executes experiments. It relies on existing foundation models, such as Anthropic’s Claude Sonnet or OpenAI’s GPT-4o, for its cognitive functions; the team's innovation lies in orchestrating these models into a coherent scientific workflow. The system also incorporates an internal peer review mechanism to self-correct. The acceptance of one of its three submitted papers to a workshop at the 2025 International Conference on Learning Representations (ICLR) suggests it can meet a baseline of academic rigor, even though the bar for that specific workshop was acknowledged to be lower than that for a main conference publication. Issues such as hallucinated references and duplicated figures highlight current limitations in execution and methodological precision.
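The orchestration idea described above can be illustrated with a minimal Python sketch. It is not the AI Scientist's actual code: the stage names are taken from this article's description of the workflow, while `ResearchState`, `make_stage`, and `run_pipeline` are hypothetical names introduced only for illustration. Each stage is a placeholder that records that it ran; a real system would presumably call a foundation model at that point.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class ResearchState:
    """Hypothetical container passed from stage to stage."""
    topic: str
    log: List[str] = field(default_factory=list)


# A stage takes the current state and returns the updated state.
Stage = Callable[[ResearchState], ResearchState]

# Stage order as described in the article (assumed, not the real code).
STAGE_NAMES = [
    "survey_literature",
    "generate_hypotheses",
    "evaluate_ideas",
    "plan_experiments",
    "execute_experiments",
    "analyze_data",
    "write_paper",
    "internal_peer_review",
]


def make_stage(name: str) -> Stage:
    """Build a placeholder stage that only records its own name.

    Assumption: in a real pipeline this is where a foundation model
    would be prompted and its output stored on the state.
    """
    def stage(state: ResearchState) -> ResearchState:
        state.log.append(name)
        return state
    return stage


def run_pipeline(topic: str, stages: List[Stage]) -> ResearchState:
    """Run every stage in order, threading the state through each one."""
    state = ResearchState(topic=topic)
    for stage in stages:
        state = stage(state)
    return state


PIPELINE = [make_stage(name) for name in STAGE_NAMES]

if __name__ == "__main__":
    result = run_pipeline("a general research topic", PIPELINE)
    print(" -> ".join(result.log))
```

Keeping each stage as an independent function with a shared state object is one plausible way to make the workflow modular: a stage can be swapped or re-run (for example, after internal review flags a problem) without touching the others.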

The implications of autonomous AI scientists are profound, extending beyond mere efficiency gains. This development could dramatically accelerate the pace of scientific progress by enabling parallel, high-throughput research across numerous domains, potentially leading to breakthroughs in areas currently limited by human cognitive capacity and time. However, it also introduces critical questions regarding intellectual property, accountability for errors, and the potential for a deluge of AI-generated content that could strain peer review systems and dilute the overall quality of published research. The scientific community must now grapple with establishing new frameworks for collaboration, validation, and ethical oversight as AI transitions from a powerful instrument to an independent participant in the pursuit of knowledge.

This analysis was generated by an AI model based on the provided source material.

_Context: This intelligence report was compiled by the DailyAIWire Strategy Engine. Verified for Art. 50 Compliance._

Visual Intelligence

flowchart LR
    A["General Topic Prompt"] --> B["Survey Literature"]
    B --> C["Generate Hypotheses"]
    C --> D["Evaluate Ideas"]
    D --> E["Plan Experiments"]
    E --> F["Execute Experiments"]
    F --> G["Analyze Data"]
    G --> H["Write Paper"]
    H --> I["Internal Peer Review"]

Auto-generated diagram · AI-interpreted flow

Impact Assessment

This marks a significant milestone where AI moves beyond assistance to autonomous scientific inquiry, challenging traditional human-centric models of research and discovery. It opens new avenues for accelerating scientific progress but also raises questions about rigor and intellectual property.

Read Full Story on Scientific American

Key Details

- The "AI Scientist" system was developed by Jeff Clune and colleagues.

- It wrote a paper without human involvement.

- The paper passed peer review for a workshop at the 2025 International Conference on Learning Representations (ICLR).

- The system uses existing foundation models like Anthropic’s Claude Sonnet or OpenAI’s GPT-4o.

- One of three AI-generated papers submitted to the ICBINB workshop at 2025 ICLR was accepted.

Optimistic Outlook

Autonomous AI scientists could dramatically accelerate the pace of discovery by generating and testing hypotheses at scale, freeing human researchers for higher-level conceptual work. This could lead to breakthroughs in complex fields like medicine or materials science, tackling problems too vast for human teams alone.

Pessimistic Outlook

Although the acceptance is a breakthrough, the AI-generated paper was described as "mediocre" and contained hallucinated references and duplicated figures. Over-reliance on AI for scientific authorship without robust human oversight could lead to a proliferation of low-quality or flawed research, undermining scientific integrity and trust.


Generated Related Signals

AI's Philosophical Blind Spot: Data Reveals Struggle with Unresolved Human Knowledge

AI models exhibit higher uncertainty when processing philosophical concepts lacking a clear consensus.

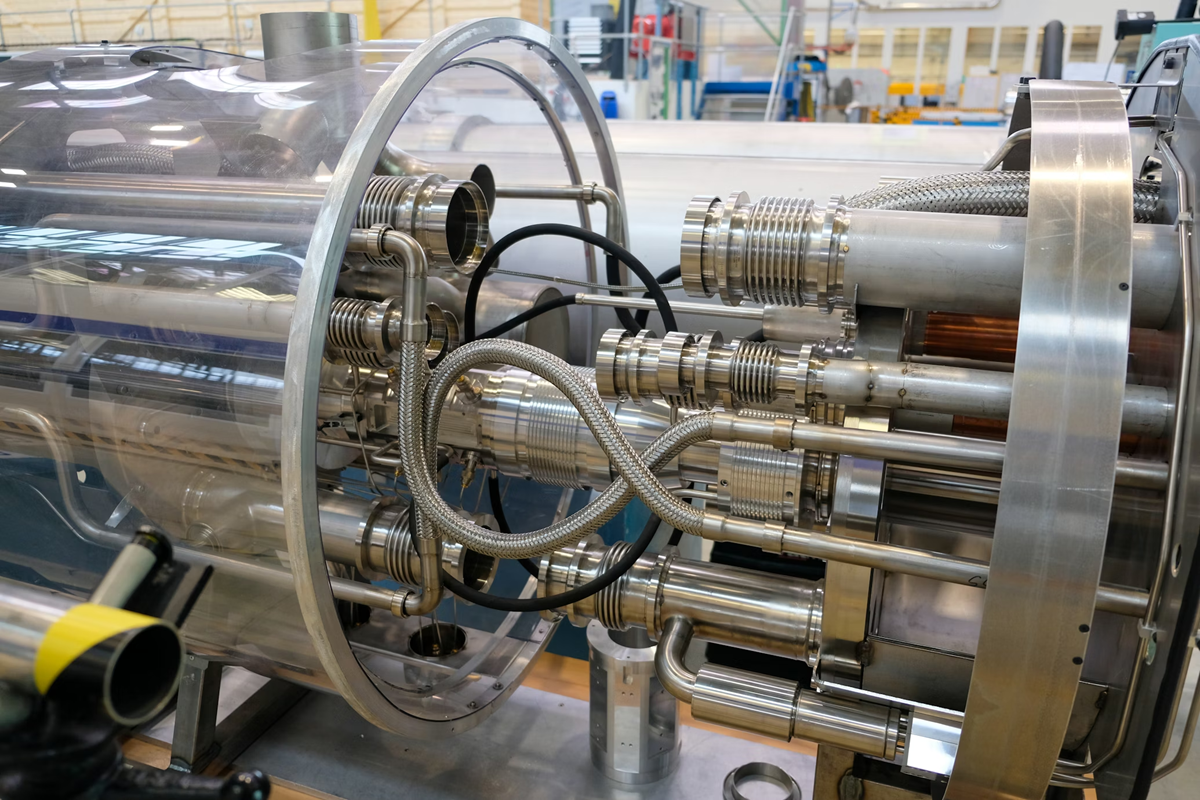

CERN Embeds Tiny AI Models in Silicon for LHC's Real-Time Data Filtering

CERN integrates custom AI into silicon for real-time LHC data filtering.

Research Project Compares AI Agent Personality Assessments to Human Reports

A research project evaluates AI agents' personality assessments against human self-reports and close-other ratings.

Wikipedia Bans AI-Generated Content Amidst Hallucination Concerns

Wikipedia bans AI-generated content, citing accuracy and integrity concerns.

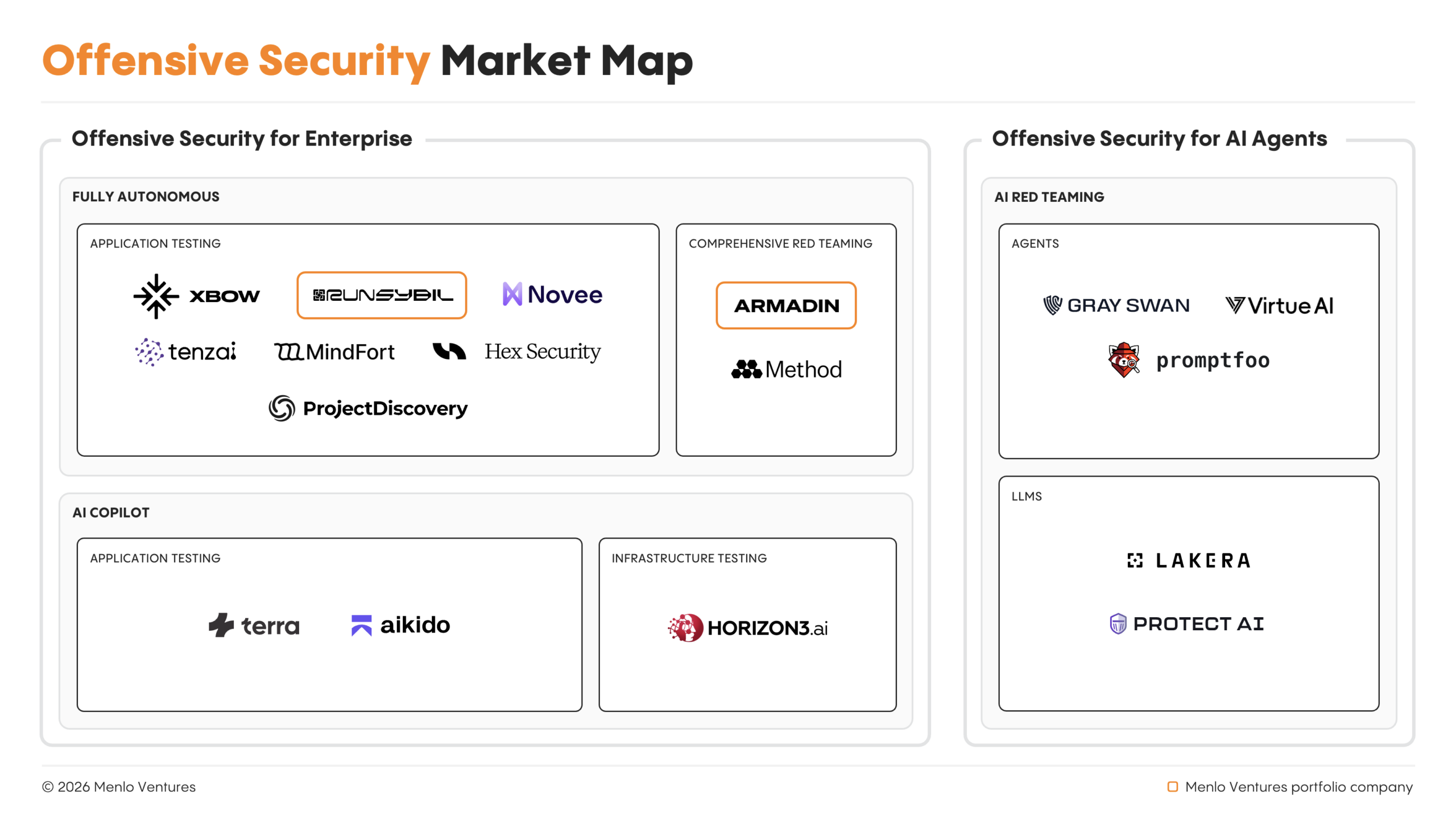

Autonomous AI Agents Spearhead Offensive Cyber Operations, Outpacing Human Pentesters

Autonomous AI agents now lead offensive cyber operations, outpacing human capabilities.

xAI Co-Founders Depart Amidst Company Restructuring

All xAI co-founders have reportedly departed as Musk plans a rebuild.