AI's Philosophical Blind Spot: Data Reveals Struggle with Unresolved Human Knowledge

Sonic Intelligence

The Gist

AI models exhibit higher uncertainty when processing philosophical concepts lacking a clear consensus.

Explain Like I'm Five

"Imagine you're trying to solve a puzzle. If it's a math puzzle, there's usually one right answer, and you know when you've found it. But if it's a puzzle about 'what is happiness?', there's no single right answer, and you could talk about it forever. Computers are like that too; they get confused and keep talking when there's no clear 'right answer' to stop them."

Deep Intelligence Analysis

Empirical data supports the 'Convergence Point' hypothesis, which holds that models struggle when an utterance lacks a clear answer or logical endpoint. Four small language models (8B parameters each) generated significantly more tokens, and showed a larger cumulative decline in tokens per second, when processing philosophical monologues than when handling tasks with clear convergence points, such as everyday narration or scientific statements. Crucially, adding a context-closing signal to philosophical inputs sharply reduced token generation (a 52-59% drop), suggesting that the models respond to the structural completeness of an utterance rather than to keywords alone. Prompt-level entropy measurements also showed that philosophical utterances had the highest internal entropy, indicating that the difficulty is already present in the model's internal state before any output is produced. These findings, corroborated across major large-scale models such as GPT, Claude, Gemini, and Grok, suggest a pervasive architectural characteristic that is independent of model scale.
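The reported 52-59% drop is a relative reduction in generated tokens. As a rough sketch of that arithmetic, the snippet below compares a baseline token count with the count after a context-closing signal is added. The function name `relative_drop` and the token counts are hypothetical, chosen only to illustrate the calculation; they are not figures from the study.

```python
def relative_drop(baseline_tokens: int, closed_tokens: int) -> float:
    """Fractional reduction in generated tokens after adding a closing signal."""
    if baseline_tokens <= 0:
        raise ValueError("baseline_tokens must be positive")
    return (baseline_tokens - closed_tokens) / baseline_tokens


# Hypothetical counts for illustration only; NOT data from the study.
baseline = 1000  # tokens generated for an open-ended philosophical prompt
closed = 480     # tokens generated for the same prompt plus a closing signal

print(f"Token reduction: {relative_drop(baseline, closed):.0%}")
```

A positive value means the closing signal shortened the output; a negative value would mean the output grew longer.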

This structural limitation has significant forward-looking implications for AI development. If AI's current architecture is inherently biased towards convergent problems, its application in fields requiring nuanced, open-ended reasoning—such as ethics, creative arts, or even complex strategic planning—will remain constrained. Future research must explore architectures that can robustly model and navigate ambiguity, perhaps by explicitly incorporating mechanisms for representing divergent perspectives or acknowledging the absence of a single 'correct' answer. Overcoming this 'philosophical blind spot' is not merely an academic exercise but a prerequisite for developing AI that can truly engage with the full spectrum of human intellectual and emotional experience.

_Context: This intelligence report was compiled by the DailyAIWire Strategy Engine (AI-generated; model: Gemini 2.5 Flash). Verified for EU AI Act Art. 50 Compliance._

Impact Assessment

This research suggests AI's limitations may stem from the inherent structure of human knowledge, not just model capacity. Understanding this 'Convergence Point' phenomenon is critical for developing more robust and human-aligned AI, especially in domains requiring nuanced understanding beyond factual recall.

Read Full Story on Zenodo

Key Details

- AI models generate more tokens and show higher internal uncertainty with philosophical monologues than complex math problems.

- The study used four 8B parameter language models (Llama, Mistral, DeepSeek, Qwen3) in a local inference environment.

- Adding a context-closing signal to philosophical utterances reduced token counts by 52% (Llama) and 59% (Qwen3).

- Prompt-level entropy measurements showed philosophical utterances had the highest entropy (e.g., Llama: 1.6967, Mistral: 1.5169).

- The 'Convergence Point' concept defines the presence or absence of a clear answer or logical endpoint in an utterance.
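The summary does not say how prompt-level entropy was computed, or whether the reported values are in nats or bits. One plausible reading, offered here purely as an assumption, is the mean Shannon entropy (in nats) of the model's next-token distribution across prompt positions. The sketch below implements that reading in plain Python; the helper names and the toy logits are hypothetical and do not come from the study.

```python
import math
from typing import List, Sequence


def softmax(logits: Sequence[float]) -> List[float]:
    """Convert raw logits to probabilities (shifted by the max for stability)."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def shannon_entropy(probs: Sequence[float]) -> float:
    """Shannon entropy in nats; zero-probability entries contribute nothing."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


def prompt_entropy(logits_per_position: Sequence[Sequence[float]]) -> float:
    """Assumed metric: mean next-token entropy over all prompt positions."""
    if not logits_per_position:
        raise ValueError("need at least one position")
    entropies = [shannon_entropy(softmax(row)) for row in logits_per_position]
    return sum(entropies) / len(entropies)


# Toy logits over a 4-token vocabulary (hypothetical, for illustration only).
confident = [[10.0, 0.0, 0.0, 0.0], [0.0, 9.0, 0.0, 0.0]]  # peaked distributions
uncertain = [[0.0, 0.0, 0.0, 0.0], [0.1, 0.0, 0.1, 0.0]]   # near-uniform

print(prompt_entropy(confident) < prompt_entropy(uncertain))
```

Under this reading, a prompt whose continuations are wide open yields flatter distributions and therefore a higher score, which matches the direction of the reported result. In a real setup the per-position logits would come from a forward pass of the model rather than hand-written lists.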

Optimistic Outlook

Identifying that AI struggles with the 'unresolved structure of human knowledge' rather than just complexity opens new avenues for research into how models process ambiguity. Future AI could be designed to better navigate open-ended questions, potentially leading to more sophisticated philosophical or creative applications.

Pessimistic Outlook

If AI's fundamental limitations are tied to the unresolved nature of human knowledge, achieving truly human-like understanding in subjective or philosophical domains may be inherently constrained. This could lead to a persistent gap in AI's ability to engage with the nuances of human experience and ethical dilemmas.


Generated Related Signals

AI System Authors Peer-Reviewed Scientific Paper

An AI system independently authored a scientific paper that passed peer review.

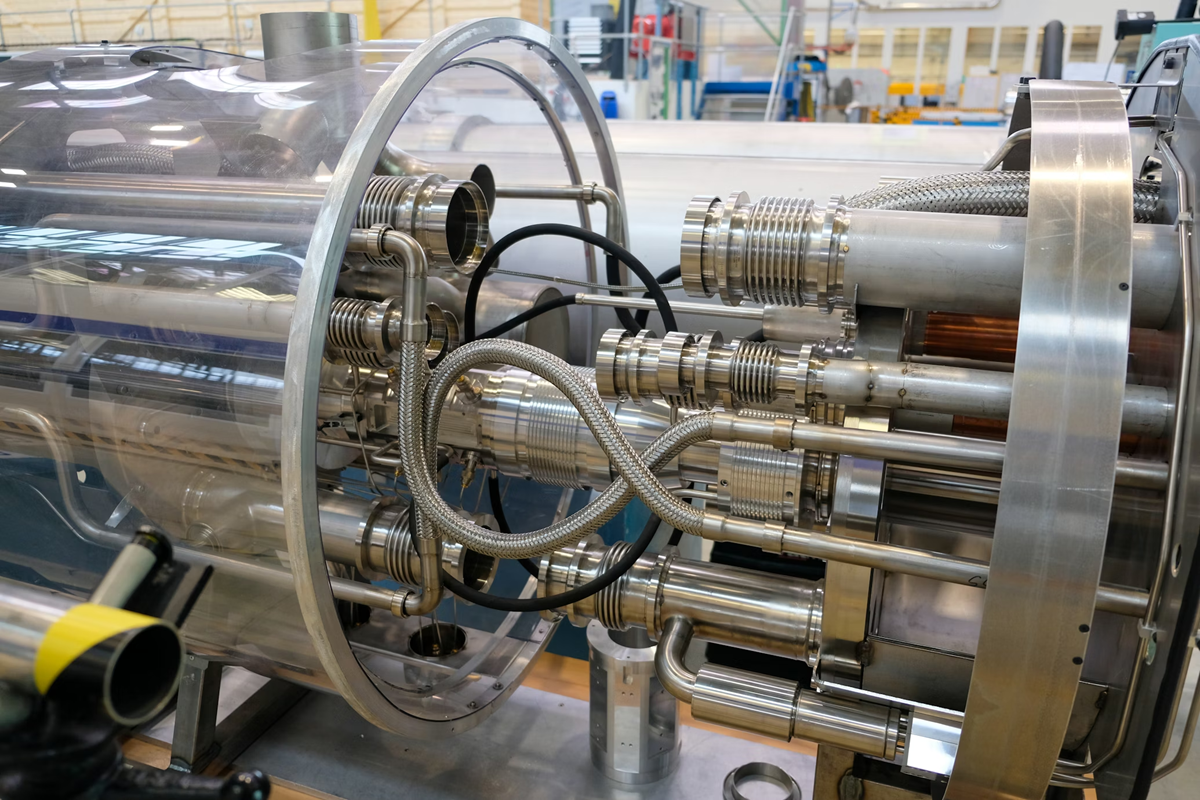

CERN Embeds Tiny AI Models in Silicon for LHC's Real-Time Data Filtering

CERN integrates custom AI into silicon for real-time LHC data filtering.

Research Project Compares AI Agent Personality Assessments to Human Reports

A research project evaluates AI agents' personality assessments against human self-reports and close-other ratings.

Wikipedia Bans AI-Generated Content Amidst Hallucination Concerns

Wikipedia bans AI-generated content, citing accuracy and integrity concerns.

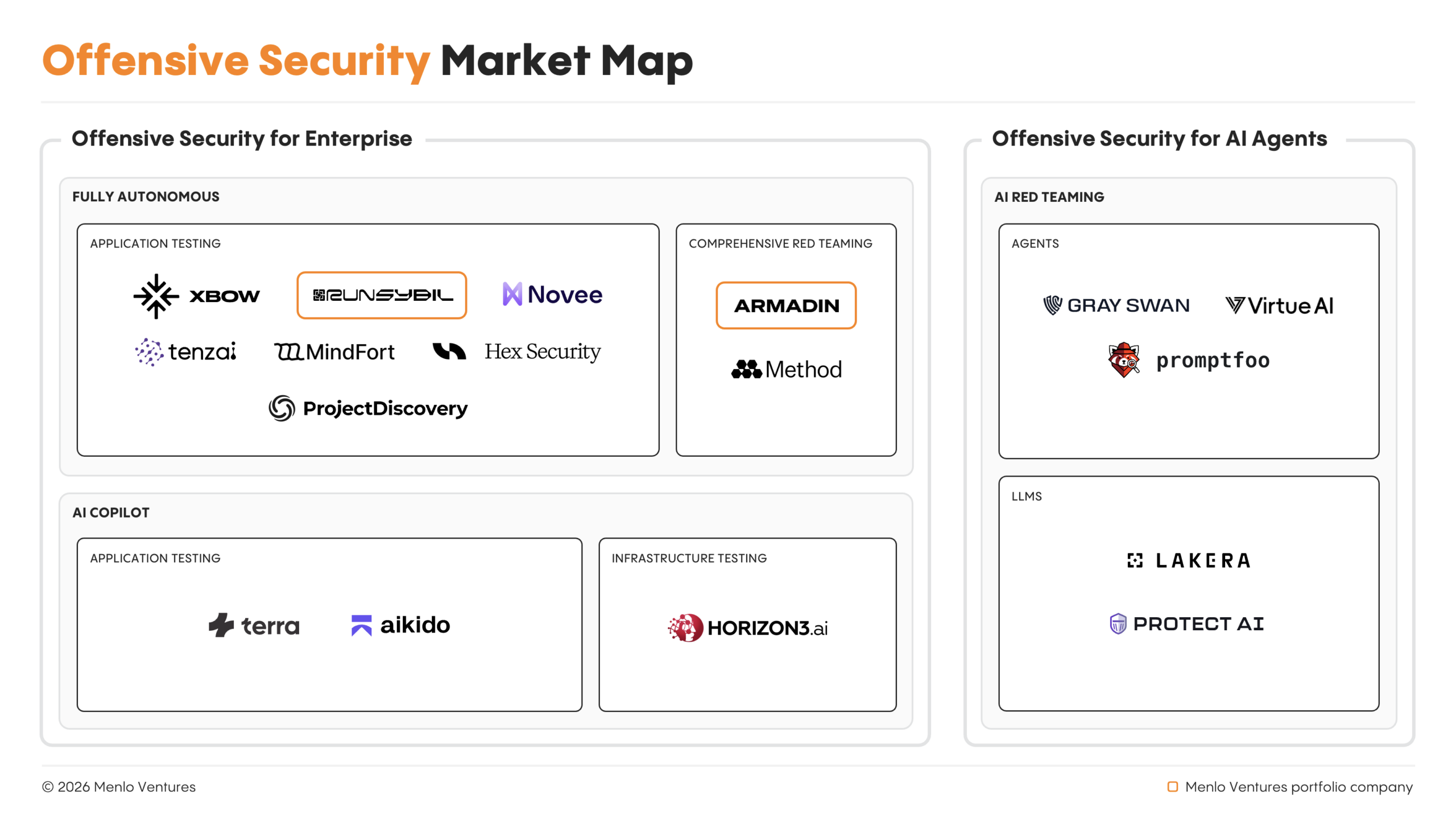

Autonomous AI Agents Spearhead Offensive Cyber Operations, Outpacing Human Pentesters

Autonomous AI agents now lead offensive cyber operations, outpacing human capabilities.

xAI Co-Founders Depart Amidst Company Restructuring

All xAI co-founders have reportedly departed as Musk plans a rebuild.