Claude's Consumer Subscriptions Surge Amid DoD Dispute

Sonic Intelligence

The Gist

Anthropic's Claude paid consumer subscriptions have more than doubled this year.

Explain Like I'm Five

"Imagine a smart talking robot named Claude. Lots of people are now paying to talk to Claude, even more than before! But Claude's company is also having a big argument with the army because the army wants to use Claude for things the company thinks are too dangerous, like making robots fight or watching everyone."

Deep Intelligence Analysis

This consumer adoption data, compiled by Indagari from billions of anonymized credit card transactions across approximately 28 million U.S. consumers, offers a granular view of how consumers are actually spending on AI subscriptions. That most new subscribers choose the $20/month "Pro" tier points to a willingness to pay for enhanced AI capabilities at an accessible price point. At the same time, Anthropic's public dispute with the U.S. Department of Defense (DoD) over the use of its AI models for lethal autonomous operations and mass surveillance has thrust the company into a pivotal ethical debate. The DoD labeled Anthropic a supply chain risk, but a federal judge has since blocked that designation, underscoring how nascent and contested the regulatory environment for advanced AI remains. This dual narrative of rapid consumer growth and high-stakes ethical confrontation places Anthropic at a critical juncture.

Looking forward, Anthropic's ability to sustain this consumer momentum while navigating complex regulatory and ethical challenges will be a key determinant of its long-term market position. The company's principled stand against certain military applications could enhance its reputation among privacy-conscious users, potentially attracting a distinct segment of the AI market. However, the financial and operational implications of a protracted dispute with a major government entity cannot be overstated, especially for its enterprise business. The broader industry will closely watch how Anthropic balances innovation, market expansion, and its stated ethical framework, as this case could set precedents for responsible AI development and deployment across the entire sector.

EU AI Act Art. 50 Compliant.

_Context: This intelligence report was compiled by the DailyAIWire Strategy Engine. Verified for Art. 50 Compliance._

Impact Assessment

This surge in consumer adoption of Claude signals intensifying competition in the LLM market and a growing challenge to established players. The simultaneous public dispute with the DoD highlights the escalating ethical and regulatory complexities surrounding advanced AI deployment, especially concerning military applications and data privacy.

Read Full Story on TechCrunch

Key Details

- Anthropic's Claude paid subscriptions more than doubled in the past year.

- Consumer paid subscriber growth hit "record numbers" between January and February.

- The majority of new paid subscribers opt for the $20/month "Pro" tier.

- The data comes from Indagari's analysis of billions of anonymized credit card transactions from ~28 million U.S. consumers.

- Anthropic is involved in a public dispute with the U.S. Department of Defense regarding AI usage for lethal autonomous operations and mass surveillance.

Optimistic Outlook

Increased consumer engagement with Claude could drive further innovation in conversational AI, leading to more robust and user-centric models. Anthropic's stance against certain military uses could also set a precedent for ethical AI development, fostering greater public trust and responsible deployment across the industry.

Pessimistic Outlook

The ongoing conflict with the DoD could significantly impact Anthropic's enterprise business, potentially limiting its market reach and financial stability. Furthermore, intense competition for consumer mindshare might lead to aggressive marketing tactics or feature races that compromise model safety or data privacy in pursuit of growth.


Generated Related Signals

LLM Value Alignment: Supervised Fine-Tuning Sets Core Ethics, Preference Optimization Struggles to Realign

Supervised fine-tuning primarily establishes LLM values, with subsequent preference optimization having limited realignm...

OpenAI Scraps Sora Amidst Compute Costs and Stiff Competition

OpenAI discontinued its Sora video generation model due to high compute costs and intense market competition.

AI Reverse-Engineers Apollo 11 Code, Challenging Legacy System Limits

AI successfully reverse-engineered 1960s Apollo 11 assembly code, defying legacy system limitations.

AI System Authors Peer-Reviewed Scientific Paper

An AI system independently authored a scientific paper that passed peer review.

Wikipedia Bans AI-Generated Content Amidst Hallucination Concerns

Wikipedia bans AI-generated content, citing accuracy and integrity concerns.

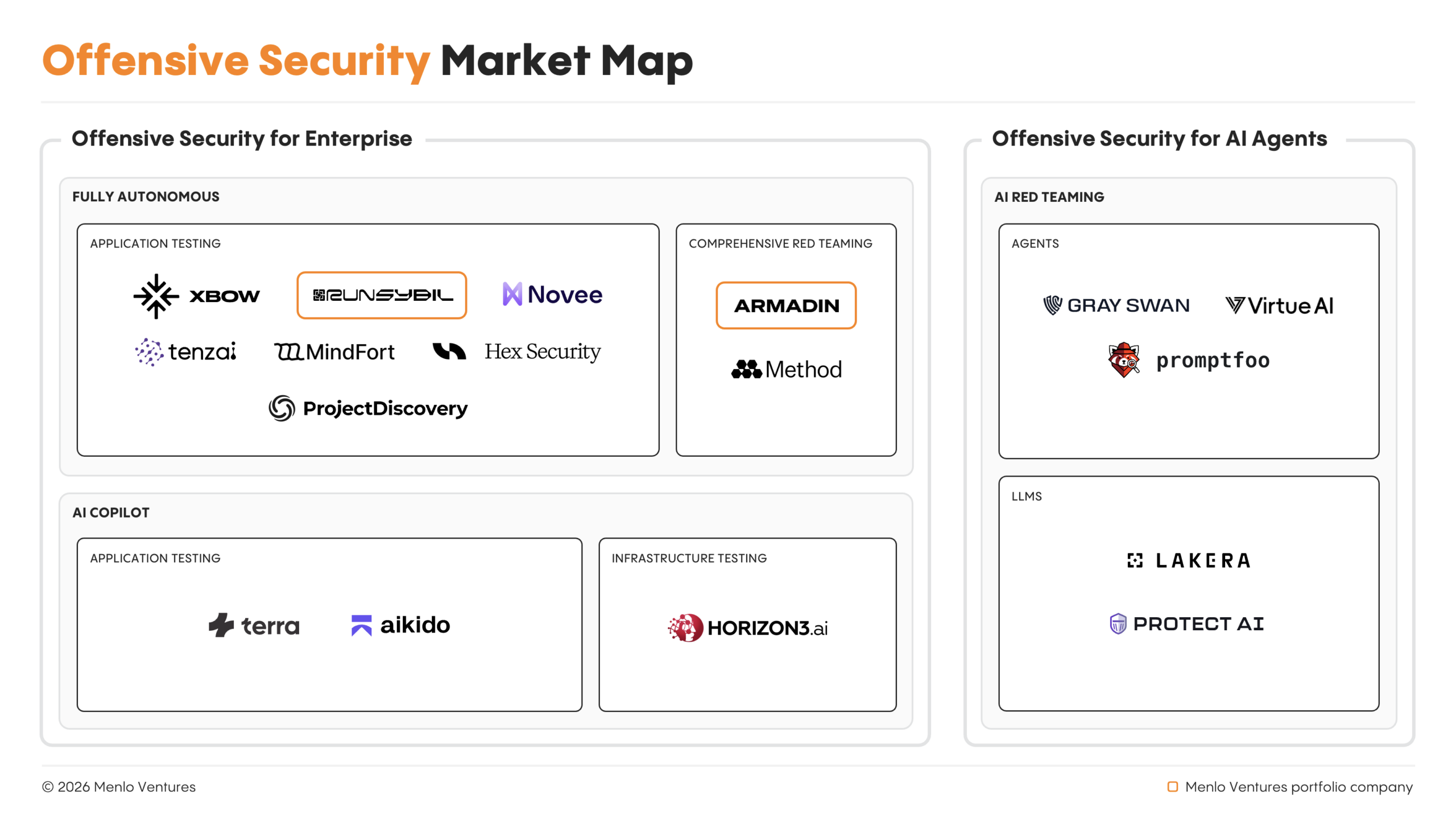

Autonomous AI Agents Spearhead Offensive Cyber Operations, Outpacing Human Pentesters

Autonomous AI agents now lead offensive cyber operations, outpacing human capabilities.