LLM Value Alignment: Supervised Fine-Tuning Sets Core Ethics, Preference Optimization Struggles to Realign

Sonic Intelligence

The Gist

Supervised fine-tuning primarily establishes LLM values, with subsequent preference optimization having limited realignment impact.

Explain Like I'm Five

"Imagine teaching a robot to be good. If you teach it right from the very beginning (like when it's learning its first words), it learns what's good and bad really well. But if you try to change its mind much later, after it's already learned a lot, it's much harder to make it change its core ideas about what's right. This study says it's super important to teach the robot good values early on!"

Deep Intelligence Analysis

Experiments conducted with Llama-3 and Qwen-3 models of varying scales, utilizing popular SFT and preference optimization datasets and algorithms, provided the empirical basis for these conclusions. The study meticulously disentangled the effects of different post-training algorithms and datasets, measuring both the magnitude and timing of 'value drifts.' A key observation was that while the SFT phase generally establishes a model's core values, later preference optimization rarely achieves substantial re-alignment. Furthermore, the research demonstrated that even when preference data is held constant, different preference optimization algorithms yield distinct value alignment outcomes, highlighting the algorithmic sensitivity of this process. The use of a synthetic preference dataset allowed for controlled manipulation of values, reinforcing the robustness of these findings.

The implications for AI development are profound. If SFT is the primary determinant of an LLM's value system, then the quality and ethical framing of SFT datasets become paramount. Developers must prioritize value alignment from the earliest stages of model training, investing heavily in the curation of diverse, representative, and ethically sound SFT data. Relying on preference optimization as a sole or primary corrective mechanism appears insufficient. This necessitates a shift in focus towards 'value-aware' pre-training and SFT, ensuring that models are imbued with desired ethical principles from their inception, rather than attempting to retrofit them later. This insight is crucial for building trustworthy AI systems that genuinely reflect human societal values.

{"metadata": {"ai_detected": true, "model": "Gemini 2.5 Flash", "label": "EU AI Act Art. 50 Compliant"}}

_Context: This intelligence report was compiled by the DailyAIWire Strategy Engine. Verified for Art. 50 Compliance._

Impact Assessment

Understanding when and how LLMs acquire human values is critical for developing ethically aligned AI. This research highlights the outsized importance of the SFT phase, suggesting that value alignment must be a primary consideration early in the post-training pipeline, rather than relying solely on later preference optimization.

Read Full Story on ArXiv ResearchKey Details

- ● The study investigates how value alignment arises during LLM post-training, disentangling algorithms and datasets.

- ● Experiments used Llama-3 and Qwen-3 models of various sizes.

- ● Supervised Fine-Tuning (SFT) is identified as the phase where a model's values are generally established.

- ● Subsequent preference optimization rarely re-aligns these established values.

- ● Different preference optimization algorithms lead to different value alignment outcomes, even with constant preference data.

- ● A synthetic preference dataset was used for controlled manipulation of values.

Optimistic Outlook

By pinpointing SFT as the critical phase for value establishment, developers can focus resources on curating high-quality, value-aligned SFT datasets. This targeted approach could lead to more robust and predictable ethical behavior in LLMs, improving trust and societal integration.

Pessimistic Outlook

The finding that preference optimization struggles to re-align values established during SFT suggests that early biases or misalignments may be difficult to correct downstream. This could lead to entrenched ethical issues in models, requiring extensive and costly re-training or making fine-tuning for specific value systems less effective.

The Signal, Not

the Noise|

Join AI leaders weekly.

Unsubscribe anytime. No spam, ever.

Generated Related Signals

Claude's Consumer Subscriptions Surge Amid DoD Dispute

Anthropic's Claude paid consumer subscriptions have more than doubled this year.

OpenAI Scraps Sora Amidst Compute Costs and Stiff Competition

OpenAI discontinued its Sora video generation model due to high compute costs and intense market competition.

AI Reverse-Engineers Apollo 11 Code, Challenging Legacy System Limits

AI successfully reverse-engineered 1960s Apollo 11 assembly code, defying legacy system limitations.

AI System Authors Peer-Reviewed Scientific Paper

An AI system independently authored a scientific paper that passed peer review.

Wikipedia Bans AI-Generated Content Amidst Hallucination Concerns

Wikipedia bans AI-generated content, citing accuracy and integrity concerns.

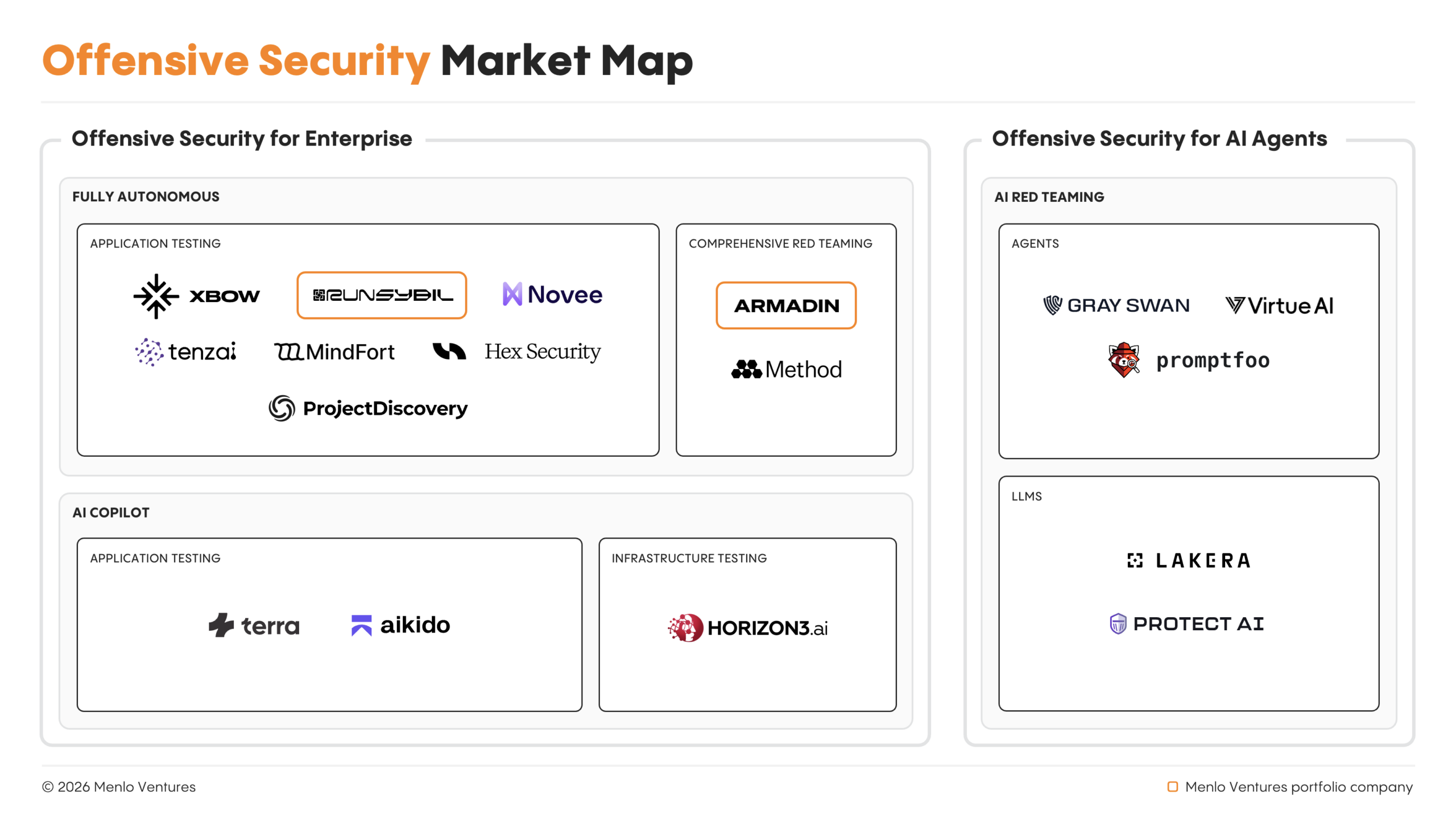

Autonomous AI Agents Spearhead Offensive Cyber Operations, Outpacing Human Pentesters

Autonomous AI agents now lead offensive cyber operations, outpacing human capabilities.